Greg Sutcliffe is a long-time member and now community lead of the Foreman community. Foreman is a lifecycle management tool for physical and virtual servers. He's been studying how the real-world application of community metrics gives insight into its effectiveness and discovering the gap that exists between the ideal and the practical. He shares what insights he's found behind the numbers and how he is using them to help the community grow.

In this interview, Sutcliffe spoke with me about the metrics they are using, how they relate to the community's goals, and which ones work best for them. He also talks about his favorite tooling and advice for other community managers looking to up their metrics game.

James Falkner: Thanks for doing this interview! Can you tell us a bit about yourself, the Foreman community, and your role in it?

Greg Sutcliffe: Thanks for having me!

Foreman's been around for over seven years now, and I've been involved with the project for about six years. I've been a user and community member, contributor, paid developer, and now the community lead, so I like to think that gives me a broad perspective.

The community is similar to many projects; it's split broadly into the users and the developers. We obviously get huge value from the people who choose to spend their time contributing code to the project, but I do feel that it's easy to overlook the users who contribute in other ways. Our community is very active in the testing and reporting areas (we get a lot of feedback and bug reports during Release Candidate phases of new releases, for example), and we have full localization support, so there's contribution there, as well as in the docs and many other areas that aren't strictly coding.

My role is to support the community and help it to grow, which is a fairly broad aim, but the main thing I'm really interested in is growing the user base. In general, I don't think you can directly grow the developer base anyway, so the aim is to bring in more contributions of all the kinds I've just listed, as well as taking over the world (as we all want to do).

To do that, I focus on things like content creation (e.g., we do regular demos, deep dives, and case studies on YouTube), event management, organizing speakers for CFPs, and so on. The challenge is getting the word out to the people who haven't heard of us yet—even after seven years, I still hear, "Wow, this will solve a ton of my problems," when I do demos.

Metrics don't really help there, except to know that the community is growing (but it probably would have anyway). However, another part of my role is to demonstrate the value of community to those who aren't convinced yet, and that's where metrics really shine. Showing the amount of value you can derive from a community can really help you when lobbying for a community budget.

JF: To what extent are you using metrics (both qualitative and quantitative) in the Foreman community?

GS: Good question. At the moment, we do a lot of collection, but no real visualization. This is really because I'm a terrible frontend coder, so while I have a giant DB of data, I don't really have anything to graph it with. That's a sore point for me, as I'd really like a nice dashboard that I can show to the community.

I do, however, run some point-in-time stuff and month-to-month comparisons in our regular sprint demos (which are public, so the community gets an update). This is not ideal because no one can verify my claims themselves, but it's better than nothing.

In terms of what metrics we have, there's the usual stuff (Redmine bugs opened/closed, GitHub PRs opened/closed, package downloads), which is fairly easy to get but doesn't have much value other than showing the "community is doing stuff." I'm also starting to run queries that tell me things like "percentage of bugs opened by the community versus Red Hat devs" and "percentage of mailing list first responses not from Red Hat devs." These numbers are a start on that insight into the value provided by the community, and I have plans for more such metrics.

I'll add a note, though: a colleague once said, "You are what you measure." I try to remember that given the lack of sufficiently specific metrics (I think you call them second-order metrics) so far, and I'm trying to not get tied to them. When we have better metrics, we can use them more forcefully.
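As a rough illustration of the "percentage of bugs opened by the community" query described above, here is a minimal Python sketch. It is not the Foreman project's actual code: the `Bug` record, the `EMPLOYEE_DOMAINS` set, and the idea of classifying reporters by email domain are all assumptions made for the example.

```python
from dataclasses import dataclass

# Assumption: reporters are classified by email domain. A real setup
# would more likely use a maintained list of employee accounts.
EMPLOYEE_DOMAINS = {"redhat.com"}


@dataclass
class Bug:
    id: int
    reporter_email: str  # hypothetical field name


def is_community(email: str) -> bool:
    """Return True if the address is outside the employee domains."""
    domain = email.rsplit("@", 1)[-1].lower()
    return domain not in EMPLOYEE_DOMAINS


def community_share(bugs: list[Bug]) -> float:
    """Percentage of bugs whose reporter is a community member."""
    if not bugs:
        return 0.0
    community = sum(1 for bug in bugs if is_community(bug.reporter_email))
    return 100.0 * community / len(bugs)
```

The same shape would work for "first responses not from Red Hat devs" by swapping the bug reporter for the author of the first reply in each mailing list thread.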

JF: Which specific metrics or metrics types do you think benefit you as community manager the most?

GS: I guess this ties directly into the two halves of my role above. The first (community growth) really needs metrics to do with stability (bugs), user retention, community satisfaction, etc. We do a yearly community survey, which really helps here, but there's more we can glean from the data we have.

The second (value created) is specific to the fact that we're a Red Hat project with a downstream product (Satellite 6, Red Hat's system management product). There's work that will get done one way or another, but if the community does it, then Red Hat now has resources to spare that can be used to further the project in other ways; it's a positive circle. Metrics like bugs found (or even closed!) before our QE team ever sees them, or how much the community supports itself (instead of devs spending time on support), are really useful here.

JF: And similarly, which metrics do you think community members would benefit from seeing and understanding?

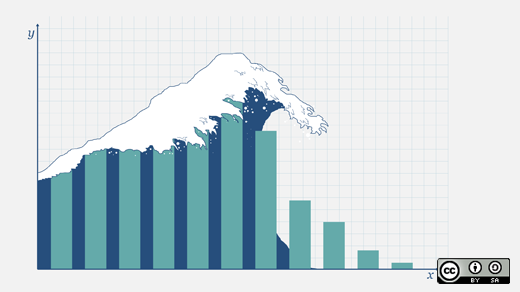

GS: Community growth and activity graphs like PR-time-to-commit or bugs-time-to-close help us to understand if we are going in the right direction or if we need help in some area of the project.

The value created may not be of huge interest outside Red Hat, but the same graphs can be used to tell another story. Knowing that a significant proportion of the bugs raised and fixed, the mailing list support, the candidate testing, etc., comes from the community helps us to know that we're a self-sustaining community, and we can have confidence in the future of the project.
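A figure such as bugs-time-to-close can be computed from open and close timestamps alone. The sketch below is one possible approach under stated assumptions: the input shape (pairs of `opened` and `closed` datetimes) and the choice of the median rather than the mean are illustrative, not something the interview specifies.

```python
from datetime import datetime
from statistics import median


def median_days_to_close(issues):
    """Median days from open to close.

    `issues` is an iterable of (opened, closed) datetime pairs
    (an assumed shape); issues still open have closed=None and
    are skipped. Returns None if nothing has been closed yet.
    """
    durations = [
        (closed - opened).total_seconds() / 86400  # seconds per day
        for opened, closed in issues
        if closed is not None
    ]
    return median(durations) if durations else None
```

The median is used here because a handful of very old bugs can dominate a mean; tracking it month to month would give the kind of trend line described above.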

JF: What are your favorite tools or frameworks for analyzing and reporting project metrics?

GS: For collection, I'm using the old MetricsGrimoire tools (bicho for Redmine, pullpo for GitHub) along with a Google Groups webcrawler because Groups has no API (sigh). There's also some homebrew stuff for importing that Groups data into the DB and for parsing Apache logs from our Yum/Apt servers.

As I mentioned, I don't currently have much visualization on top of that. I was playing with Redash.io for a bit, but I didn't get too far before getting sucked into other things. I'd love to learn about alternatives for parsing data (especially data in a MySQL or PostgreSQL db, because that's what I have).
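For the Apache log parsing mentioned above, a minimal sketch of counting package downloads might look like the following. It assumes the servers write the standard Apache common or combined log format and that a successful `GET` of a `.rpm` or `.deb` file counts as one download; the function name and those counting rules are hypothetical, not taken from the Foreman tooling.

```python
import re
from collections import Counter

# Matches the leading fields of Apache's common/combined log format:
# host ident user [time] "METHOD path protocol" status size ...
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) (?P<size>\S+)'
)


def count_package_downloads(lines):
    """Count successful GETs of .rpm/.deb files, keyed by file name."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        if match["method"] != "GET" or match["status"] != "200":
            continue  # assumption: only full, successful fetches count
        path = match["path"]
        if path.endswith((".rpm", ".deb")):
            counts[path.rsplit("/", 1)[-1]] += 1
    return counts
```

Note that this ignores partial (206) responses and mirrors or caches in front of the servers, so the result is best read as a lower bound on real downloads.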

JF: Describe the ideal metric for the Foreman community, regardless of the time it might take to gather and report it.

GS: I have a few that are hard or impossible to get.

First is the impossible metric—why people leave. Once someone leaves the community, it's pretty much impossible to know why or even that they're gone. They're not going to see your blogs, your surveys, etc., so you can't reach them. But that knowledge of their issues is probably relevant to improving your project, especially if you get the same thing from enough people.

Second is the user base itself. As with many open source projects, we simply don't know who uses our software. There's no phone-home data collection in Foreman, so unless you self-select by communicating with us, we'll never know. I'd love to know things like where our users are, so that I know where to focus, say, meetup programs.

JF: What advice would you give to a community manager who is just starting out and is interested in knowing more through metrics?

GS: I find this a fascinating field, and I wish I had more time to spend on it. I'd definitely say that you want to start with your goals first. There's a ton of things you could measure, but getting the data may take time and effort that is way higher than the value of the data. This is a lesson I learned the hard way, and a mistake I'm still rectifying.

JF: Anything else you'd like our readers to know?

GS: If you ever have constructive criticism for any project, send it. I can't speak for other community leads, but I love hearing from and talking to users, even the highly critical ones. It means they care, and it helps us to do better as a project.

If you ever need to do that for Foreman, I'm @gwmngilfen in most places.
