Twitter has shifted its way of thinking about how to launch a new service thanks to the Apache Mesos project, an open source technology that brings together multiple servers into a shared pool of resources. It's an operating system for the data center.

"When is the last time you've seen the fail whale on Twitter?" said Chris Aniszczyk, Head of Open Source at Twitter.

I caught up with Chris to learn how the Mesos project is helping Twitter spend less time prototyping and more energy focusing on how a new service will consume company resources. It's proving to be a real cost saver and is helping to reduce downtime. Last time I talked with Chris, we looked at the open source technology behind Twitter. In this interview, learn more about the Mesos project, why Twitter uses it so heavily, and how to get involved in the project.

For an in-person experience, check out MesosCon later this month for presentations from experts in the community, including Twitter and Google. The conference is co-located with LinuxCon and, in addition to talks and sessions, there will be a full-day hackathon to get your hands on the code.

opensource.com

For the non-technical, what is the Apache Mesos project?

Mesos was originally born out of a research project at Berkeley's AMPLab, and today it powers the infrastructure of large companies like Airbnb, eBay, Netflix, and Twitter (you can read more about it in this Wired article). Furthermore, it's a mature open source project developed by a community of people at the Apache Software Foundation (follow @ApacheMesos). The Mesos community is getting ready to host its first conference, called MesosCon, in late August.

As for what it does, at a high level you can think about Mesos as a highly available and fault-tolerant operating system kernel for your public or private cloud (in data centers). Mesos combines your servers into one shared pool (one large computer) of intelligently allocated resources that any application can use, reducing complexity and improving efficiency.

It's really as simple as that: you can just think of Mesos taking many computers in data centers and combining them into one large computer... so you're able to program for the data center just like you would program for your laptop.

For the technical person, how does Apache Mesos help run applications on a shared pool of servers?

Those who are interested can read the original Mesos research paper [PDF]. To start off with a simple example, inside the typical cloud there are many computers running a variety of workloads, like big data processing via Hadoop or Spark... or even running the website you're currently browsing.

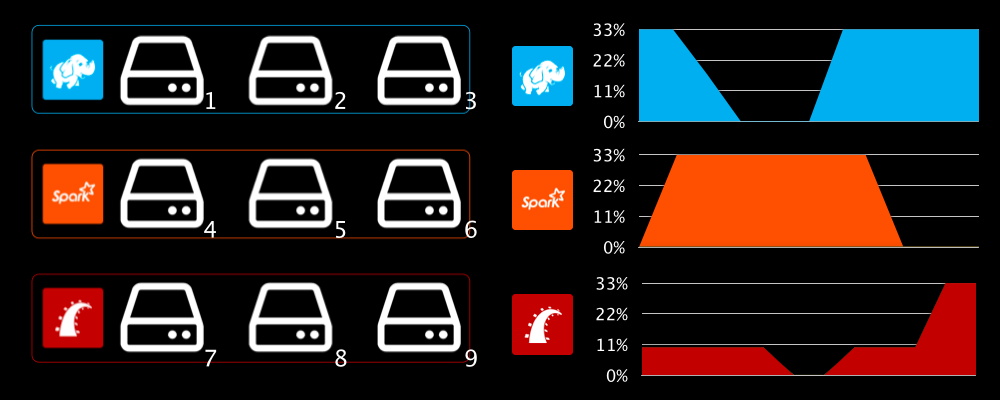

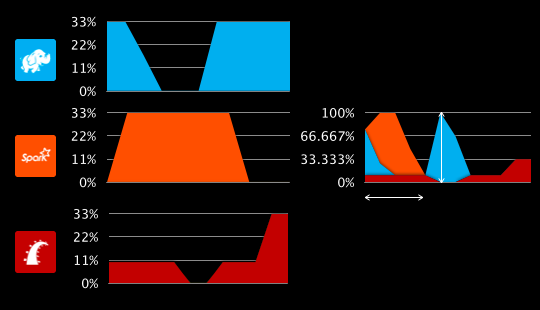

Typically, these workloads run on statically partitioned computers that are only utilized when their respective workloads are executing, which commonly results in idle resources (which could be used by other applications). Mesos allows resource sharing between applications (using Mesos frameworks), which increases both throughput and utilization. In the example below, when your Hadoop jobs are done processing, other applications can take advantage of the idle resources.

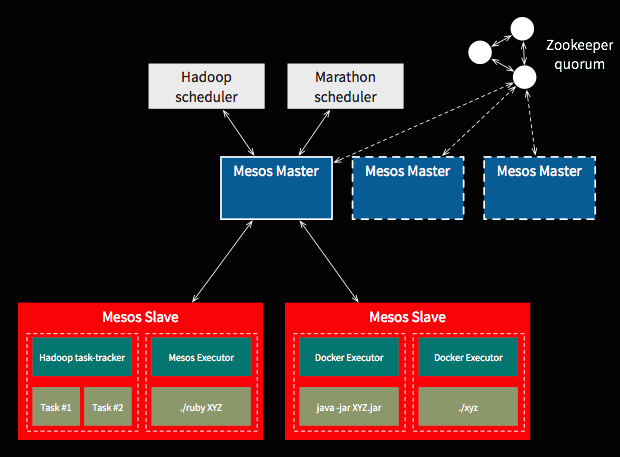

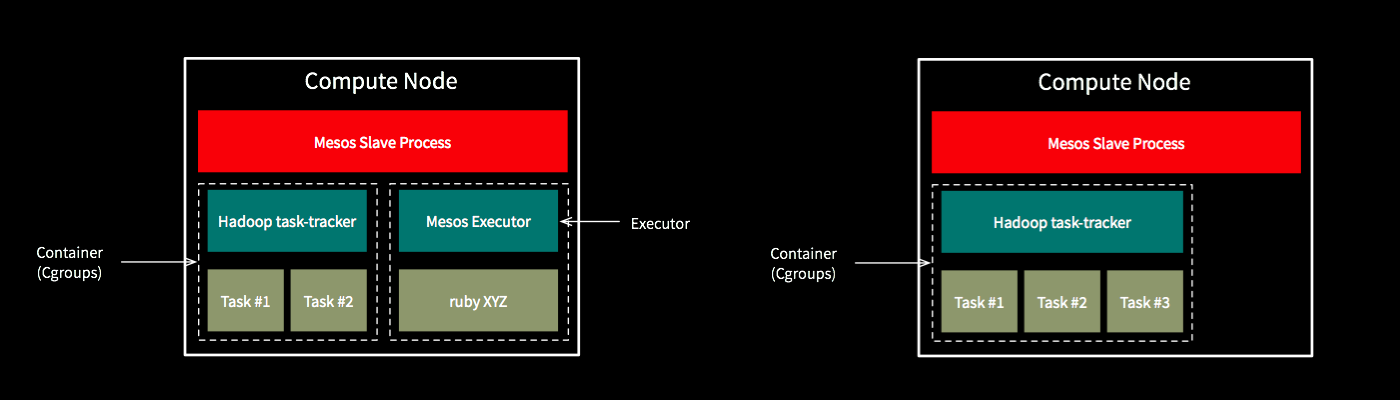
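To put rough numbers on the utilization argument, here is a minimal sketch with made-up demand figures (the workloads, core counts, and time slots are all hypothetical, not Twitter data). It compares two statically partitioned 8-core clusters against one shared pool sized for the peak combined demand.

```python
# Hypothetical demand, in cores, for two workloads over four time slots.
hadoop_demand = [8, 8, 0, 0]   # batch job finishes after slot 2
web_demand = [2, 2, 6, 6]      # web traffic picks up later

PARTITION = 8  # cores statically reserved for EACH workload (assumed)


def utilization(used_per_slot, capacity):
    """Fraction of capacity actually used, averaged over all slots."""
    return sum(used_per_slot) / (capacity * len(used_per_slot))


# Static partitioning: each workload owns its 8 cores even when idle.
static_used = [min(h, PARTITION) + min(w, PARTITION)
               for h, w in zip(hadoop_demand, web_demand)]
static_util = utilization(static_used, 2 * PARTITION)

# Shared pool: one cluster sized for the peak COMBINED demand.
combined = [h + w for h, w in zip(hadoop_demand, web_demand)]
pool_size = max(combined)
shared_util = utilization(combined, pool_size)

print(f"static: {static_util:.0%} of 16 cores, shared: {shared_util:.0%} of {pool_size} cores")
```

With these invented numbers the same work fits on 10 shared cores instead of 16 partitioned ones, because the two workloads peak at different times.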

How does this actually work at a low level? In essence, Mesos consists of master and slave nodes which assist an application in running tasks in a cluster. For high availability purposes, you usually have multiple masters coordinated by Apache ZooKeeper, which handles leader election. Tasks are the unit of execution in Mesos, and the master node schedules those tasks to run on a slave's available resources.

A Mesos slave relies on Linux containers via cgroups for resource isolation (the dotted line in the figure above) which includes resource types being offered like CPU bounds, RAM, disk space, I/O network controller, and more. You can even use Docker containers as the packages that you run and orchestrate on top of Mesos. Furthermore, because Mesos tracks and enforces resource constraints, it can dynamically grow the size of the respective slaves depending on when tasks complete and the needs of the cluster.

Technically, Mesos uses a two-level scheduling model where Mesos delegates the task of scheduling to Mesos frameworks. Mesos decides how many resources each framework gets and makes resource offers to frameworks. Frameworks can choose to accept or decline an offer and optimize how their subtasks are given resources. For example, a framework like Hadoop can use this to accept offers based on data locality.

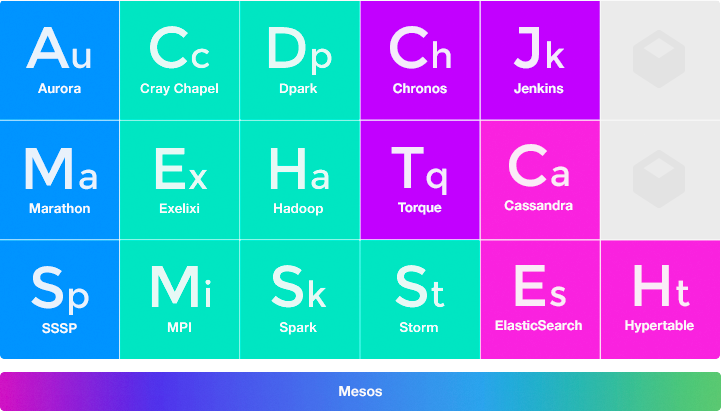
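The offer cycle described above can be sketched as a toy simulation. This is not the Mesos API: the `Offer`, `Task`, `LocalityFramework`, and `allocate` names are hypothetical, each offer launches at most one task, and the "master" simply tries frameworks in list order rather than applying a real fairness policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Offer:
    slave: str      # which slave the free resources live on
    cpus: float
    mem_mb: int


@dataclass
class Task:
    name: str
    cpus: float
    mem_mb: int
    preferred_slave: Optional[str] = None  # stand-in for data locality


class LocalityFramework:
    """Toy framework (second scheduling level): decides per offer."""

    def __init__(self, name, pending):
        self.name = name
        self.pending = list(pending)
        self.launched = []  # (task name, slave) pairs

    def resource_offer(self, offer):
        for task in self.pending:
            fits = task.cpus <= offer.cpus and task.mem_mb <= offer.mem_mb
            local = task.preferred_slave in (None, offer.slave)
            if fits and local:
                self.pending.remove(task)
                self.launched.append((task.name, offer.slave))
                return task  # accept the offer
        return None  # decline the offer


def allocate(offers, frameworks):
    """Toy master (first scheduling level): picks who sees each offer."""
    for offer in offers:
        for framework in frameworks:
            if framework.resource_offer(offer) is not None:
                break  # offer accepted; move on to the next one


offers = [Offer("slave-1", 4, 8192), Offer("slave-2", 4, 8192)]
hadoop = LocalityFramework(
    "hadoop", [Task("map-0", 2, 2048, preferred_slave="slave-2")])
web = LocalityFramework("web", [Task("frontend", 1, 1024)])

allocate(offers, [hadoop, web])
print(hadoop.launched, web.launched)
```

In this run the Hadoop-style framework declines the offer from `slave-1` because its task's data lives on `slave-2`, so the web framework takes it; the second offer then goes to Hadoop. The master never needs to know why an offer was declined, which is the point of delegating that decision.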

To make your life easier, there's a growing list of Mesos frameworks like Marathon, Chronos, and Aurora you can take advantage of so you don't have to write your own.

How does Twitter use Apache Mesos in their data centers?

As of today, Twitter has over 270 million active users who produce 500+ million tweets a day, with peaks of 150k+ tweets per second, and more than 100TB of compressed data per day.

Architecturally, Twitter is mostly composed of services, most of them built with the open source project Finagle, representing the core nouns of the platform such as the user service, timeline service, and so on. Mesos allows these services to scale to tens of thousands of bare-metal machines and leverage a shared pool of servers across data centers.

Furthermore, and more importantly, Mesos has transformed the way engineers think about launching services at Twitter. Instead of thinking about static machines, engineers think about what resources (e.g., CPU, memory, and disk) their service requires and delegate to the Apache Aurora scheduler to efficiently run the service on Mesos. The result has been a reduction in the time between prototyping and launching new services, making it easier to ship projects. On top of that, there are real capex and opex savings since fewer machines are needed and there is less downtime.

When is the last time you've seen the fail whale on Twitter?
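The shift from "which machines?" to "which resources?" can be illustrated with a small sketch. It is not Aurora's configuration format or its placement algorithm: `ServiceSpec`, `Machine`, and `place` are hypothetical names, the numbers are invented, and placement is plain first-fit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceSpec:
    """What an engineer declares: resources per instance, not hosts."""
    name: str
    instances: int
    cpus: float
    ram_mb: int
    disk_mb: int


@dataclass
class Machine:
    host: str
    cpus: float
    ram_mb: int
    disk_mb: int

    def try_reserve(self, spec):
        """Reserve one instance's worth of resources if they fit."""
        if (spec.cpus <= self.cpus and spec.ram_mb <= self.ram_mb
                and spec.disk_mb <= self.disk_mb):
            self.cpus -= spec.cpus
            self.ram_mb -= spec.ram_mb
            self.disk_mb -= spec.disk_mb
            return True
        return False


def place(spec, machines):
    """First-fit placement; returns the host chosen for each instance.

    Reservations made before a failure are not rolled back in this sketch.
    """
    placements = []
    for _ in range(spec.instances):
        for machine in machines:
            if machine.try_reserve(spec):
                placements.append(machine.host)
                break
        else:
            raise RuntimeError(f"not enough resources for {spec.name}")
    return placements


pool = [Machine("host-a", 4.0, 8192, 50_000),
        Machine("host-b", 4.0, 8192, 50_000)]
timeline = ServiceSpec("timeline", instances=3,
                       cpus=1.5, ram_mb=2048, disk_mb=1024)

placements = place(timeline, pool)
print(placements)
```

The engineer only writes the `ServiceSpec`; which hosts end up running the three instances is decided by the scheduler and can change whenever the pool does.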

Tell us about MesosCon happening in August 2014. Who should attend and what can they expect?

MesosCon is a conference co-located with LinuxCon and happening on August 21-22, 2014. Anyone interested in learning about the Mesos ecosystem or how companies like Twitter and Google manage their computing infrastructure should attend.

The first day will start off with keynotes from Ben Hindman (Twitter) and John Wilkes (Google) and is dedicated to workshops and talks featuring:

- Mesos frameworks: including Apache Aurora, Marathon, Spark, and a stream processing framework developed by Netflix.
- An operations perspective on Mesos: including talks on the challenges of running an elastic cluster, and using Docker with Mesos.
- Presentations from companies who use Mesos in production: including eBay and Airbnb.

On the second day, there will be a hackathon to give attendees an opportunity to learn about or contribute to the project based on their own or the community's needs. It's really a unique event where you can learn more about Mesos internals and collaborate with others in the ecosystem to improve Mesos.

How does someone learn more about Apache Mesos, get started, and join the community?

To learn more about Mesos, I highly recommend watching Ben Hindman's recent presentation on Mesos.

To get started quickly with Mesos, I like to point people to the Elastic Mesos service, which helps you spin up a Mesos cluster quickly on EC2 and helps alleviate the typical setup pains you see with any distributed system.

To get involved with the community, I recommend taking a look at the Mesos community site and starting with the #mesos IRC channel on freenode, the user mailing list, and of course tweeting at the @ApacheMesos account.
