Today we'll discuss how to use the Beautiful Soup library to extract content from an HTML page and convert it to a Python list or dictionary.

What is web scraping, and why do I need it?

The simple answer is this: Not every website has an API to fetch content. You might want to get recipes from your favorite cooking website or photos from a travel blog. Without an API, extracting the HTML, or scraping, might be the only way to get that content. I'm going to show you how to do this in Python.

Not all websites take kindly to scraping, and some may prohibit it explicitly. Check with the website owners if they're okay with scraping.
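One machine-readable signal worth checking is the site's robots.txt file. Here's a minimal sketch using Python's built-in urllib.robotparser. The robots.txt content, the my-scraper user agent, and the example.com URLs are all made up for illustration, and passing this check is not a substitute for the owner's permission or the site's terms of use.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example runs offline.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# "my-scraper" and the example.com URLs are placeholders.
print(is_allowed(SAMPLE_ROBOTS_TXT, "my-scraper", "https://example.com/technology"))
print(is_allowed(SAMPLE_ROBOTS_TXT, "my-scraper", "https://example.com/private/notes"))
```

With these sample rules, the first URL is allowed and the second is not. For a real site, you'd point the parser at that site's own robots.txt with set_url() and read() instead of an inline string.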

How do I scrape a website in Python?

For web scraping to work in Python, we're going to perform three basic steps:

- Extract the HTML content using the requests library.

- Analyze the HTML structure and identify the tags which have our content.

- Extract the tags using Beautiful Soup and put the data in a Python list.

Installing the libraries

Let's first install the libraries we'll need. The requests library fetches the HTML content from a website. Beautiful Soup parses HTML and converts it to Python objects. To install these for Python 3, run:

pip3 install requests beautifulsoup4

Extracting the HTML

For this example, I'll choose to scrape the Technology section of this website. If you go to that page, you'll see a list of articles with title, excerpt, and publishing date. Our goal is to create a list of articles with that information.

The full URL for the Technology page is:

https://notes.ayushsharma.in/technology

We can get the HTML content from this page using requests:

#!/usr/bin/python3

import requests

url = 'https://notes.ayushsharma.in/technology'
data = requests.get(url)

print(data.text)

The variable data holds the response, and data.text will contain the HTML source code of the page.
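A request can fail or hang, so it can help to wrap the fetch in a small function. This is only a sketch: the fetch_html name and the 10-second timeout are my own choices, not something the page requires.

```python
import requests


def fetch_html(url, timeout=10.0):
    """Fetch a page and return its HTML, raising on HTTP error responses."""
    # timeout stops the call from waiting forever on a slow server.
    response = requests.get(url, timeout=timeout)
    # raise_for_status() raises requests.HTTPError for 4xx and 5xx responses.
    response.raise_for_status()
    return response.text
```

Calling fetch_html('https://notes.ayushsharma.in/technology') should return the same text as data.text in the script above, as long as the request succeeds.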

Extracting content from the HTML

To extract our data from the HTML received in data, we'll need to identify which tags have what we need.

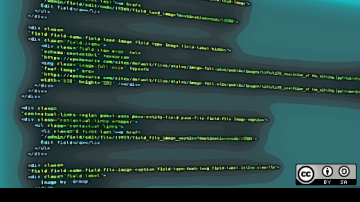

If you skim through the HTML, you’ll find this section near the top:

<div class="col">
  <a href="https://opensource.com/2021/08/using-variables-in-jekyll-to-define-custom-content" class="post-card">
    <div class="card">
      <div class="card-body">
        <h5 class="card-title">Using variables in Jekyll to define custom content</h5>
        <small class="card-text text-muted">I recently discovered that Jekyll's config.yml can be used to define custom
          variables for reusing content. I feel like I've been living under a rock all this time. But to err over and
          over again is human.</small>
      </div>
      <div class="card-footer text-end">
        <small class="text-muted">Aug 2021</small>
      </div>
    </div>
  </a>
</div>

This is the section that repeats throughout the page for every article. We can see that .card-title has the article title, .card-text has the excerpt, and .card-footer > small has the publishing date.
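Before running anything against the live page, you can check those three selectors on a saved copy of one card. This is a small offline sketch: SAMPLE_HTML is the card shown above pasted into a string, and the variable names are only for illustration.

```python
from bs4 import BeautifulSoup

# One article card, copied from the page source shown above.
SAMPLE_HTML = """
<div class="col">
  <a href="https://opensource.com/2021/08/using-variables-in-jekyll-to-define-custom-content" class="post-card">
    <div class="card">
      <div class="card-body">
        <h5 class="card-title">Using variables in Jekyll to define custom content</h5>
        <small class="card-text text-muted">I recently discovered that Jekyll's config.yml can be used to define custom
          variables for reusing content. I feel like I've been living under a rock all this time. But to err over and
          over again is human.</small>
      </div>
      <div class="card-footer text-end">
        <small class="text-muted">Aug 2021</small>
      </div>
    </div>
  </a>
</div>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# select() takes a CSS selector and returns a list of every matching tag.
titles = soup.select(".card-title")
excerpts = soup.select(".card-text")
dates = soup.select(".card-footer > small")

# Each selector should match exactly one tag in this single card.
print(len(titles), len(excerpts), len(dates))
print(titles[0].get_text())
print(dates[0].get_text())
```

If a selector returns an empty list here, it will also fail on the full page, so this is a cheap way to catch typos in class names.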

Let's extract these using Beautiful Soup.

#!/usr/bin/python3

import requests
from bs4 import BeautifulSoup
from pprint import pprint

url = 'https://notes.ayushsharma.in/technology'
data = requests.get(url)

my_data = []

html = BeautifulSoup(data.text, 'html.parser')
articles = html.select('a.post-card')

# Each a.post-card element wraps one article card.
for article in articles:
    title = article.select('.card-title')[0].get_text()
    excerpt = article.select('.card-text')[0].get_text()
    pub_date = article.select('.card-footer small')[0].get_text()
    my_data.append({"title": title, "excerpt": excerpt, "pub_date": pub_date})

pprint(my_data)

The above code extracts the articles and puts them in the my_data variable. I'm using pprint to pretty-print the output, but you can skip it in your code. Save the code above in a file called fetch.py, and then run it using:

python3 fetch.py

If everything went fine, you should see this:

[{'excerpt': "I recently discovered that Jekyll's config.yml can be used to "
             "define custom variables for reusing content. I feel like I've "
             'been living under a rock all this time. But to err over and over '
             'again is human.',
  'pub_date': 'Aug 2021',
  'title': 'Using variables in Jekyll to define custom content'},
 {'excerpt': "In this article, I'll highlight some ideas for Jekyll "
             'collections, blog category pages, responsive web-design, and '
             'netlify.toml to make static website maintenance a breeze.',
  'pub_date': 'Jul 2021',
  'title': 'The evolution of ayushsharma.in: Jekyll, Bootstrap, Netlify, '
           'static websites, and responsive design.'},
 {'excerpt': "These are the top 5 lessons I've learned after 5 years of "
             'Terraform-ing.',
  'pub_date': 'Jul 2021',
  'title': '5 key best practices for sane and usable Terraform setups'},
... (truncated)

And that's all it takes! In 22 lines of code, we've built a web scraper in Python. You can find the source code in my example repo.
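One thing to watch: indexing with [0] raises an IndexError if a card is missing one of the tags. Here's a hedged variant that returns None for missing pieces instead. The text_or_none and parse_articles names, the extra link field, and the sample HTML are my own additions for illustration, not part of the original script.

```python
from bs4 import BeautifulSoup


def text_or_none(parent, selector):
    """Return the whitespace-normalized text of the first match, or None."""
    tag = parent.select_one(selector)
    if tag is None:
        return None
    # split() and join() collapse newlines and repeated spaces into one space.
    return " ".join(tag.get_text().split())


def parse_articles(html_text):
    """Turn the page HTML into a list of article dictionaries."""
    soup = BeautifulSoup(html_text, "html.parser")
    articles = []
    for card in soup.select("a.post-card"):
        articles.append({
            "title": text_or_none(card, ".card-title"),
            "excerpt": text_or_none(card, ".card-text"),
            "pub_date": text_or_none(card, ".card-footer small"),
            "link": card.get("href"),  # extra field, not in the original script
        })
    return articles


# Hypothetical input: one complete card and one card with no excerpt or footer.
sample = """
<a href="https://example.com/first" class="post-card">
  <h5 class="card-title">First   post</h5>
  <small class="card-text">An
     excerpt.</small>
  <div class="card-footer"><small>Aug 2021</small></div>
</a>
<a href="https://example.com/second" class="post-card">
  <h5 class="card-title">Second post</h5>
</a>
"""

for item in parse_articles(sample):
    print(item)
```

The second card comes back with None for its excerpt and date rather than stopping the whole run, which is usually what you want when a page's markup isn't perfectly consistent.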

Conclusion

With the website content in a Python list, we can now do cool stuff with it. We could return it as JSON for another application or convert it to HTML with custom styling. Feel free to copy-paste the above code and experiment with your favorite website.
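As a rough sketch of both ideas, here's how the list could be turned into JSON and into a simple HTML list using only the standard library. The two entries are shortened from the output above, and the to_html function and its markup are just one possible layout I picked for the example.

```python
import json
from html import escape

# Two entries in the same shape as my_data, taken from the output above.
my_data = [
    {"title": "Using variables in Jekyll to define custom content",
     "excerpt": "I recently discovered that Jekyll's config.yml can be used "
                "to define custom variables for reusing content.",
     "pub_date": "Aug 2021"},
    {"title": "5 key best practices for sane and usable Terraform setups",
     "excerpt": "These are the top 5 lessons I've learned after 5 years of "
                "Terraform-ing.",
     "pub_date": "Jul 2021"},
]

# JSON: a string that another application can parse back into the same list.
as_json = json.dumps(my_data, indent=2)
print(as_json)


def to_html(articles):
    """Render the articles as a plain HTML list (hypothetical layout)."""
    items = [
        # escape() keeps characters like < and & from breaking the markup.
        f"<li><strong>{escape(a['title'])}</strong> ({escape(a['pub_date'])})</li>"
        for a in articles
    ]
    return "<ul>\n" + "\n".join(items) + "\n</ul>"


print(to_html(my_data))
```

From here, you could write as_json to a file or add your own classes and styles to the generated HTML.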

Have fun, and keep coding.

This article was originally published on the author's personal blog and has been adapted with permission.
