Mark Collier has been involved with OpenStack since the beginning, first at Rackspace where the project emerged as a joint partnership with NASA, and soon after as a co-founder and now Chief Operating Officer of the OpenStack Foundation.

I had the opportunity to speak with Mark a few weeks ago to hear more about what we can expect as OpenStack continues to evolve: from how it is developed, to what it can do, to how it is used. Here's what he shared with me.

It seems like the conversation around cloud has grown from being primarily around IaaS alone and is now much broader: containers, orchestration and management tools, and a slew of other topics. How are OpenStack and the Foundation changing to address these needs?

Some of the things that are happening at the Summit in Boston are different and tailored to that evolution. We're introducing Open Source Days for the first time. We've done some similar outreach in the past, trying to bring together related open source projects, but this is a bigger focus for us this time. We have dedicated space and time during the event for a lot of these different communities to come together, like Kubernetes and CloudFoundry and so forth. That's a reflection of the fact that people want to bring together multiple technologies. Typically, open source is the dominant method: Anything interesting that's happening these days in cloud, there's an open source project for it.

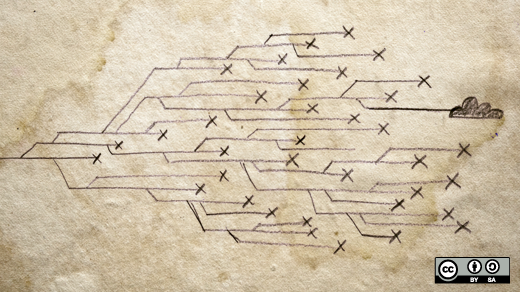

I've talked before about this concept of the "LAMP stack of the cloud." We're starting to see people combining Kubernetes with OpenStack, for example, and there are a lot of other related technologies. I think those two, in particular, are really powerful in combination. People want to see a really simplified view of "is it OpenStack on Kubernetes?" or "is it Kubernetes on OpenStack?" The reality is that in a distributed system, looking at everything as a vertical stack is fairly limiting. It turns out that things sit beside each other, or have a more complex interaction than just the stack. Sometimes that's one of the hardest things to explain: what's sitting on top of what, or how are these different systems talking together.

We're seeing a lot of people combining tools in new and interesting ways, and that will be reflected in the event in terms of explicit time set aside for each of those communities, as well as a lot of conversations where everybody's in the same room. We've found that the majority of people actually running Kubernetes today run it on OpenStack. Those two things are evolving together, and the more we can bring the people that write each of those upstream projects physically in the same room, the better off we'll be to serve the users at the end of the day.

In the early days of OpenStack, we were trying to simplify the message of what it was for. People were trying to get their heads around cloud; seven years ago, cloud was still pretty new, and Infrastructure as a Service was a thing that people were just starting to get their heads around. The reality is, there is no OpenStack cloud that doesn't have some other sets of technology, and we've started to talk about OpenStack as more of an integration engine. You're going to have the hypervisor you choose, whether it's KVM or something else; you're going to have your network provider, or providers; and the same thing for storage.

How will this summit be different now that the project development teams have already met at the PTG in Atlanta earlier this year? How is the role of the summit changing?

The primary reason we went down this path as a community was a problem we ran into at the summits, when the design summit was held at the same time as the summit overall. We would have upstream developers who might fly three thousand miles to the city where we were having this event. They were literally two rooms away from operators and users whom they would love to talk to, but they couldn't, because they were so busy planning the implementation details for a release whose development was just kicking off at that time. It was kind of ironic that, after bringing everyone together, they actually weren't talking as much as we would like because there were too many things going on at once. There was too much time pressure, particularly on upstream developers, for them to find time to participate.

There were a couple of different facets. One was direct user engagement with operators to find out what they like, what they don't like, and how they want the software to evolve. Two was the longer-term strategic discussions. The way the six-month cycle worked, the design summit would happen right as the release was starting to get written. Occasionally there would already be a little bit of code written. There wasn't a lot of time to think longer term. If you think about how we've shifted the model now, the PTG now serves that function. It's a developer-centric event where they talk about how to implement what's going to be in the next release; it's very implementation- and detail-oriented.

On an ongoing basis, we now have a concept within the main summit called the forum. Instead of taking place six months before the release, you can think of the forum as taking place nine months before the release. What you'll see in Boston is that we'll have upstream developers and operators in the same room. All of the content and the discussions they'll be having have been decided by those two communities coming together. They can talk about what we want OpenStack to be nine months or longer from now. There's a little bit more breathing room to think longer-term.

The developers will still be expected and invited to come to the summit, but they'll have a little bit more freedom to attend various types of feedback sessions, and lead sessions, and present in the conference-style sessions that we continue to have. It will really give them the opportunity to stick their heads up a little bit and look at the longer-term horizon, and have discussions with operators and product managers and people who are thinking about OpenStack further out in the future.

Every OpenStack Summit I've attended seems to have a big topic that everybody is talking about. What might we expect the big theme for this summit to be?

Jonathan Bryce will be giving an opening keynote on Monday in Boston, and he's going to be talking about how private clouds powered by OpenStack actually cost less and do more than you might think. In particular, hyper-scale clouds. We're starting to see a number of users who are embracing hybrid or the multi-cloud world with some public cloud and some private cloud. They're getting more sophisticated about what workloads to place where. In many cases, they can get substantial cost savings by moving certain strategic long-running workloads to private cloud.

OpenStack has gone into a lot of interesting use cases that you never would have foreseen before. It's routing phone calls for millions of users on mobile networks, and there are some other interesting use cases emerging around edge computing. I think that will be a really cool theme you'll start to see picking up in the industry. OpenStack is well-positioned for this concept of edge computing, where you have so much data being collected and processed at the edge of these giant networks that it actually makes sense to have compute on the edge as well. We've started to see several examples like that, and we'll hear from a few, including Verizon.

On the second day, I'll be giving the opening keynote on Tuesday. The theme that I'm going to be talking about is composable open infrastructure, thinking about all of those different combinations of OpenStack services with non-OpenStack open source projects to do new and interesting things. One of the emerging trends we see is people picking a specific OpenStack service to meet their needs and not necessarily deploying the whole suite of OpenStack. For example, if they want block storage, they can just deploy Cinder, and that might be a back end for a Kubernetes-orchestrated part of their infrastructure. Or they might just want to take advantage of Ironic to manage their bare metal or Neutron to manage their network, but they don't necessarily want the whole suite of OpenStack services.

There are going to be a lot of demos on day two. We're looking to do both an Ironic demo and a Cinder demo, as well as a number that tie into Kubernetes, pushing the envelope there in ways we haven't done before.

What are some of the big things you're hoping we'll see in the next release and beyond?

One of the areas that's getting a lot of attention is around zero-downtime upgrades. Upgrades are a thing that people have said is a pain point for years, and we've chipped away at it to the point where now most of the services can be upgraded without any interruption to the workloads. As we get into more sophisticated methodologies for live upgrades, we can start to do zero-downtime upgrades where, for example, the API service never goes down even for a second. There are some interesting architectural and implementation details behind that which are in progress on a few projects. That concept of upgrades being as painless as possible really pays benefits for many years. One of the biggest difficulties users have is keeping pace with the OpenStack release schedule and the rate of innovation. But once you get on a smooth upgrade path, every future release becomes available to you without as much pain.

One aspect of upgrades is around containers. So you see more and more of the implementations of how you deploy and manage OpenStack putting the OpenStack services inside containers. You have the Kolla project, and even before that there were a number of distributions that had their own kind of approach to containerizing OpenStack. Those are all delivering benefits around manageability.

In the Ocata release, projects involving containers, such as Kolla and Kuryr, were the fastest-growing areas of development. Kuryr is a bridge between the native container networking technologies and Neutron. We talk about OpenStack as one platform of bare metal, virtual machines, and containers, and the real magic there is the network. If you are going to have a sophisticated workload where some of the processes are on bare metal for different reasons (performance, security, isolation, etc.), some of them are running on VMs, and containers are mixed in there as well, the real magic is when you can run them on a common network. Kuryr plays an important role there, and I think we'll see a lot of additional sophistication in what Kuryr can do. We're going to be doing a demo on day two, showing a big data workload with Spark and some other big data services in an OpenStack environment that combines bare metal, virtual machines, and containers.

How has the open source community behind OpenStack grown and changed over time? Have there been any big surprises in the way you have seen the community progress?

It is crazy to watch and think back on how it has grown. The people who got involved in the early days were there because they believed in the idea. We had, at best, kind of a loose prototype of Nova, and we had some Swift code that worked pretty well, but in terms of where the software is today, there wasn't a lot there seven years ago. It was really about people who believed in this idea that all of these different companies could bring some resources together and help create a standard and open infrastructure alternative. People got excited by the idea of it, and being a part of something that could make an impact.

It took some time for that to bear fruit and for us to start to see some of the big users. If you look back three or four years ago, we had the Walmarts of the world, and eBay running OpenStack, and that was exciting, but there was this feeling that you needed to be an eBay or a Walmart to have the chops to do this. What we've really seen in the past year or so is that the software has gotten a lot better. It's really because of the fact that users are a bigger part of the contributor base, and a bigger part of feeding requirements in. It's less of an academic exercise of asking what we think is the right solution to what operators want; now operators are running it and telling us what they want to happen differently. Operators are in those discussions, and we're starting to see more of the sponsors of the foundation and the summit, and the contributors to development efforts, coming from users. That's an interesting shift.

We still certainly have a very vibrant ecosystem of large companies and startups that are investing in OpenStack and writing a lot of the code. It's become very diverse. Just like in investing, where they'll tell you to have a diverse portfolio, if you look at OpenStack, probably more so than any other open source project out there, it's very resilient to shocks to the system. If one company decides they're no longer going to employ developers, there are so many other companies employing developers (I think we had 3,500 developers just last year contribute to OpenStack) that it's very resilient to those kinds of changes.

That's one of the payoffs for making sure that every project in OpenStack has multiple affiliated companies that are behind it. It's a point of pride for us, and the Technical Committee makes this an explicit part of the criteria. That's one thing we've seen, that the commitment to not having one company dominate the project is healthy, and we see users saying that's what they like about it.

Every user we talk to, they do work with companies in the ecosystem. They're saying "we definitely want that." They like choices, and they like the fact that if they decide to move between vendors, that it's still the same basic code base that's been contributed to by this wide diversity of people from all over the world and from many different companies. That's been exciting to see, how the community has been resilient to the inevitable change and consolidation that happens in any industry over time.

If you were trying to get a young developer fresh out of school today interested in OpenStack, what would you tell them? Why is OpenStack still an exciting area of technology to be working in?

For me, I always try to take a step back and take a look at the macro picture of what's driving anything that's going on in technology or the market as a whole. What's exciting about it is that the sheer demand for infrastructure is growing at an unbelievable rate. And so, with the proliferation of inexpensive sensors allowing us to capture more data than ever, the interesting problem of how you actually process, store, and move data as the amount grows is really in its infancy.

I think edge computing is probably one of the more interesting things that's going to emerge out of that. For example, we heard from some researchers from Cambridge about the Square Kilometer Array at the last summit. This is a system that's going to generate unbelievable, massive amounts of data every day. So much data that there literally aren't enough hard drives in the world to store what they're going to capture every day. They have to, through algorithms and edge computing, filter out the signal from the noise, and sometimes they have to throw away some of the signal because there just isn't enough raw storage capacity, and physics prevents you from moving that all up to some centralized cloud.

So I think that you'll see the pendulum swing again in the way that architectures will evolve because of this massive need of fifty million servers that need to be managed. There's no way to do that in a way that's manual. It's got to be highly automated. We're entering a phase when it's going to get more exciting, because of this balance of physics and economics that so much of the data just has to live at the edge, it can't physically move to the center. I think you'll see that change in architectures and the way people think and operate systems, and that's an exciting time to be in infrastructure, whenever there's a pendulum that starts to swing back like that.

OpenStack Summit kicks off next week in Boston, and Opensource.com will be there to cover all of the exciting news and events. Be sure to check out hashtags #OpenStack and #OpenStackSummit on Twitter to follow what's happening in real time.
