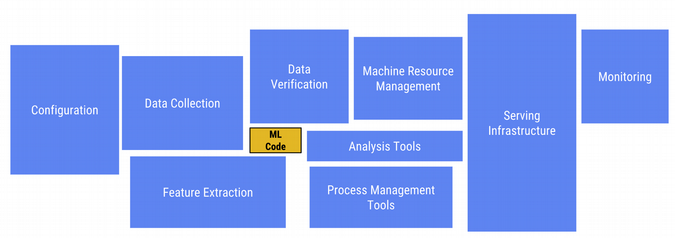

Model construction and training are just a small part of supporting machine learning (ML) workflows. You also need to port your data to an accessible format and location, clean the data and engineer features, analyze your trained models, manage model versioning, serve your trained models scalably, and avoid training/serving skew. These concerns matter most when workflows have many moving parts to integrate and must be portable and consistently repeatable.

Further, most of these activities recur across multiple workflows, perhaps just with different parameterizations. Often you're running a set of experiments that need to be performed in an auditable and repeatable manner. Sometimes part or all of an ML workflow needs to run on-premises, but in other contexts it may be more productive to use managed cloud services, which make it easy to distribute and scale out the workflow steps and run multiple experiments in parallel. Managed services also tend to be more economical for "bursty" workloads.

Model training is just a small part of a typical ML workflow. (From "Hidden Technical Debt in Machine Learning Systems.")

Building any production-ready ML system involves various components, often mixing vendors and hand-rolled solutions. Connecting and managing these services, even for moderately sophisticated setups, adds considerable complexity. Often, deployments are so tightly coupled to the clusters they run on that the stacks are immobile: moving a model from a laptop to a highly scalable cloud cluster, or from an experimentation environment to a production-ready context, is effectively impossible without significant re-architecture. All these differences add up to wasted effort and create opportunities to introduce bugs at each transition.

Kubeflow helps address these concerns. It is an open source Kubernetes-native platform for developing, orchestrating, deploying, and running scalable and portable ML workloads. It supports reproducibility and collaboration in ML workflow lifecycles, allowing you to manage end-to-end orchestration of ML pipelines, to run your workflow in multiple or hybrid environments (such as swapping between on-premises and cloud building blocks, depending upon context), and to reuse building blocks across different workflows. Kubeflow also provides support for visualization and collaboration in your ML workflow.

Kubeflow Pipelines is a newly added component of Kubeflow that can help you compose, deploy, and manage end-to-end, optionally hybrid, ML workflows. Because Pipelines is part of Kubeflow, there's no lock-in as you transition from prototyping to production. Kubeflow Pipelines supports rapid and reliable experimentation, with an interface that makes it easy to develop in a Jupyter notebook, so you can try many ML techniques to identify what works best for your application.

Leveraging Kubernetes

There are many advantages to building an ML stack on top of Kubernetes. There is no need to re-implement the fundamentals that would be required for such a cluster, such as support for replication, health checks, and monitoring. Kubernetes supports the ability to generate robust declarative specifications and build custom resource definitions (CRDs) tailored for ML. For example, Kubeflow's TFJob is a custom resource that makes it easy to run distributed TensorFlow training jobs on Kubernetes.
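To make the idea of a declarative, ML-specific resource concrete, here is a minimal sketch that builds a TFJob manifest as a plain Python dictionary and prints it as JSON. The field names (`tfReplicaSpecs`, `PS`, `Worker`, the `tensorflow` container name) reflect our understanding of the v1alpha2 TFJob schema and should be checked against the documentation for the Kubeflow version you have installed. The job name and container image are hypothetical placeholders.

```python
import json


def make_tfjob(name, image, workers=2, ps=1):
    """Build a TFJob manifest as a plain dict (illustrative sketch only).

    Field names are assumed from the v1alpha2 TFJob schema; verify them
    against your installed Kubeflow version before using this manifest.
    """

    def replica(count):
        # Every replica type runs the same (hypothetical) training image.
        return {
            "replicas": count,
            "restartPolicy": "OnFailure",
            "template": {
                "spec": {
                    "containers": [{"name": "tensorflow", "image": image}]
                }
            },
        }

    return {
        "apiVersion": "kubeflow.org/v1alpha2",
        "kind": "TFJob",
        "metadata": {"name": name},
        "spec": {
            "tfReplicaSpecs": {
                "PS": replica(ps),          # parameter servers
                "Worker": replica(workers),  # training workers
            }
        },
    }


# Hypothetical job name and image, for illustration only.
manifest = make_tfjob("example-train", "gcr.io/example/trainer:latest")
print(json.dumps(manifest, indent=2))
```

Because the specification is just data, the number of workers or the training image can be changed without touching any cluster-management code. Kubernetes accepts manifests in JSON as well as YAML, so output like this could be saved to a file and submitted with `kubectl apply -f`.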

Kubernetes provides the scalability, portability, and reproducibility needed to run ML workflows and experiments consistently. It helps you build and compose reusable workflow steps and ML building blocks, and makes it easy to run the same workflows on different clusters as they transition from prototype to production or move from an on-prem cluster to the cloud.

Kubeflow at version 0.3

Kubeflow has reached version 0.3. Following are some of its notable features:

- Support for multiple machine learning frameworks:

  - Support for distributed TensorFlow training via the TFJob CRD.

  - Ability to serve trained TensorFlow models using the TF Serving component.

  - Ability to serve models from a range of ML frameworks using Seldon.

  - A v1alpha2 API for PyTorch from Cisco that brings parity and consistency with the TFJob operator.

  - A growing set of examples, including one for XGBoost.

- In collaboration with NVIDIA, support for the NVIDIA TensorRT Inference Server, which supports the top AI frameworks.

- Apache Beam-based batch inference, including support for GPUs. Apache Beam makes it easy to write batch and streaming data processing jobs that run on a variety of execution engines.

- A JupyterHub installation with many commonly required libraries and widgets included in the notebook installation.

- Support for hyperparameter tuning via Katib (based on Google's Vizier) and a new Kubernetes custom controller.

- Declarative and extensible deployment: A new command-line deployment utility, kfctl.sh, as well as a web-based launcher (in alpha, currently for deploying on Google Cloud Platform).

- Kubebench, a framework from Cisco for benchmarking ML workloads on Kubeflow.

- Kubeflow Pipelines, discussed in more detail below.

TFX support

TensorFlow Extended (TFX) is a TensorFlow-based platform for performant machine learning in production, first designed for use within Google, but now mostly open source. You can find an overview in "TFX: A TensorFlow-Based Production-Scale Machine Learning Platform." Several Kubeflow Pipelines examples contain TFX components as building blocks, including TensorFlow Transform, TensorFlow Model Analysis, TensorFlow Data Validation, and TensorFlow Serving. The TFX libraries also come bundled with Kubeflow's JupyterHub installation.

Building and running Kubeflow Pipelines

Kubeflow Pipelines adds support to Kubeflow for building and managing ML workflows. An SDK makes it easy to define a pipeline via Python code and to build pipelines from a Jupyter notebook.

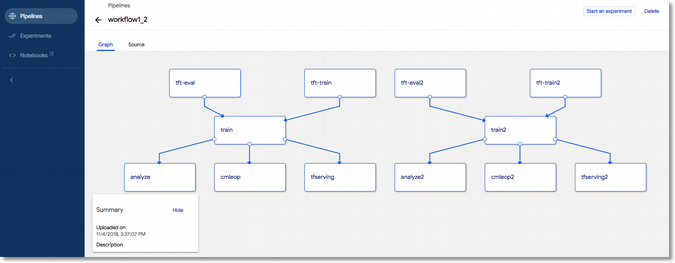

Once you've specified a pipeline, you can use it as the basis for multiple experiments, each of which can have multiple, potentially scheduled runs. You can take advantage of already-defined pipeline steps (components) and pipelines or build your own.
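The relationship between a pipeline, an experiment, and its runs can be sketched in plain Python. The snippet below is a conceptual illustration only and does not use the Kubeflow Pipelines SDK: the step names, the parameters, and the `run_pipeline` helper are all hypothetical. In Kubeflow Pipelines each step would run as a container on the cluster, whereas here each step is an ordinary function. The pipeline is defined once as a graph of steps and then executed several times with different parameters.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# A pipeline is a graph of steps. Each entry maps a step name to
# (names of upstream steps, function of (params, upstream results)).
# The steps return strings so that the data flow is easy to inspect.
PIPELINE = {
    "preprocess": ([], lambda p, up: f"data@{p['dataset']}"),
    "train": (
        ["preprocess"],
        lambda p, up: f"model({up['preprocess']}, lr={p['learning_rate']})",
    ),
    "evaluate": (["train"], lambda p, up: f"metrics[{up['train']}]"),
}


def run_pipeline(pipeline, params):
    """Execute every step once, in dependency order, and return all outputs."""
    graph = {name: deps for name, (deps, _) in pipeline.items()}
    results = {}
    for name in TopologicalSorter(graph).static_order():
        deps, step_fn = pipeline[name]
        upstream = {dep: results[dep] for dep in deps}
        results[name] = step_fn(params, upstream)
    return results


# An "experiment" here is simply several runs of the same pipeline
# definition, each with a different parameterization.
experiment = [{"dataset": "v1", "learning_rate": lr} for lr in (0.1, 0.01)]
runs = [run_pipeline(PIPELINE, params) for params in experiment]

for params, outputs in zip(experiment, runs):
    print(params, "->", outputs["evaluate"])
```

Keeping the pipeline definition separate from the run parameters is what makes the same steps reusable across experiments, and it keeps each run reproducible from its recorded parameters.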

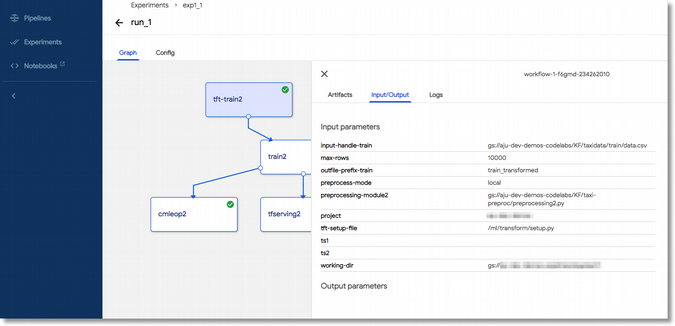

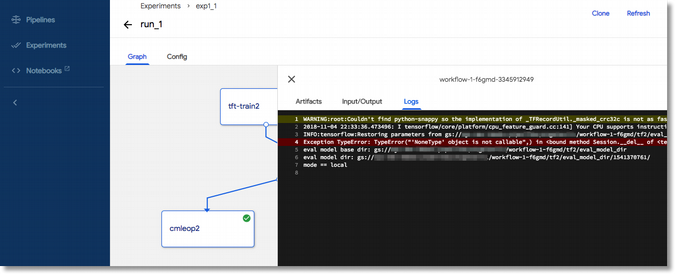

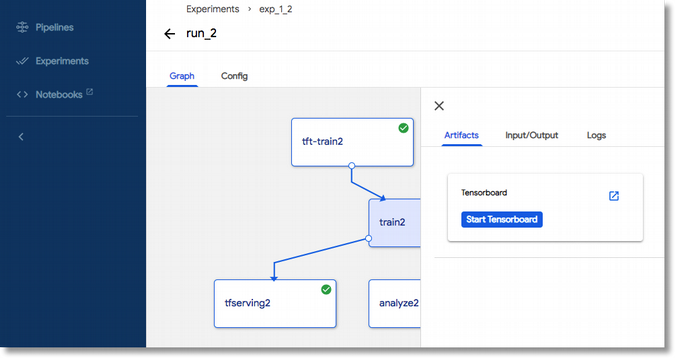

The Pipelines UI, accessed from the Kubeflow dashboard, lets you easily specify, deploy, and monitor your pipelines and experiments and inspect the output of runs.

A pipeline graph.

Inspecting the configuration of a pipeline step.

Inspecting the logs for a pipeline step.

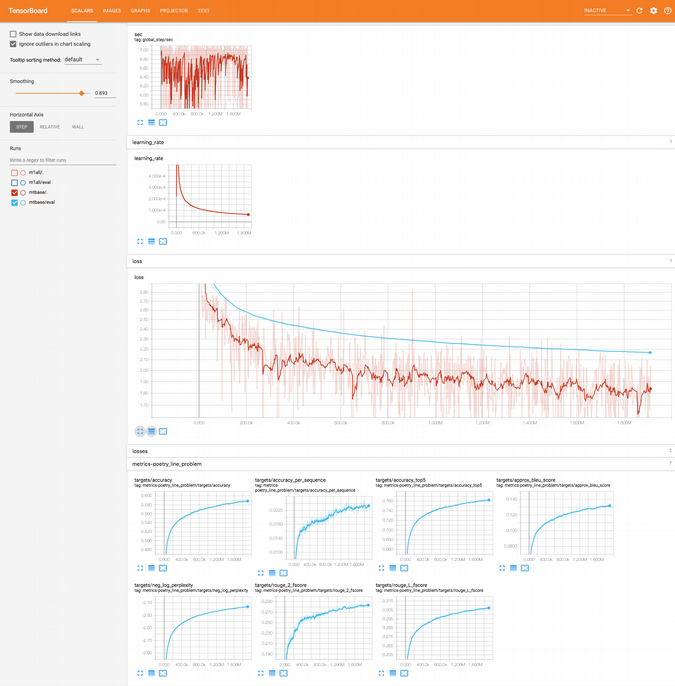

The pipelines UI has integrated support for launching TensorBoard to monitor the output of a given step.

An example Tensorboard dashboard.

Building and using pipelines from a Jupyter notebook

The Pipelines SDK includes support for constructing and running pipelines from a Jupyter notebook—including the ability to build and compile a pipeline directly from Python code that specifies its functionality, without leaving the notebook. See this example notebook for more detail.

What's next

Kubeflow's next major release, 0.4, is coming by the end of 2018. Here are some key areas we are working on:

- Ease of use: To make Kubeflow more accessible to data scientists, we want to make it possible to perform common ML tasks without having to learn Kubernetes. In 0.4, we plan to provide more tooling to make it easy for data scientists to train and deploy models entirely from a notebook.

- Model tracking: Keeping track of experiments is a major source of toil for data scientists and ML engineers. In 0.4, we plan on making it easier to track models by providing a simple API and database for tracking models.

- Production readiness: With 0.4, we'll continue to push Kubeflow towards production readiness by graduating our PyTorch and TFJob operators to beta.

Learn more

Here are some resources for learning more and getting help:

- Kubeflow's Getting Started guide and documentation

- Kubeflow examples

- Kubeflow's Slack channel

- The Kubeflow-discuss email list

- Kubeflow's Twitter account

- Kubeflow's weekly community meeting (please join us!)

- Getting started with Kubeflow Pipelines with accompanying examples

- How to create and deploy a Kubeflow Machine Learning Pipeline

- More Kubeflow Pipelines examples

- Kubeflow Pipelines documentation

If you're attending KubeCon Seattle in December, don't miss these sessions:

- Workshop: Kubeflow End-to-End: GitHub Issue Summarization by Amy Unruh and Michelle Casbon

- Natural Language Code Search for GitHub Using Kubeflow by Jeremy Lewi and Hamel Husain

- Eco-Friendly ML: How the Kubeflow Ecosystem Bootstrapped Itself by Peter McKinnon

- Deep Dive: Kubeflow BoF by Jeremy Lewi and David Aronchick

- Machine Learning as Code by Jay Smith

Amy Unruh and Michelle Casbon will present Kubeflow End-to-End: GitHub Issue Summarization at KubeCon + CloudNativeCon North America, December 10-13 in Seattle.
