Cloud-native programming inherently involves working with remote endpoints: microservices, serverless, APIs, WebSockets, software-as-a-service (SaaS) apps, and more. Ballerina is a cloud-native, general-purpose, concurrent, transactional, and statically and strongly typed programming language with both textual and graphical syntaxes.

Its specialization is integration: it brings the fundamental concepts, ideas, and tools of distributed system integration into the language and offers a type-safe, concurrent environment for implementing such applications. These concepts include distributed transactions, resiliency, concurrency, security, and deployment to container-management platforms.

Ballerina has been inspired by Java, Go, C, C++, Rust, Haskell, Kotlin, Dart, TypeScript, JavaScript, Swift, and other languages. It is an open source project, distributed under the Apache 2.0 license, and you can find its source code in the project's GitHub repository.

Textual and graphical syntaxes

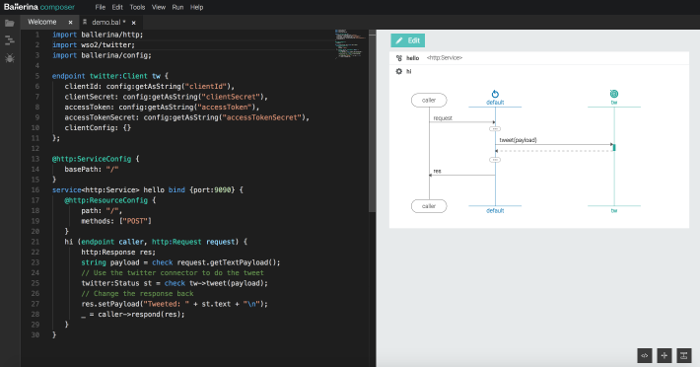

Ballerina's programming language semantics are created to be natural for developers to express the structure and the logic of a program. To describe complex interactions between multiple parties, we typically use a sequence diagram. This approach enables visualization of endpoints and actions, such as asynchronous and synchronous message passing and parallel executions, in an intuitive manner.

Textual and graphical representation of the Ballerina code.

Built-in resiliency

Resilient and type-safe integration is built into the language. When you invoke an external endpoint that might be unreliable, you can protect that interaction with resilience capabilities, such as circuit breakers, failover, and retry, configured for your specific protocol.

Circuit breaker

Adding a circuit breaker is as simple as passing a few additional parameters to your client endpoint configuration.

```ballerina
endpoint http:Client backendClientEP {
    url: "https://localhost:8080",
    // Circuit breaker configuration options.
    circuitBreaker: {
        // Failure calculation window.
        rollingWindow: {
            // Time period in milliseconds for which the failure threshold
            // is calculated.
            timeWindowMillis: 10000,
            // The granularity at which the time window slides.
            // This is measured in milliseconds.
            bucketSizeMillis: 2000
        },
        // The threshold for request failures.
        // When this threshold is exceeded, the circuit trips.
        // This is the ratio between failures and total requests.
        failureThreshold: 0.2,
        // The time period (in milliseconds) to wait before
        // attempting to make another request to the upstream service.
        resetTimeMillis: 10000,
        // HTTP response status codes that are considered failures.
        statusCodes: [400, 404, 500]
    },
    timeoutMillis: 2000
};
```

Failover

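You can define a failover client endpoint with a timeout, an interval between failover attempts, the HTTP status codes that trigger a failover, and the list of target endpoints to fail over between.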

```ballerina
// Define the failover client endpoint to call the backend services.
endpoint http:FailoverClient foBackendEP {
    timeoutMillis: 5000,
    failoverCodes: [501, 502, 503],
    intervalMillis: 5000,
    // Define the set of HTTP clients to fail over between.
    targets: [
        { url: "https://localhost:3000/mock1" },
        { url: "https://localhost:8080/echo" },
        { url: "https://localhost:8080/mock" }
    ]
};
```

Retry

You can add a retry configuration to an endpoint, specifying the retry interval, the retry count, and a backoff factor.

```ballerina
endpoint http:Client backendClientEP {
    url: "https://localhost:8080",
    // Retry configuration options.
    retryConfig: {
        interval: 3000,
        count: 3,
        backOffFactor: 0.5
    },
    timeoutMillis: 2000
};
```

Asynchronous and parallel execution

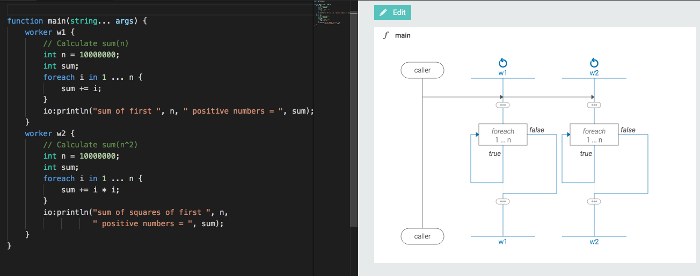

Ballerina's execution model is composed of parallel execution units known as workers. A worker represents Ballerina's basic execution construct. In Ballerina, each function consists of one or more workers, which are independent parallel execution code blocks. If explicit workers are not mentioned with worker blocks, the function code will belong to a single, implicit default worker.

Parallel execution code in textual and graphical representation.

Ballerina also offers native support for fork-join, which is a special case of worker interaction. With fork-join, you can fork the logic, offload its execution to multiple workers, and conditionally join the results of all workers in the join clause.
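As a rough analogy (not how Ballerina implements workers), the fork-join pattern with a `join (all)` condition maps onto Go goroutines and a `sync.WaitGroup`: each worker runs concurrently, and the join step waits for every one of them before reading a map of results keyed by worker name. `ForkJoinAll` and `pair` are hypothetical names; the worker bodies mirror `w1` and `w2` in the Ballerina example that follows.

```go
package main

import (
	"fmt"
	"sync"
)

// ForkJoinAll runs each named worker in its own goroutine, waits for all of
// them, and returns each worker's result in a map keyed by worker name.
func ForkJoinAll(workers map[string]func() any) map[string]any {
	var (
		mu      sync.Mutex
		wg      sync.WaitGroup
		results = make(map[string]any, len(workers))
	)
	for name, work := range workers {
		wg.Add(1)
		go func(name string, work func() any) {
			defer wg.Done()
			v := work()
			mu.Lock() // the results map is shared between goroutines
			results[name] = v
			mu.Unlock()
		}(name, work)
	}
	wg.Wait() // the "join (all)" step: block until every worker has finished
	return results
}

// pair holds the two values w1 sends, mirroring the (i, s) tuple.
type pair struct {
	i int
	s string
}

func main() {
	results := ForkJoinAll(map[string]func() any{
		"w1": func() any { return pair{23, "Foo"} },
		"w2": func() any { return 10.344 },
	})
	w1 := results["w1"].(pair)
	fmt.Println(w1.i, w1.s, results["w2"]) // 23 Foo 10.344
}
```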

```ballerina
fork {
    worker w1 {
        int i = 23;
        string s = "Foo";
        io:println("[w1] i: ", i, " s: ", s);
        // Send the tuple (i, s) to the join clause.
        (i, s) -> fork;
    }

    worker w2 {
        float f = 10.344;
        io:println("[w2] f: ", f);
        f -> fork;
    }
} join (all) (map results) {
    // results holds the values sent by each worker, keyed by worker name.
    int iW1;
}
```

Ballerina also supports asynchronous invocation of functions or endpoints. Although synchronous invocations of external endpoints are implemented in a fully non-blocking manner in Ballerina, there are situations where you need to invoke an endpoint or function asynchronously and check for the result later.

```ballerina
// Invoke the endpoint asynchronously; `start` returns a future immediately.
future<http:Response|error> f1
    = start nasdaqServiceEP->get("/nasdaq/quote/GOOG");
io:println(" >> Invocation completed!"
    + " Proceed without blocking for a response.");
// `await` blocks until the previously started async
// function returns.
var response = await f1;
```

Transaction handling

Ballerina has language-level constructs for handling transactions: you can run local transactions with connectors, distributed transactions with XA-compatible connectors, or even service-level transactions using the built-in coordination support in the language runtime.

In Ballerina, doing a set of actions transactionally is just a matter of wrapping all the operations in a "transaction" block.
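The all-or-nothing idea behind a transaction block can be illustrated with a toy in-memory store in Go. This is only a sketch of atomic commit and rollback, not Ballerina's transaction mechanism and not a real database: `Store`, `Tx`, `Insert`, and `InTransaction` are hypothetical names, and inserts are buffered and applied only if every operation in the body succeeds. The table names mirror the SQL example that follows; the second transaction's values are made-up demo data.

```go
package main

import "fmt"

// Store is a hypothetical in-memory database: table name -> ID -> value.
type Store struct {
	tables map[string]map[int]string
}

func NewStore() *Store { return &Store{tables: map[string]map[int]string{}} }

// Tx buffers inserts so that none of them reach the Store before commit.
type Tx struct {
	store   *Store
	pending map[string]map[int]string
}

// Insert stages a row, rejecting IDs that already exist in the table.
func (tx *Tx) Insert(table string, id int, value string) error {
	_, committed := tx.store.tables[table][id]
	_, staged := tx.pending[table][id]
	if committed || staged {
		return fmt.Errorf("%s: duplicate ID %d", table, id)
	}
	if tx.pending[table] == nil {
		tx.pending[table] = map[int]string{}
	}
	tx.pending[table][id] = value
	return nil
}

// InTransaction runs body and commits every staged insert only if body
// succeeds; on error, the staged inserts are discarded (rolled back).
func (s *Store) InTransaction(body func(tx *Tx) error) error {
	tx := &Tx{store: s, pending: map[string]map[int]string{}}
	if err := body(tx); err != nil {
		return err
	}
	for table, rows := range tx.pending {
		if s.tables[table] == nil {
			s.tables[table] = map[int]string{}
		}
		for id, v := range rows {
			s.tables[table][id] = v
		}
	}
	return nil
}

func main() {
	db := NewStore()
	err := db.InTransaction(func(tx *Tx) error {
		if err := tx.Insert("CUSTOMER", 1, "Anne"); err != nil {
			return err
		}
		return tx.Insert("SALARY", 1, "2500")
	})
	fmt.Println(err, len(db.tables["CUSTOMER"]), len(db.tables["SALARY"])) // <nil> 1 1

	// The second insert fails, so the first one is rolled back as well.
	err = db.InTransaction(func(tx *Tx) error {
		if err := tx.Insert("CUSTOMER", 2, "Bob"); err != nil {
			return err
		}
		return tx.Insert("SALARY", 1, "3000")
	})
	fmt.Println(err)                        // SALARY: duplicate ID 1
	fmt.Println(len(db.tables["CUSTOMER"])) // 1
}
```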

```ballerina
transaction {
    _ = testDB->update("INSERT INTO CUSTOMER(ID,NAME) VALUES (1, 'Anne')");
    _ = testDB->update("INSERT INTO SALARY (ID, MON_SALARY) VALUES (1, 2500)");
}
```

Secure by design

Ballerina is designed so that programs written in it are inherently secure. Ballerina programs are resilient to major security vulnerabilities, including SQL injection, path manipulation, file manipulation, unauthorized file access, and unvalidated (open) redirects. This is achieved with taint analysis: the Ballerina compiler marks data from untrusted sources as tainted and tracks how that data propagates through the program. If tainted data is passed to a security-sensitive parameter, the compiler generates an error.

The @sensitive annotation can be used on parameters of user-defined functions. It lets developers prevent tainted data from being passed to a security-sensitive parameter.
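Go has no taint analysis, but a distinct named type gives a loose, compile-time analogy to a @sensitive parameter: a value of type `Tainted` cannot be passed where a `string` is expected without an explicit conversion. This sketch is an analogy only, and a much weaker guarantee than Ballerina's compiler check, because any code can bypass it with `string(t)`. `Tainted`, `sensitiveOperation`, and `validateAndUntaint` are hypothetical names; unlike Ballerina's `untaint` expression, which only marks a value as trusted, the helper here bundles the validation step with the conversion, and the digits-only rule is an assumed example policy.

```go
package main

import (
	"fmt"
	"regexp"
)

// Tainted marks data from an untrusted source, such as command-line arguments.
// Tainted and string are distinct named types, so a Tainted value is not
// assignable to a string parameter without an explicit conversion.
type Tainted string

// sensitiveOperation plays the role of a @sensitive parameter: it accepts
// plain strings only, never Tainted values.
func sensitiveOperation(secureParameter string) string {
	return "using " + secureParameter
}

var digitsOnly = regexp.MustCompile(`^[0-9]+$`)

// validateAndUntaint checks the value and only then converts it to a trusted string.
func validateAndUntaint(t Tainted) (string, error) {
	if !digitsOnly.MatchString(string(t)) {
		return "", fmt.Errorf("rejected untrusted input %q", string(t))
	}
	return string(t), nil
}

func main() {
	input := Tainted("42") // imagine this came from args[0]
	// sensitiveOperation(input) would not compile: Tainted is not assignable to string.
	if s, err := validateAndUntaint(input); err == nil {
		fmt.Println(sensitiveOperation(s)) // using 42
	}
	_, err := validateAndUntaint(Tainted("1 OR 1=1"))
	fmt.Println(err != nil) // true
}
```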

```ballerina
function userDefinedSecureOperation(@sensitive string secureParameter) {
    // Use secureParameter in a security-sensitive operation here.
}
```

For example, Ballerina's taint-checking mechanism prevents SQL injection vulnerabilities by disallowing tainted data in SQL queries. The following results in a compiler error because the query is concatenated with a user-provided argument.

```ballerina
function main(string... args) {
    // Compiler error: the tainted args[0] reaches the SQL query.
    table dataTable = check customerDBEP->select(
        "SELECT firstname FROM student WHERE registration_id = " + args[0], null);
}
```

The following also results in a compiler error because a user-provided argument is passed to a sensitive parameter.

```ballerina
userDefinedSecureOperation(args[0]);
```

After performing the necessary validation and/or escaping, you can use the untaint unary expression to mark the value that follows it as trusted and pass it to a sensitive parameter.

```ballerina
userDefinedSecureOperation(untaint args[0]);
```

Native support for Docker and Kubernetes

Ballerina understands the architecture around it: the compiler is environment-aware, and microservices can be deployed directly to infrastructure such as Docker and Kubernetes because the compiler autogenerates Docker images and YAML files. To see how this works, let's look at a sample hello_world.bal file.

```ballerina
import ballerina/http;
import ballerinax/kubernetes;

@kubernetes:Service {
    serviceType: "NodePort",
    name: "hello-world"
}
endpoint http:Listener listener {
    port: 9090
};

@kubernetes:Deployment {
    image: "lakwarus/helloworld",
    name: "hello-world"
}
@http:ServiceConfig {
    basePath: "/"
}
service<http:Service> helloWorld bind listener {
    @http:ResourceConfig {
        path: "/"
    }
    sayHello(endpoint outboundEP, http:Request request) {
        http:Response response = new;
        response.setTextPayload("Hello World! \n");
        _ = outboundEP->respond(response);
    }
}
```

The @kubernetes:Service annotation defines how the service is exposed through a Kubernetes Service. The @kubernetes:Deployment annotation creates a Docker image that bundles the application code and generates a Kubernetes Deployment YAML from the defined deployment configuration.

Compiling the hello_world.bal file will generate all Kubernetes deployment artifacts, Dockerfiles, and Docker images.

```
$> ballerina build hello_world.bal
@kubernetes:Docker       - complete 3/3
@kubernetes:Deployment   - complete 1/1
@kubernetes:Service      - complete 1/1

Run following command to deploy kubernetes artifacts:
kubectl apply -f ./kubernetes/

$> tree
.
├── hello_world.bal
├── hello_world.balx
└── kubernetes
    ├── docker
    │   └── Dockerfile
    ├── hello_world_svc.yaml
    └── hello_world_deployment.yaml
```

Running kubectl apply -f ./kubernetes/ deploys the app into Kubernetes, where it can be accessed through the Kubernetes NodePort.

```
$> kubectl get svc
NAME          TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
hello-world   NodePort   10.96.118.214   <none>        9090:32045/TCP   1m

$> curl http://localhost:32045/
Hello World!
```

Ballerina Central

Ballerina fosters reuse and sharing of its packages through its global central repository, Ballerina Central. There you can share endpoint connectors, custom annotations, and functions as versioned packages that are pushed to and pulled from the repository.

Learn more

With the emergence of microservice architectures, the software industry is moving towards cloud-native application development. Cloud-native programming languages, such as Ballerina, will be an essential element of fast innovation.

Resources for learning more about Ballerina are available on the Learn Ballerina section of the project's website. Also, consider attending Ballerinacon, July 18, 2018, in San Francisco and streamed globally. This full-day event will offer intense training on the best practices of microservice development, resiliency, integration, Docker and Kubernetes deployment, service meshes, serverless, test-driven microservice development, lifecycle management, observability, and security. OpenSource.com readers can attend for free by using coupon code BalCon-OpenSource when ordering tickets.
