So far, ownCloud users have only been able to choose between simple local file storage on a POSIX-compatible file system and EOS Open Storage (EOS), which makes setup massively complex. More recent versions of ownCloud Infinite Scale (oCIS) feature a functionality called Decomposed FS, which is supposed to bring oCIS to arbitrary storage backends, including scalable ones.

During the rewrite of ownCloud from PHP to Go, the company behind the private cloud solution is introducing massive changes to the software's backend. This time, the developers are doing away with a tedious requirement of the first versions of ownCloud Infinite Scale (oCIS). By implementing a file system abstraction layer, oCIS can use arbitrary storage backends, including storage that can seamlessly scale out. This article explains the reasons behind ownCloud's decision, the details of the Decomposed FS component, and what it means for ownCloud users and future deployments.

To understand the struggle that oCIS faces, it helps to take a quick look back at the genesis of ownCloud Infinite Scale. What started as an attempt to gain more performance resulted in a complete rewrite of the tool in a different programming language. ownCloud partnered with several companies and institutions when rewriting its core product, among them a university in Australia. That university used EOS, a storage technology developed by CERN, the European physics research institute known for running projects like the Large Hadron Collider (LHC). For the partnership between ownCloud and the Australian university to make any sense, oCIS needed to be tied to the institute's existing on-premises storage solution.

File storage at CERN is vastly different from what the average user deals with. While not the only experiment at CERN, the LHC certainly generates some of the largest amounts of data. Although CERN uses technology such as OpenStack to crunch the data produced while the LHC is running, terabytes of material still need to be stored every day for later use. Accordingly, seamless scalability is one of CERN's key requirements for its storage. The institute's answer to that demand is a storage solution called "EOS Open Storage" (EOS). At the time of writing, EOS at CERN had a capacity of over 500 petabytes, making it one of the largest storage facilities in use at any research institute worldwide.

EOS is not a file system, or anything close to one. Instead, it is a combination of numerous services with various interfaces. It implicitly takes care of redundancy, and its layout may remind some readers of solutions such as Lustre, with central services for file metadata and file placement. EOS was clearly designed with massive amounts of data in mind, and it can scale out to dimensions that average admins will hardly ever encounter.

EOS doesn't work for end-users

This immense scale is precisely why the close ties between oCIS and EOS soon became a challenge for the vendor and its software. The question arose: could oCIS also run with a simple driver for local storage, just like ownCloud 10? Such a basic driver, however, did not deliver all the features that ownCloud wanted to implement. On the other hand, even the most optimistic oCIS developers knew from the start that average end users would never run their own EOS instance, for several reasons. First, the required hardware makes no sense for most admins and would likely exceed their budgets by several orders of magnitude. Second, running a storage solution such as EOS solely to store pictures of Aunt Sally's birthday party is hardly reasonable. Third, EOS's complexity would quickly scare most users away from oCIS. To make oCIS a success with average users as well, ownCloud had to devise a plan adapted to the storage facilities usually found in smaller environments. And that is where Decomposed FS comes into play.

The challenges of storing files

To understand what Decomposed FS actually is, it helps to recall how typical POSIX file systems work and why they can be a challenge for solutions such as oCIS. A file system is a data structure that organizes data on a storage device so that it can be found again. Usually, the devices in question are based on storage blocks (block devices). Without a file system, it would be possible to write data to a device, but not to read specific files back later in a targeted manner. Instead, the entire content of the storage device would have to be scanned until the desired information was found, which would, of course, be highly inefficient and very slow.

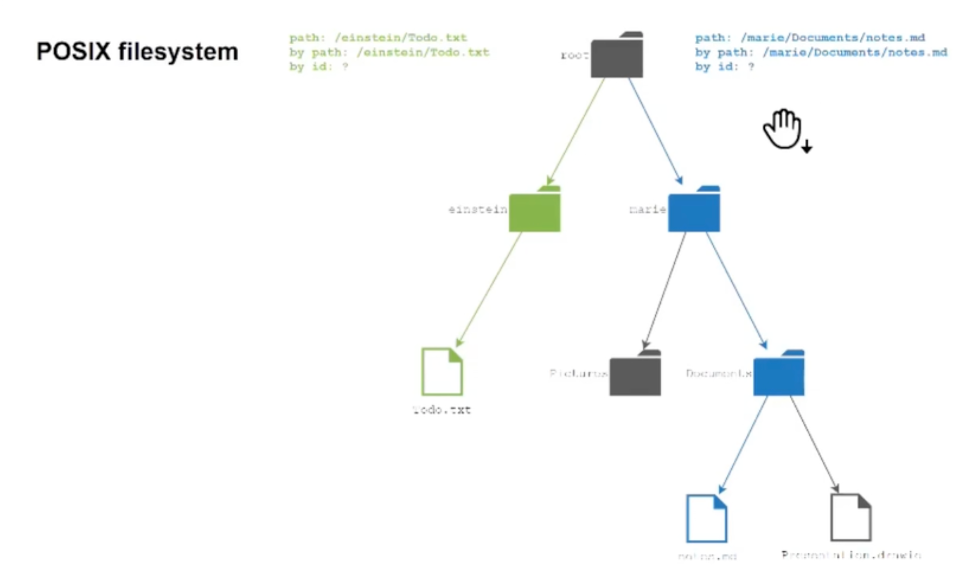

UNIX and UNIX-like operating systems nowadays mostly use file systems that meet the requirements of the POSIX standard (Figure 1). Hence, they use a hierarchical structure, which is why you will often read about "file system trees." There is a root directory (/) and subdirectories containing the actual files with their payload. Strictly speaking, directories are handled internally by the file system as a special kind of file. File access is path-based: users implicitly or explicitly specify the full path to a file they want to open, and the kernel's file system driver reads the file from the storage device and presents it to the user. This process alone is already a complicated mechanism.
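To make the cost of path-based access concrete, here is a minimal sketch in Go (the language oCIS is written in) of how a path lookup conceptually works. The in-memory `inode` type and the `resolve` function are hypothetical illustrations, not kernel code: every path component requires one directory lookup, so a deep path means many lookups before the file's inode is even known.

```go
package main

import (
	"fmt"
	"strings"
)

// inode is a hypothetical in-memory stand-in for an on-disk inode.
// Directories map child names to the inode numbers of those children.
type inode struct {
	number   uint64
	isDir    bool
	children map[string]uint64 // only used for directories
}

// resolve walks a path one component at a time, starting at the root inode,
// the way a POSIX path lookup conceptually works. It returns the inode number
// of the last component and the number of directory lookups it needed.
func resolve(table map[uint64]*inode, root uint64, path string) (uint64, int, error) {
	current := root
	lookups := 0
	for _, name := range strings.Split(strings.Trim(path, "/"), "/") {
		if name == "" {
			continue // "/" itself resolves to the root
		}
		dir := table[current]
		if dir == nil || !dir.isDir {
			return 0, lookups, fmt.Errorf("cannot look up %q: parent is not a directory", name)
		}
		lookups++
		next, ok := dir.children[name]
		if !ok {
			return 0, lookups, fmt.Errorf("%q: no such file or directory", name)
		}
		current = next
	}
	return current, lookups, nil
}

func main() {
	// Inode 2 is the root directory, as on ext2/3/4.
	table := map[uint64]*inode{
		2:  {number: 2, isDir: true, children: map[string]uint64{"home": 11}},
		11: {number: 11, isDir: true, children: map[string]uint64{"alice": 12}},
		12: {number: 12, isDir: true, children: map[string]uint64{"notes.txt": 13}},
		13: {number: 13},
	}
	ino, lookups, err := resolve(table, 2, "/home/alice/notes.txt")
	fmt.Println(ino, lookups, err) // 13 3 <nil>
}
```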

Figure 1: POSIX-compatible file systems allow users to access files based on their path only. This is slow and prevents several relevant features from being implemented properly (Markus Feilner and Martin Loschwitz, CC BY-SA 4.0).

Matters get worse, though. In modern operating systems, a path is not the only property of a file. Consider file permissions, for instance: it is often desirable to limit access to specific files to a certain group of people. Imagine a mail server where users only get access to their mailbox after successfully logging in with a username and password. Admins definitely do not want user A to be able to open user B's mailbox. To accommodate this need, file systems implement a permission scheme, which means that information on who may access each file must be stored somewhere. This is where inodes come into play in POSIX-based file systems. Inodes store a file's metadata and are identified by a number (the inode ID), and directory entries map each file name to the inode of that file. Users cannot open a file by its inode ID through the standard POSIX interface. In addition, inode IDs are recycled: once a file is deleted, its inode ID can be assigned to a new file, so the same ID may refer to the metadata of a completely different file a week later.
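The recycling of inode IDs is easy to underestimate. The following toy model, with a hypothetical `inodeTable` type that keeps freed numbers on a simple free list, shows why an application cannot safely remember an inode ID. Real file systems allocate inodes differently (ext4, for example, tracks free inodes with bitmaps), but the effect is the same: a number stored by an application may later describe a different file.

```go
package main

import "fmt"

// inodeTable is a toy model of inode number allocation. Freed numbers go
// onto a free list and are handed out again. Real allocators differ in detail.
type inodeTable struct {
	next  uint64
	free  []uint64
	owner map[uint64]string // inode number -> file it currently describes
}

func newInodeTable() *inodeTable {
	return &inodeTable{next: 100, owner: map[uint64]string{}}
}

// allocate returns a recycled number if one is available, else a fresh one.
func (t *inodeTable) allocate(file string) uint64 {
	var n uint64
	if len(t.free) > 0 {
		n = t.free[len(t.free)-1]
		t.free = t.free[:len(t.free)-1]
	} else {
		n = t.next
		t.next++
	}
	t.owner[n] = file
	return n
}

// release frees a number when its file is deleted.
func (t *inodeTable) release(n uint64) {
	delete(t.owner, n)
	t.free = append(t.free, n)
}

func main() {
	t := newInodeTable()
	remembered := t.allocate("report.pdf") // an application stores this number
	t.release(remembered)                  // report.pdf is deleted
	t.allocate("vacation.jpg")             // a new file reuses the number
	fmt.Println(remembered, t.owner[remembered]) // 100 vacation.jpg
}
```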

"Just use this!" is not enough for oCIS

The bad news does not end there. As pointed out above, files on a POSIX file system cannot be accessed by a static ID at all; the traditional POSIX scheme allows for path-based access only. Internally, the kernel driver for a specific file system does perform an inode lookup based on the inode ID, but that ID is not permanent and cannot be used by applications to open a file.

What sounds like a technical nuance is a major deal breaker for solutions such as ownCloud Infinite Scale, for which the traditional UNIX way of handling files doesn't quite cut it. It would, of course, be tempting to program oCIS to rely solely on the underlying file system's file handling. However, that would make it impossible to use different drivers for different storage backends, because every backend would have to provide identically behaving POSIX semantics. Solutions like EOS would be out of the game, as would solutions based on Amazon's S3 or aspiring technologies such as Ceph and its backing storage, the RADOS object store. Those interested in upstream details about the oCIS and S3NG storage drivers should consult the upstream oCIS documentation.

Users want features that POSIX doesn't provide

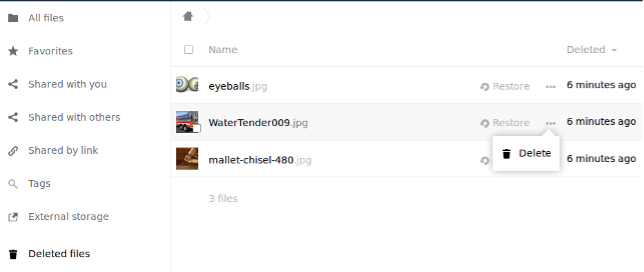

What users expect nowadays from solutions such as ownCloud far exceeds the abilities of the POSIX standard. Take a function as simple as the trash bin: users generally want a storage solution to provide a place where files are kept temporarily before being deleted permanently. Mistakes happen, and users are often relieved to recover files from the bin that they had already considered lost. POSIX simply does not offer such a feature. Implementing a trash bin on a typical POSIX file system would mean iterating through the tree and moving files around within the file system. That would be highly inefficient, consume plenty of resources, and take quite a while to complete if, for instance, a user moved a directory with thousands of files to the bin.

Where available, kernel caching helps mitigate the performance issues associated with such operations. It is a poison in disguise, though: relying on it adds yet another constraint on the file system driver of the storage device used by oCIS.

From ownCloud's point of view, moving the files around on the file system wouldn't even make sense. After all, ownCloud would only need to know that certain files are marked as "trash," and it wouldn't really matter where the actual data resides on the physical storage device.

Metadata: "Here be dragons" from an oCIS point of view

Again, directly editing the metadata in inodes by inode ID is out of the question, because inode IDs are not stable for the reasons mentioned above. This is problematic from another perspective as well: attaching additional metadata efficiently is challenging for solutions such as ownCloud. Modern file systems support user extended attributes, arbitrary key-value properties that applications can attach to files. However, because there is no reliable way to reach the metadata of a given file without traversing the tree to it, this theoretically available feature remains mostly unused.

Long story short: traditional POSIX semantics come with some problems for solutions like oCIS. The two major issues are the inability to access files by a unique ID and the inability to access file metadata without traversing the file system tree.

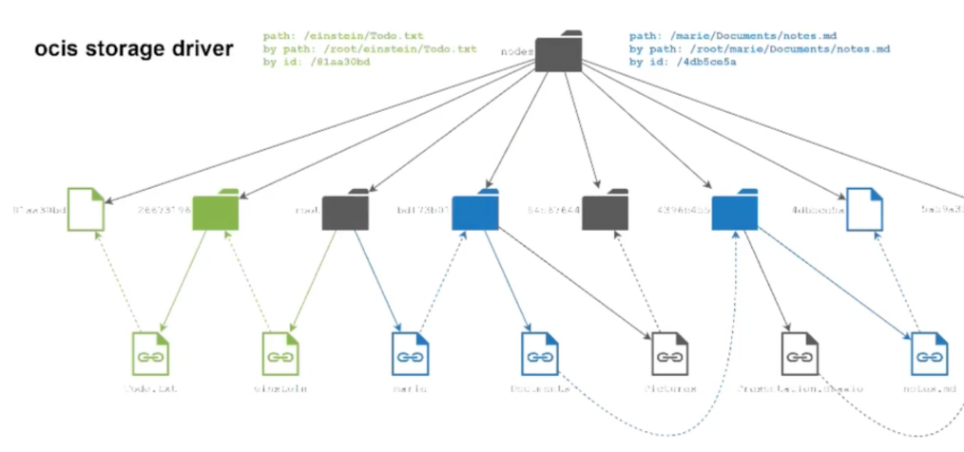

What the oCIS developers now call Decomposed FS is their attempt to decouple ownCloud's own file handling from the handling of files on the underlying storage device. Decomposed FS got its name because it implements certain aspects of a POSIX file system on top of arbitrary storage facilities. In contrast to POSIX file systems, everything in Decomposed FS is centered around file access based on unique IDs, more precisely UUIDs (Figure 2).

Figure 2: The first incarnation of Decomposed FS uses unique IDs (UUIDs) to name files. A structure of symbolic links is additionally established to allow access by traversing a tree of files (Markus Feilner and Martin Loschwitz, CC BY-SA 4.0).

How does that work in practice?

Like normal POSIX file systems, Decomposed FS uses a hierarchical model to handle its files. A root directory serves as the entry point to the complete set of files stored in ownCloud. Below that root directory, oCIS creates individual files on the file system layer that use unique IDs (UUIDs) as their names. These files hold the actual payload (blob) of the users' files.

Obviously, ownCloud still needs a way to organize its files in directories from the user's point of view. Users will still expect to be able to build a hierarchical structure for their own files ("Pictures / Holidays / Bahamas 2021 / ...") regardless of how the software organizes its files internally. To imitate such a structure, the ownCloud developers resort to symbolic links. They create a separate tree of "nodes" that represent the files and folders of the user-facing tree, and they store each file's metadata in the extended attributes of its node. This ensures that files can still be accessed by traversing the tree (more precisely, the tree of symbolic links), while individual files can also be accessed directly by their unique ID. Another advantage is that iterating over the tree only requires loading the nodes, which contain no file contents; the file contents are loaded only when the user downloads the file. Even moving files within the tree no longer touches the file contents; it just changes the node tree.

Figure 3: Functions like a trash bin are not provided by the underlying file system; they need to be provided by software, and Decomposed FS makes that considerably easier and less resource-intensive (Markus Feilner and Martin Loschwitz, CC BY-SA 4.0).

This mode of operation brings massive advantages, which become obvious when looking at the earlier example of moving many files to the trash bin (Figure 3). Rather than shuffling the actual files around on the backend storage, Decomposed FS simply lets oCIS mark the affected nodes as "trashed" and leaves the actual files untouched.
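Marking instead of moving can be expressed in a few lines. In this in-memory sketch, the hypothetical `node` type carries a `trashed` flag: trashing a directory with 5,000 children changes a single field, and listings simply skip trashed subtrees. How Decomposed FS persists the trash state on disk is not covered here.

```go
package main

import "fmt"

// node is a hypothetical in-memory stand-in for a Decomposed FS node.
type node struct {
	name     string
	children []*node
	trashed  bool
}

// add creates a child node and returns it.
func (n *node) add(name string) *node {
	c := &node{name: name}
	n.children = append(n.children, c)
	return c
}

// trash marks a single node. No matter how large its subtree is, nothing is
// moved and no blob is touched, so the cost is constant.
func (n *node) trash() {
	n.trashed = true
}

// restore undoes trash, again by flipping a single flag.
func (n *node) restore() {
	n.trashed = false
}

// visibleNodes counts the nodes a user would see, skipping trashed subtrees.
func visibleNodes(n *node) int {
	if n.trashed {
		return 0
	}
	count := 1
	for _, c := range n.children {
		count += visibleNodes(c)
	}
	return count
}

func main() {
	root := &node{name: "/"}
	project := root.add("old-project")
	for i := 0; i < 5000; i++ {
		project.add(fmt.Sprintf("file-%d.txt", i))
	}
	fmt.Println(visibleNodes(root)) // 5002
	project.trash()
	fmt.Println(visibleNodes(root)) // 1
	project.restore()
	fmt.Println(visibleNodes(root)) // 5002
}
```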

In technical terms, Decomposed FS only creates files, creates symbolic links, and moves (renames) entries, all of which are atomic operations on POSIX file systems. In addition, it uses locking when changing file attributes to avoid conflicts.
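The article does not say which locking mechanism Decomposed FS uses. The sketch below illustrates the general idea with in-process mutexes, one per node ID, so that concurrent read-modify-write updates of the same node's attributes cannot overwrite each other. The `attrStore` type is hypothetical, and a real on-disk implementation would need locks that also work across processes, such as file locks.

```go
package main

import (
	"fmt"
	"strconv"
	"sync"
)

// attrStore is a hypothetical in-memory stand-in for per-node attributes.
type attrStore struct {
	mu    sync.Mutex             // guards the maps below
	locks map[string]*sync.Mutex // one lock per node ID
	attrs map[string]map[string]string
}

func newAttrStore() *attrStore {
	return &attrStore{locks: map[string]*sync.Mutex{}, attrs: map[string]map[string]string{}}
}

// lockFor returns the lock of a node, creating it on first use.
func (s *attrStore) lockFor(id string) *sync.Mutex {
	s.mu.Lock()
	defer s.mu.Unlock()
	l, ok := s.locks[id]
	if !ok {
		l = &sync.Mutex{}
		s.locks[id] = l
	}
	return l
}

// update performs a read-modify-write of one node's attributes while
// holding that node's lock, so concurrent updates cannot lose changes.
func (s *attrStore) update(id string, modify func(map[string]string)) {
	l := s.lockFor(id)
	l.Lock()
	defer l.Unlock()

	s.mu.Lock()
	attrs := make(map[string]string, len(s.attrs[id]))
	for k, v := range s.attrs[id] {
		attrs[k] = v
	}
	s.mu.Unlock()

	modify(attrs) // runs while holding only the node lock

	s.mu.Lock()
	s.attrs[id] = attrs
	s.mu.Unlock()
}

func (s *attrStore) get(id, key string) string {
	s.mu.Lock()
	defer s.mu.Unlock()
	return s.attrs[id][key]
}

// incrementConcurrently bumps a counter attribute from many goroutines.
func incrementConcurrently(s *attrStore, id string, workers int) {
	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			s.update(id, func(a map[string]string) {
				n, _ := strconv.Atoi(a["count"]) // a missing attribute counts as 0
				a["count"] = strconv.Itoa(n + 1)
			})
		}()
	}
	wg.Wait()
}

func main() {
	s := newAttrStore()
	incrementConcurrently(s, "node-1", 100)
	fmt.Println(s.get("node-1", "count")) // 100
}
```

Without the per-node lock, two goroutines could read the same old value and one increment would be lost.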

One goal the oCIS developers pursued when creating Decomposed FS was to be as agnostic of the underlying storage device as possible. Currently, any POSIX file system will do. The users' home directories are not touched; all blobs and nodes are stored inside the oCIS data directory.

Consequently, it is safe to assume that oCIS will reliably work atop almost every file system currently supported by Linux or Windows, and it will certainly work with the file systems these operating systems use by default.

The road ahead

The oCIS developers explicitly refer to Decomposed FS as a "testbed for future development." Nevertheless, the oCIS file system driver for ownCloud Infinite Scale already implements most components of the Decomposed FS design as documented by ownCloud, and the developers are anything but out of ideas for improving the concept further. Because the only software that manages a Decomposed FS instance created by ownCloud is, well, ownCloud itself, the vendor enjoys considerable freedom to experiment with and extend the Decomposed FS feature set.

Accordingly, new features are added to Decomposed FS regularly, such as the ability to completely decouple the Decomposed FS namespace from the actual payload of the files stored in oCIS. This feature has meanwhile landed in an extension of the oCIS storage driver named after the Amazon S3 protocol (S3NG). It enables file storage on separate and distinct storage devices, even ones that are not locally present: the local blobstore, which only stores the files' contents, can be replaced by a remote object store. This is where S3 makes its debut in the setup. If only the metadata needs to be stored locally and S3 serves as the backing storage, the user is, in fact, already equipped with seamlessly and virtually endlessly scalable storage.
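The effect of separating the namespace from the blobstore can be sketched as follows. The `Blobstore` interface and the `countingStore` type, which stands in for a remote S3 bucket and counts requests, are hypothetical. Renaming a thousand files only changes local metadata and sends no request to the remote store; only uploads and downloads reach it.

```go
package main

import "fmt"

// Blobstore is a hypothetical interface; only file contents pass through it.
type Blobstore interface {
	Put(id string, data []byte)
	Get(id string) []byte
}

// countingStore stands in for a remote object store and counts requests.
type countingStore struct {
	objects  map[string][]byte
	requests int
}

func (s *countingStore) Put(id string, data []byte) {
	s.requests++
	s.objects[id] = data
}

func (s *countingStore) Get(id string) []byte {
	s.requests++
	return s.objects[id]
}

// node holds local metadata and references its content only by blob ID.
type node struct {
	name   string
	blobID string
}

// namespace keeps all metadata locally and delegates contents to blobs.
type namespace struct {
	nodes map[string]*node
	blobs Blobstore
}

func (ns *namespace) upload(nodeID, name string, data []byte) {
	blobID := "blob-" + nodeID
	ns.blobs.Put(blobID, data)
	ns.nodes[nodeID] = &node{name: name, blobID: blobID}
}

// rename is a pure metadata operation.
func (ns *namespace) rename(nodeID, newName string) {
	ns.nodes[nodeID].name = newName
}

func (ns *namespace) download(nodeID string) []byte {
	return ns.blobs.Get(ns.nodes[nodeID].blobID)
}

func main() {
	remote := &countingStore{objects: map[string][]byte{}}
	ns := &namespace{nodes: map[string]*node{}, blobs: remote}
	for i := 0; i < 1000; i++ {
		ns.upload(fmt.Sprintf("n%d", i), fmt.Sprintf("file%d.txt", i), []byte("payload"))
	}
	before := remote.requests
	for i := 0; i < 1000; i++ {
		ns.rename(fmt.Sprintf("n%d", i), fmt.Sprintf("renamed%d.txt", i))
	}
	fmt.Println(remote.requests - before)  // 0
	fmt.Println(string(ns.download("n42"))) // payload
	fmt.Println(remote.requests)            // 1001
}
```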

A faster and more flexible oCIS driver is not yet enough for the vendor to rest on its laurels, though. New features are already planned for the driver, including one designed by the ownCloud developers that is nothing short of spectacular: in future releases, ownCloud intends to do away with the requirement of storing files on a POSIX-compatible backend.

To understand why that makes sense, another look at POSIX helps. POSIX was designed to offer various guarantees when accessing the file system, including consistency guarantees for reads and writes when files are renamed or existing ones are overwritten. However, many of these operations never occur in ownCloud setups. Because ownCloud maintains its own file system on the storage backend, overwriting a file does not mean replacing content on the storage device with newer content. Instead, a new blob is added, and the existing symlink is changed to point to the new file. The POSIX guarantees would not be an issue if they did not consume performance that would otherwise be available to users.

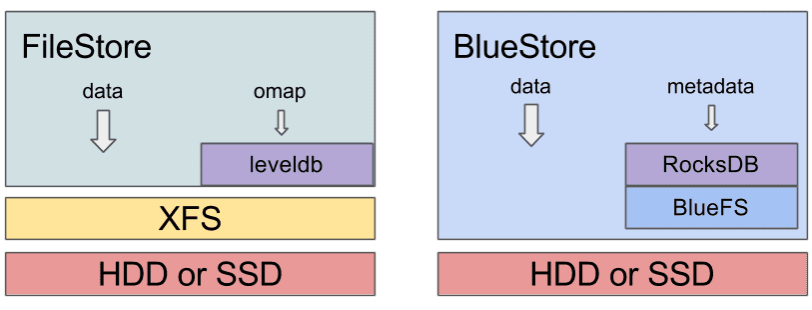

The ownCloud developers are not the first to run into this issue. The developers of the scalable object store RADOS, the heart of Ceph, long used XFS as the on-disk format before giving up on it and creating BlueStore (Figure 4). BlueStore does away with the need for a POSIX file system on the physical storage device altogether. Instead, Ceph stores the names of its objects along with their physical storage addresses in a huge key-value store based on RocksDB. ownCloud has already expressed its intention to steer Decomposed FS in a similar direction. In such a setup, there is no longer any need for a local file system: details on existing files and their paths are stored in a large RocksDB key-value store as well, and even the local symlink tree used for iteration becomes unnecessary.
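A namespace without a local file system tree might look roughly like this. The in-memory `kv` type only mimics the two properties of an ordered key-value store such as RocksDB that matter here, point lookups and sorted iteration over a key prefix; it does not use the RocksDB API. The key layout (`child/<parentID>/<name>` and `node/<id>`) and the storage-address string are hypothetical.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// kv mimics an ordered key-value store: point lookups plus sorted prefix scans.
type kv struct{ data map[string]string }

func (s *kv) put(k, v string) { s.data[k] = v }

func (s *kv) get(k string) (string, bool) {
	v, ok := s.data[k]
	return v, ok
}

// scan returns all keys with the given prefix in sorted order, as an
// iterator over an ordered store would.
func (s *kv) scan(prefix string) []string {
	var keys []string
	for k := range s.data {
		if strings.HasPrefix(k, prefix) {
			keys = append(keys, k)
		}
	}
	sort.Strings(keys)
	return keys
}

// resolve turns a user-facing path into a node ID, one lookup per component.
func resolve(s *kv, path string) (string, bool) {
	id := "root"
	for _, name := range strings.Split(strings.Trim(path, "/"), "/") {
		if name == "" {
			continue
		}
		next, ok := s.get("child/" + id + "/" + name)
		if !ok {
			return "", false
		}
		id = next
	}
	return id, true
}

// list returns the names in a directory via a single prefix scan.
func list(s *kv, dirID string) []string {
	prefix := "child/" + dirID + "/"
	var names []string
	for _, k := range s.scan(prefix) {
		names = append(names, strings.TrimPrefix(k, prefix))
	}
	return names
}

func main() {
	s := &kv{data: map[string]string{}}
	s.put("child/root/Pictures", "id-pics")
	s.put("child/id-pics/b.jpg", "id-b")
	s.put("child/id-pics/a.jpg", "id-a")
	s.put("node/id-a", "device0:offset=4096") // hypothetical storage address

	id, _ := resolve(s, "/Pictures/a.jpg")
	addr, _ := s.get("node/" + id)
	fmt.Println(id, addr)           // id-a device0:offset=4096
	fmt.Println(list(s, "id-pics")) // [a.jpg b.jpg]
}
```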

Figure 4: Ceph has long done away with using POSIX for its on-disk file scheme. It resorts to RocksDB and a minimalistic self-written file system instead. The Decomposed FS architecture of ownCloud will soon allow it to follow suit (Markus Feilner and Martin Loschwitz, CC BY-SA 4.0).

In addition, maintaining its own file system namespace gives oCIS several major advantages over a simple, POSIX-compatible file system and will allow additional features to be implemented. For instance, ownCloud could handle its files in a Git-like manner, with different versions of the same file being present. With access realized through a key-value store, information about previous versions of the same file is easy to store.

Summary: oCIS for the masses

The introduction of Decomposed FS and the ability to decouple the actual file storage from the file system metadata are huge game changers from the oCIS point of view. Many home users will likely still use a single disk as a local oCIS storage backend and simply benefit from the increased performance of Decomposed FS. Institutions and companies, on the other hand, will appreciate that running oCIS on scalable storage solutions has already become much easier thanks to the new feature. Additional improvements planned for the near future will, if they make it into the stable oCIS distribution, increase the versatility of oCIS even further. An ownCloud instance that writes its file blobs directly into Ceph could be very interesting for SMBs; after all, the days when even small Ceph clusters were hard to build are long gone. One way or another, the development of Decomposed FS strengthens oCIS and makes it available to a much broader audience.
