At the sold-out DockerCon on Monday and Tuesday of this week, there were plenty of big announcements, but the biggest was Docker going 1.0. Whether it now meets every need for production workloads is a hotly debated topic, but there's no doubt that this milestone is an important step in getting Docker into the datacenter.

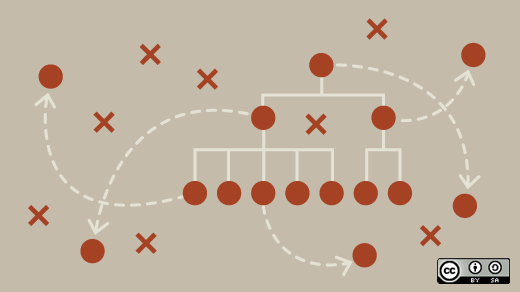

So what is Docker, anyway? Docker is a platform for Linux containers that is designed to make it easy for developers and system administrators to create and deploy distributed applications. Docker makes packaging all of the parts of an application (the tools, configuration files, libraries, and more) a much simpler task. Conceptually, it's a bit like a virtual machine: a single powerful machine can be shared among many applications, each with its own specific configuration requirements, without those applications interfering with one another. Unlike in a virtual machine, however, applications run natively on the underlying Linux kernel; each is simply carefully isolated from the others and from the underlying operating system. Need more? Here's a quick video to give you a little additional background.

So containers are great: they're fast, efficient, easy to use, and lightweight. Will containers replace traditional virtualization? Well, yes and no. Containers are a great option for developing new applications and for porting some older ones. But the world is still running a lot of legacy applications that will never be made to run in a Linux container, either because of specific application requirements or because of the need to maintain existing support protocols. And unlike containers, virtual machines can run non-Linux guests, should the application require that. But this shouldn't dampen your enthusiasm for Docker and Linux containers becoming an important part of application scaling in the near future.

The 1.0 release of Docker brings a number of improvements that should make many developers and admins ready to make the jump. For example, networking has greatly improved, and containers can now connect to host network interfaces directly, without the need for bridging on the host operating system. It also plays nicely with SELinux, allowing for much better security implementations. And of course, many bugs have been ironed out in the process as well.
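To make the host networking point a little more concrete, here is a minimal sketch in Python that builds a `docker run` command line using the `--net=host` option, which shares the host's network stack with the container instead of attaching it to a bridge. The helper name `docker_run_host_net`, the `ubuntu` image, and the `ip addr` command are illustrative assumptions, not anything announced at DockerCon, and the sketch only builds the command rather than running it.

```python
import shlex


def docker_run_host_net(image, command):
    """Build (but do not execute) a `docker run` invocation with host networking.

    `image` and `command` are placeholders chosen by the caller; this helper
    is hypothetical and exists only for illustration.
    """
    # --net=host shares the host's network stack with the container,
    # so no bridge is involved. --rm removes the container once it exits.
    return ["docker", "run", "--rm", "--net=host", image] + list(command)


# Illustrative values: any image that ships the `ip` tool would do.
argv = docker_run_host_net("ubuntu", ["ip", "addr"])

# Print the command in a copy-pasteable, shell-quoted form.
print(" ".join(shlex.quote(arg) for arg in argv))
```

On a machine with Docker installed, the printed command could be pasted into a shell, or the `argv` list could be handed to `subprocess.run`. Inside the container, the listed interfaces should then match those of the host.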

Docker is going to be an important tool for OpenStack administrators to familiarize themselves with, as it rises to stand beside traditional virtual machines in OpenStack clusters. Linux containers can be launched either independently through Heat, allowing for configuration and orchestration options to be developed here, or through Nova, treating containers as if they are another type of hypervisor through a specialized driver. What works best for you will depend on your exact use case.

Want to learn more about how OpenStack and Docker can work together? Check out this session video from the OpenStack Summit in Atlanta last month, which includes a brief introduction to the concepts along with some best practices for deployment.
