The Ohio Lottery jackpot is now over $500 million, and $700 million has already been taken in on this drawing. Not bad for the state. Lotteries work, and Vegas was built, on a simple notion: we believe we can beat the odds because each of us believes we are a little special.

Part of our sense of being special, and of our ability to beat the odds, comes from the narrative of our own lives: a story in which we take the starring role. If we can imagine it, it seems more plausible and more possible.

Nobel laureate in economics Daniel Kahneman calls this "plausibility vs. probability" in his latest book, Thinking, Fast and Slow. Our minds tend to turn plausibilities into probabilities, inflating and overweighting both our chances of success and our risk of losses, depending on how the risks are framed.

Kahneman points out that we live in a probabilistic world, and we would all make better decisions if we reasoned rationally and judged our choices over the long run. But it's not easy. We're not wired for it.

The fact is, we're all very bad at making statistical decisions, doctors and patients included, and it costs us. Even people well trained in statistics are susceptible to this and many other types of statistical error.

Part of physician training is meant to make doctors ultra-confident and seemingly infallible. Who has time to argue when someone's life is on the line?

Yet physicians consistently misinterpret statistics. It's well documented that we and our doctors will make very different decisions depending on whether an option is described as having a 90% survival rate or a 10% mortality rate, even though the two descriptions are identical.

Or take this classic example from I.C. McManus's "The Arithmetic of Risk":

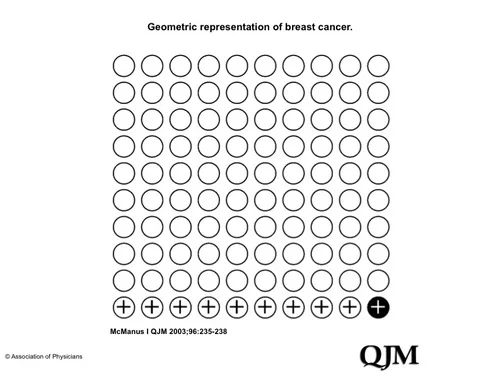

You are asked to advise an asymptomatic woman who has been screened for breast cancer and who has a positive mammogram. The probability that a woman of 40 has breast cancer is about 1%. If she has breast cancer, the probability that she tests positive on a screening mammogram is 90%. If she does not have breast cancer, the probability that she nevertheless tests positive is 9%. What then is the probability that your patient actually has breast cancer?

Go ahead, scratch out some numbers....

It's not easy.

If you're like most doctors, you estimated that the woman who tested positive on a mammogram has about a 90% chance of having breast cancer. But you (and I) and most doctors are way off.

The correct answer is about 10%. Think of 100 women. One has breast cancer, and she will probably test positive. Of the 99 who do not have breast cancer, about nine (9% of 99) will also test positive. Thus a total of about ten women will test positive. Now, of the women who test positive, how many have breast cancer? Easy: ten test positive, of whom one has breast cancer. That is 10%. (Carried through without rounding, the exact figure is about 9.2%.)
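The arithmetic above can be checked in a few lines of Python. This is a minimal sketch: the function names are hypothetical, and the only inputs are the three numbers from the McManus example (1% prevalence, 90% chance of a positive test with cancer, 9% chance of a positive test without it). It computes the answer two ways: with Bayes' theorem, and as natural frequencies, i.e. whole women out of 100, the way the story above tells it.

```python
# Inputs taken from the McManus mammogram example; function names are hypothetical.
PREVALENCE = 0.01           # P(cancer) for a woman of 40
SENSITIVITY = 0.90          # P(positive | cancer)
FALSE_POSITIVE_RATE = 0.09  # P(positive | no cancer)


def posterior(prevalence: float, sensitivity: float, fpr: float) -> float:
    """Bayes' theorem: P(cancer | positive) = P(pos | cancer) * P(cancer) / P(pos)."""
    true_pos = sensitivity * prevalence
    false_pos = fpr * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def natural_frequencies(n: int, prevalence: float, sensitivity: float, fpr: float) -> tuple[int, int]:
    """Tell the same problem as a story about n women, rounding to whole people.

    Returns (women with cancer who test positive, women without cancer who test positive).
    """
    sick = round(n * prevalence)
    healthy = n - sick
    true_pos = round(sick * sensitivity)
    false_pos = round(healthy * fpr)
    return true_pos, false_pos


if __name__ == "__main__":
    exact = posterior(PREVALENCE, SENSITIVITY, FALSE_POSITIVE_RATE)
    print(f"Exact probability of cancer given a positive test: {exact:.1%}")

    tp, fp = natural_frequencies(100, PREVALENCE, SENSITIVITY, FALSE_POSITIVE_RATE)
    print(f"Of 100 women, {tp + fp} test positive and {tp} of them has cancer: {tp / (tp + fp):.0%}")
```

Both routes land near 10%, nowhere near 90%. The rounded story gives exactly 1 in 10; Bayes' theorem without rounding gives about 9.2%.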

When the problem is framed the right way (as a narrative about real people rather than as pure mathematics), and our decision support offers that kind of framing, the answer is easy.

These poor decisions lead to unnecessary treatment and increased costs. In a related example, the majority of physicians drew unfounded conclusions from screening statistics, which leads to higher costs and more information but no improvement in results: https://www.annals.org/content/156/5/392.extract

How we frame the question and how we calculate the odds have an enormous influence on the answer we get and on the recommendation we might make.

The opportunity in health care, reiterated by several physicians on the panel with Esther Dyson at the eCollaboration Forum at HIMSS12, is to provide both physicians and patients with tools that help them make better decisions, while recognizing that the decisions are ultimately theirs to make. Much of the accountable care model will hinge on the ability of physicians and patients to make the right decisions, and on how well we can help them interpret statistics along the way.

That's why we need better decision-making tools for physicians and patients, to help us make decisions based on probabilities rather than plausibilities (our legal system, for better or for worse, is built upon plausibilities, which leads to other bad health care decisions, but that's a story for another time). We expect physicians to be infallible and certain, and their training often reinforces this mindset, but the right apps and dashboards could do a better job of interpreting statistics and making recommendations based on them. Read Kahneman's book and you're unlikely to trust an expert ever again (without a little statistical help).
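As a hypothetical illustration of the kind of app or dashboard feature described above, the sketch below always states an outcome in both frames, survival and mortality, as counts of people. That way a "90% survival rate" and a "10% mortality rate" can't be mistaken for different options. The function name and the default 1,000-patient denominator are assumptions for illustration, not part of any real product.

```python
def balanced_framing(survival_rate: float, per: int = 1000) -> str:
    """Hypothetical helper: describe one outcome in both the survival and the
    mortality frame, as whole-person counts out of `per` patients."""
    if not 0.0 <= survival_rate <= 1.0:
        raise ValueError("survival_rate must be between 0 and 1")
    survivors = round(survival_rate * per)
    deaths = per - survivors  # the mortality frame is the complement of the survival frame
    return (f"Out of {per:,} patients like you, about {survivors:,} survive "
            f"and about {deaths:,} die.")


print(balanced_framing(0.90))
```

Reporting counts of people rather than a single percentage mirrors the 100-women framing that made the mammogram problem easy.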

As the saying goes, we are all special, just like everyone else. As we enter an age of Big Data, we'll need more help than ever going from data to meaning (with the right framing) to decision-making in practice. The success or failure of these new models may hinge on our ability to do so, and that ability may well become the new basis of competition in health care.

Read more on Leonard's blog:

- Ideas Are Cheap ...Until they're shared
