Professionally and personally, we are an increasingly digital culture. The physical distribution channels for information, data, news, stories, and conversation we learned from as young minds are waning in popularity. Books, TV, tapes, CDs, radio, newspapers, and magazines are in decline as the music, entertainment, business information, personal conversations, and current events we demand get delivered to us in an inbox, feed, app, or social network.

But where will it all go when we're done with it? There is a disturbing lack of serious discussion among content producers and consumers alike about long-term preservation of this electronic residue that we're all creating these days.

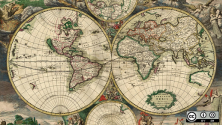

Who among us hasn't had a little thrill of the adventurer, the explorer, the treasure-hunter opening up a dusty box of old letters or photographs? Of unearthing an artifact that showed how our great-grandparents lived? Of piecing together a sense of what our town or city looked like and sounded like decades ago? How will generations in the future be able to piece together our lives, worries, hopes, and dreams when so much of it exists only in bits and bytes?

I hope this recent piece on opensource.com about the importance of open standards becomes an ongoing discussion theme, because open source and open standards together provide one of the few realistic solutions to the escalating problem of digital preservation. The content management technology field, where I've spent most of my career, needs to take up this debate. In a space currently dominated by proprietary technologies, managing the long-term preservation, provenance, and accessibility of digital content is often downplayed or ignored.

Three critical elements must come together to allow the public sector, cultural institutions, schools, businesses, and family and civic groups to ensure the same level of historical evidentiary preservation in the digital age that we all took for granted in the paper age. These three elements are open standards for file formats, open standards for interoperability, and open source for content repository and retrieval platforms.

Open standards for file formats ensure portability of the content artifact (often with relevant metadata or context) across authoring or viewing applications. The PDF/A standard, for example, emerged as an ISO-managed specification outside the control of any single vendor because many public sector agencies were reluctant to accept a proprietary format and risk losing the ability to preserve essential content for the long term. An open file standard is device independent (in both hardware and software), self-contained and self-documenting, free of file protections, publicly specified, and adopted widely enough to ensure its longevity.

As more industries adopt software to guide machinery and processes, from aerospace, automobiles, and navigation systems to the control systems and sensors that monitor our public and commercial infrastructure, we need to be able to preserve and rebuild these essential electronic systems. The lifespan of our planes, cars, railways, dams, and waterways is measured in years, if not decades. Preserving the code, manuals, and documentation for the systems that power our modern society is essential for repair, recompiling, updates, or translation.

Open standards for interoperability have had mixed success in the world of content management. However, a new OASIS standard ratified in May 2010 has revived optimism that open source and proprietary vendors alike can establish meaningful common ground for basic electronic document management functions. The new “Content Management Interoperability Services” (CMIS) standard is already being adopted by many established and emerging vendors, opening the door to intelligent and efficient harvesting of the information silos built inside enterprises over the last 10 to 20 years. With CMIS support in place, digital content can be used and consumed by business applications regardless of which content management repository a company has chosen to deploy. Content can flow to users when and where they need it to get work done.

But open standards need the safety net of open source to succeed over the long term as a viable approach to digital preservation. Access to the underlying code, so that the tools used to store, view, consume, and retrieve preserved content can be rebuilt, recompiled, or refreshed, is essential to letting future generations use and appreciate digital content.

Today’s digitally stored knowledge will have meaning beyond our own human lifespans. We all love to know where our institutions, families, and communities came from and how they've evolved. Let's not cheat our great-grandchildren out of that experience. Marketers sometimes use the buzzword “future-proofing” to explain to prospects and customers how open source can be a safety net against the whims of the merger-and-acquisition-crazed technology industry. Beyond the buzzword, let's think about the kinds of documents, images, recordings, and information we experience every day. It's up to us to choose open source and open standards to ensure this current era of information overload doesn't become known as the Dark Ages 2.0.

Opensource.com
