The Blender Conference has become a fantastic showcase not just of attractive art and animation, but also unconventional uses of Blender and open source software.

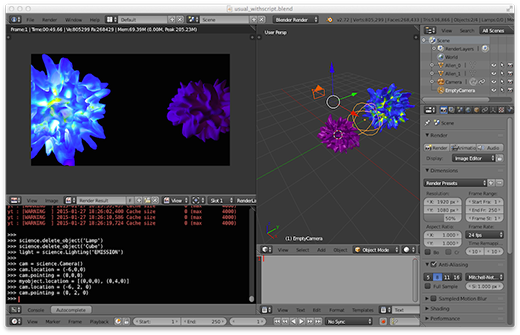

One of the talks that really caught my eye this year was Dr. Jill Naiman's, about her AstroBlend project. In short, she's created an add-on for Blender that helps visualize and analyze astrophysical simulations and data. She talked about using Blender on data snapshots that weigh in at 15-25 TB.

Jill is also a bit of a maker, focusing on "blinkie things" and wearable technology. Her slides are available on the AstroBlend website and animated videos produced with AstroBlend can be found on the AstroBlend YouTube channel.

On the face of it, Blender seems like a strange choice for visualizing astrophysics data. What were your reasons for choosing it over other packages or even rolling your own based on existing libraries?

There were a few things I liked about Blender (beyond the fact that starting this project I had no actual experience with 3D visualization and Blender was one of the first things to pop up on Google). When I started looking at what Blender could do, I got excited about the possibilities of combining art and science easily—for example, using an artistic model to put your simulation in scale or providing an artist with a physically motivated simulation of a galaxy, planet, etc.

This was all motivated by a cool public lecture I saw at a conference where the speaker had a bunch of interactive movies, which of course I wanted to be able to do. However, when I asked how he made them, he told me he just gave all of their data to their "million-dollar" studio, which, as a grad student, I had no access to. It seemed that Blender could provide a lot of these tools. It is of course free, so that piqued my initial interest. As I messed with it more, I realized that it could be easily meshed with another popular Python-based data analysis package, yt.

So now with yt+Blender you can directly access the data from multiple types of astrophysical codes. Currently, I'm building a GUI for astrophysical data that allows for loading of multiple datasets from different sorts of codes and direct interaction in 3D, which is something I haven't seen in other astrophysical visualization packages, so that is pretty neat. Finally, I'm a firm believer in not reinventing the wheel—Blender already had a lot of what I wanted in it, so I didn't have to spend a lot of the time I was supposed to be writing papers creating a whole new visualization code.

I see that AstroBlend has a public repository on Bitbucket. It's very cool that everything appears to be done in Python. Have you made changes to Blender's core to make AstroBlend possible? Is there anything that you'd like to see added to Blender's source that would make things in AstroBlend easier?

I have yet to modify the Blender source code. I'm sort of avoiding it as long as possible. The idea is to have something that is easy to use—a young scientist that has just started to generate 3D data can simply download the latest version of Blender, plop in AstroBlend, and they are off and analyzing their data. I wouldn't want them having to worry about having to find a "non-standard" build of Blender.

Hopefully this ease of access can be extended to be easy for a young artist just starting out as well, but admittedly I've been biased toward focusing on the scientist angle. I'd love to see some easier access to the volume rendering structures so that one can just load volume data directly from a simulation output file and push it to the voxel data structure in Blender. Right now I'm playing with a workaround that requires overwriting the voxel data structure with a ctypes structure, but that makes me a little nervous and it would be great to just pass a pointer to the volume data that is loaded in memory. I've also talked to the yt folks about how to better integrate their volume rendering with Blender's, and I might hit some roadblocks there just using Python, but I'm not sure about that one yet.
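Editor's note: to make the idea of pushing simulation data into a voxel structure a little more concrete, here is a minimal sketch of the file-based route rather than the in-memory ctypes workaround described above. It is not AstroBlend's code. The function names `normalize` and `pack_voxels` are hypothetical, and the byte layout (four little-endian 32-bit integers for x, y, z resolution and frame count, followed by 32-bit floats scaled to the 0-1 range with x varying fastest) is an assumption based on the Blender Voxel file format used by older voxel data textures, so check it against the Blender manual for your version.

```python
import struct


def normalize(values):
    """Linearly rescale a flat list of numbers to the range 0-1."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        # A constant field has no contrast; map everything to 0.
        return [0.0 for _ in values]
    return [(v - lo) / span for v in values]


def pack_voxels(grid):
    """Pack a nested list grid[z][y][x] into header + float32 bytes.

    Assumed layout: int32 nx, ny, nz, nframes, then nx*ny*nz floats,
    with x varying fastest, then y, then z.
    """
    nz, ny, nx = len(grid), len(grid[0]), len(grid[0][0])
    flat = [grid[z][y][x]
            for z in range(nz)
            for y in range(ny)
            for x in range(nx)]
    scaled = normalize(flat)
    header = struct.pack("<4i", nx, ny, nz, 1)  # a single frame
    body = struct.pack("<%df" % len(scaled), *scaled)
    return header + body
```

The resulting bytes could be written to disk with `open(path, "wb")` and pointed to from a voxel data texture. For real simulation output one would likely use NumPy arrays, and often take the logarithm of the density first, rather than nested lists.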

In addition to Python and Blender, are you using any other open source tools or libraries in conjunction with AstroBlend? For instance, there's the OpenVDB library for visualizing volumetric data.

Besides yt, which I mentioned a bit before, nothing else really. I've peeked at OpenVDB, as well as some WebGL stuff, but it's definitely on the "Hey, that's cool. I'll check it out when I have a minute," side of things, not the actively developing side of things.

Using the visualization tools you've created in AstroBlend, what kinds of interesting things have you been able to see or discover that might otherwise be difficult without it?

Admittedly, things are still in the development phase, so I've just started using it as a tool for papers and the like. I've predominately used it as a teaching tool until recently, and as a teaching tool it's been great because it allows students to quickly visualize something and learn the basic gist of what is going on in a relatively large and complicated simulation.

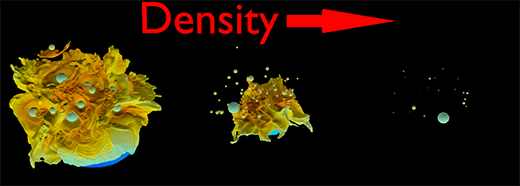

Right now, a student is finishing up a paper on how stellar winds interact in large clusters of stars to see how much material is retained (and how much we expect to observe in these systems). Early on we did some three-dimensional isodensity surfaces of her simulation and it was useful for seeing where shocks were forming in her cluster and where the hot vs. cold gas was residing. We are using a few of these surfaces for a figure in her upcoming paper, so that is exciting.

I've also used it to understand the overall context of a simulation I'm doing. For example, I combined someone's artistic model of a galaxy, some observational data, and my own simulation data to get a better picture of the dynamics of the dwarf galaxies that orbit our Milky Way. It was a great way to get at the scales of things that I find are hard to grasp in two-dimensional plots. I think when I finish up the GUI aspect, that will really be killer since it will allow for interaction with one's data in a 3D space in a novel way and should make some of the analysis plots easier to understand in a 3D context.

What's next for AstroBlend? Is it complete at this point, or are there additional features that you're interested in adding?

So not complete! I'd like to make it easier to install, either with or without having to install yt for direct interaction with the data. I think making it a Python package and a Blender add-on will do the trick, so I just have to get to it. I'll probably push for that first, and then finish up the GUI aspect, and then hopefully get some preliminary volume rendering going. I'd love to play around with incorporating some of yt's volume rendering as well. Oh, and Cycles! I need to support Cycles rendering. I guess there's a bunch of stuff. I have a long list. But in the near future, I think these few things are what I'll focus on.

Blender is a free and open source 3D creation suite. The Blender Conference is an annual event held in Amsterdam for developers, designers, and enthusiasts to learn more about Blender techniques, features, and tools.
