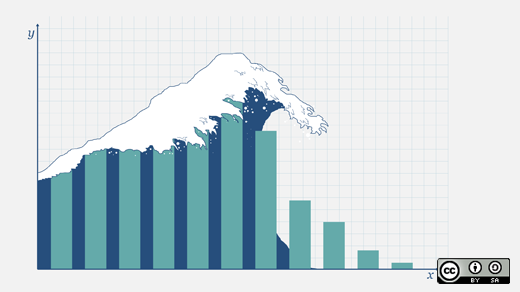

What can you learn from numbers alone? That is the question many in the open source community have grappled with when looking at comparative values of commits, committers, community sizes, and more between projects.

One of our community moderators, Robin Muilwijk, mentioned to me recently that he saw that OpenStack had just passed the 100,000 code reviews milestone. It started me thinking about what this milestone meant, whether it was truly significant, and what other milestones might be nearby that might say something about the project. In this case, the number doesn't reflect every commit made, because the reviews don't go back to the very beginning of the project, but is it still significant?

First, a disclaimer: If you're using statistics about a project as the sole barometer of a project's success or failure, you're probably doing it wrong. Numbers don't tell the whole story. Too often when trying to compare similar statistics from separate projects, nuances can be lost. And raw statistics are too susceptible to manipulation, intentional or not, to be taken without some context.

Having given that warning, I still think there's good value in project statistics. They say something about trajectory, and when used in conjunction with solid knowledge of why the numbers are what they are, they can tell a good bit about comparative success. And they can be inspiring. "Look what we've done," you can say to your community, as you provide them with the raw data about what they've created. They can also say something about the relative participation in a project, as with Chuck Dubuque's look at how to gauge the contributions of the various corporate contributors to OpenStack.

And at the very least, they're interesting to look at! So here are a few numbers about the OpenStack community's efforts. Are they high or low compared to your expectation? Are they meaningful or just fluff? Ultimately, that's up to you to decide.

1,766,546 lines of code. Sort of. These numbers are from Ohloh as of three months ago. More recent numbers are available by looking at each project individually, and these don't count related projects in StackForge. And then there's that old quote attributed to Bill Gates to consider: "Measuring programming progress by lines of code is like measuring aircraft building progress by weight."

51,181 followers on Twitter. What can you read into social media counts? Well, that depends. Are projects with more followers on social media more popular, or are they just more popular with the segment of the population more likely to use social media? With a project like OpenStack that isn't oriented towards consumer use, these likely represent folks who are actively interested in the project.

38,272 emails in the OpenStack developers listserv, up from around 10,000 a year ago. Of course, email isn't the only way that project communication and coordination happens, and, like the review count, this number doesn't necessarily measure activity from the very beginning of the project.

17,020 community members who have registered for membership with the OpenStack Foundation. Not every one of these is committing code, but likely a good chunk of those who take the time to sign up are actively participating in the community in some way.

4,500+ attendees of the most recent OpenStack Summit in Atlanta. This is at least a 50% increase from the roughly 3,000 who had attended the Portland Summit a year earlier.
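If you want to check growth figures like these yourself, the arithmetic is simple. Here is a minimal Python sketch that uses only the numbers quoted above; the helper name `percent_increase` is my own, and since the earlier figures are approximate ("roughly 3,000", "around 10,000") and 4,500 is a lower bound, the results are rough estimates rather than exact measurements.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the percentage change going from old to new."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100.0


# Summit attendance: roughly 3,000 in Portland, 4,500+ in Atlanta.
summit_growth = percent_increase(3000, 4500)

# Developer list emails: around 10,000 a year ago, 38,272 now.
list_growth = percent_increase(10000, 38272)

print(f"Summit attendance: {summit_growth:.1f}% increase")
print(f"Developer list emails: {list_growth:.1f}% increase")
```

Because 4,500 is a floor, the summit figure is where the "at least" in "at least a 50% increase" comes from.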

As with any article full of stats like these that update daily, all of these numbers are of course subject to change. It's just a snapshot in time. What other numbers are worth following? Let us know in the comments.

Looking for more? Scott Wilson looked at relative values of project statistics in a previous piece entitled "How to evaluate the sustainability of an open source project" where he spoke to trends in code, community, and release.
