Sustainable open source projects are those that are capable of supporting themselves. Simply put, they are able to meet their ongoing costs.

However, from the viewpoint of selection and procurement, sustainability also means that the project is capable of delivering improvements and fixing problems with its products in a timely manner, and that the project itself has a reasonable prospect of continuing into the future.

Elsewhere on our site you can find articles describing some of the many formal approaches to evaluating open source software as part of the Software Sustainability Maturity Model.

However, there are also informal methods for evaluating the sustainability of an open source project that may be useful where investment in a formal methodology is not justified, for example if the number of software evaluations your organisation undertakes is fairly small and infrequent.

In this document we’ll take a look at some of the key indicators of sustainability you can evaluate using basic web research techniques and publicly accessible information: code activity, release history, user community, longevity and ecosystem.

Note that these are by no means the only factors to look at when evaluating software; see also OSS Watch's Top Tips for Selecting Open Source Software and Decision Factors for Open Source Software Procurement.

Code activity: contributions and contributors

For a project to be sustainable it must have contributors, and its codebase needs to be evolving. You can track this by examining the pattern of contributions in the project’s revision control system. One handy tool for doing this is the Ohloh website by Black Duck Software.

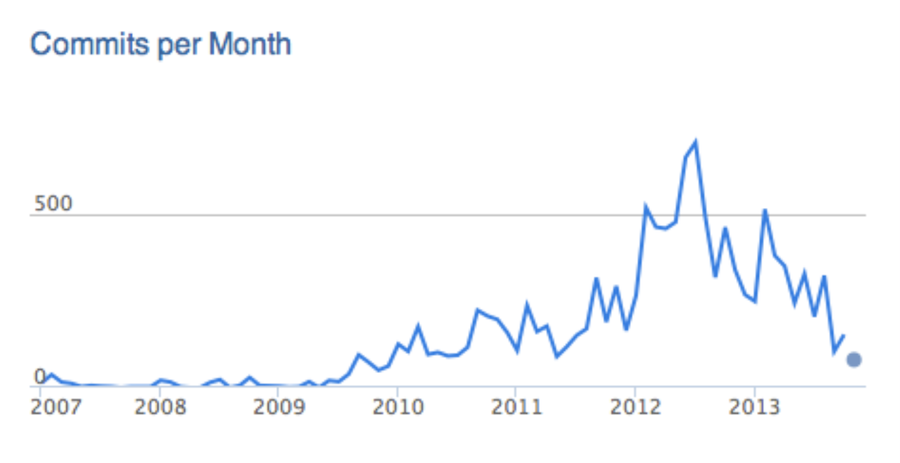

Here’s the contribution history for CKAN, for example:

Software projects tend to go through distinct cycles of activity; there is often a flurry of development at the start of a project as the framework and main features are put in place, then the pace tends to slow down as the project matures and effort shifts onto fixing bugs and improving performance. Later on there may be another flurry of activity as there is a major feature addition, or a move onto a new framework.

So it’s quite normal to see fluctuations in the number of contributions to the codebase of a project, and for some projects a declining number of contributions is actually a good sign if it indicates stability and maturity.

Again, looking at CKAN we can compare the graph above with the measure of the number of contributors to the project:

So the picture here is that the overall number of developers contributing to the project is steadily increasing, but the pace of development is slowing down after a peak. This is a good sign as it indicates that the project is picking up developers even as it moves into a more stable phase.

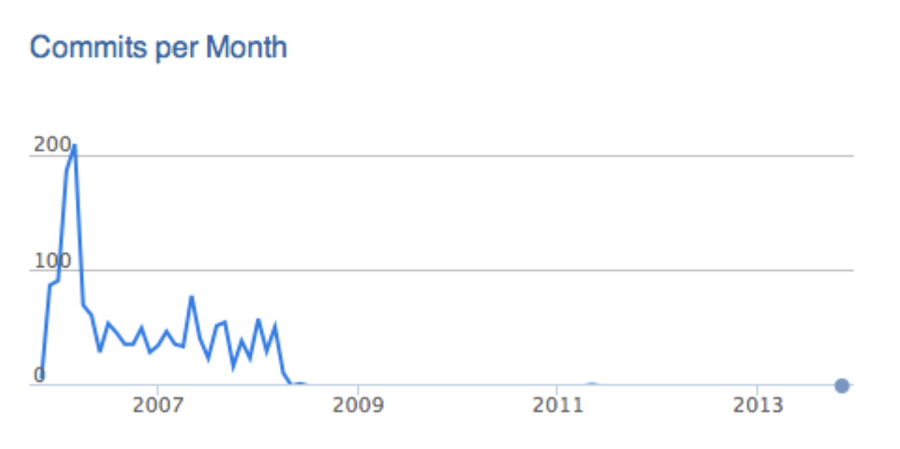

However, here’s another example, HtmlCleaner.

This is a project that has been "rebooted" after lapsing into inactivity for about a year. The project itself is quite mature. However, anyone evaluating the project in 2012 would have been correct to be concerned for its sustainability.

Finally, here is a good example of the limitations of this approach:

From this it looks as if the project pretty much died towards the end of 2008 and has seen no activity since. However, the project (OpenSAML in this case) is not in fact dead! The project simply changed the location of its source code repository and this hadn’t yet been reflected in its profile on the Ohloh website.

If you take a look at the project’s own website you can follow a link to its repository and see code contributions from well after this date. So it’s important not to take these kinds of graphs at face value, but to check whether they reflect what is happening in the revision control system itself.
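One way to check the repository directly is to summarise its commit log yourself. The following is a minimal sketch rather than a definitive tool: it assumes the project uses Git and that you have exported the history with something like `git log --date=short --pretty=format:'%ad|%ae'`, which gives one line per commit containing the author date and author email. The function name `monthly_activity` and the sample addresses are hypothetical.

```python
from collections import defaultdict


def monthly_activity(log_text):
    """Summarise exported commit log lines by month.

    Each line is assumed to look like 'YYYY-MM-DD|author@example.org'.
    Returns {'YYYY-MM': (number of commits, number of distinct authors)}.
    """
    commits = defaultdict(int)
    authors = defaultdict(set)
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines in the export
        day, _, author = line.partition("|")
        month = day[:7]  # 'YYYY-MM'
        commits[month] += 1
        authors[month].add(author.lower())
    return {m: (commits[m], len(authors[m])) for m in sorted(commits)}


# Hypothetical sample data standing in for real `git log` output.
sample = """\
2013-01-04|alice@example.org
2013-01-19|bob@example.org
2013-01-22|alice@example.org
2013-02-02|carol@example.org
"""

for month, (n_commits, n_authors) in monthly_activity(sample).items():
    print(month, n_commits, n_authors)
```

Comparing the two numbers per month gives a rough equivalent of the contribution and contributor graphs discussed above, taken straight from the repository rather than from a third-party profile.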

Release history

Projects take very different approaches to making releases, so it’s hard to generalise between projects. Some may commit to make regular releases, and if there are sustainability issues this will be evident in disruptions to the cycle. However, many projects make releases as and when they feel ready, and so releases will not follow any particular pattern.

Because of this the release history can tell a less useful story than looking at the developer community that creates the releases.

One exception is if there have been no releases for some time despite a good level of developer activity. This may indicate governance issues within the project that are preventing it from making releases. The only way to find out is to go into the project communications and see if there is an issue.

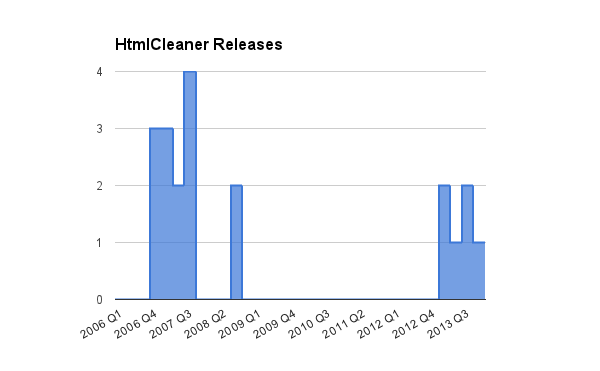

Below is the release history for HtmlCleaner from 2006 to 2013. This largely mirrors the activity pattern in the project, but does also indicate that releases have been made on a more regular basis over the past year.

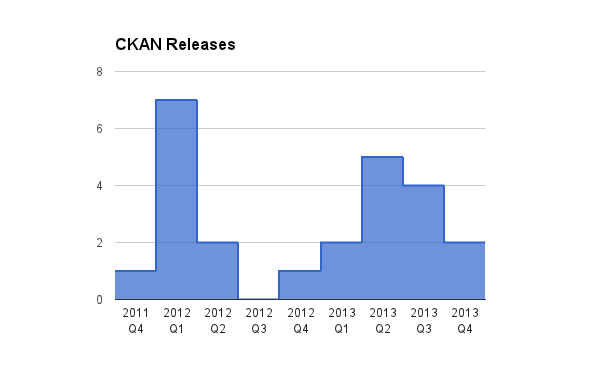

Here is the release history for CKAN for 2011-2013, showing that the project made a new release in 8 of the last 9 quarters:

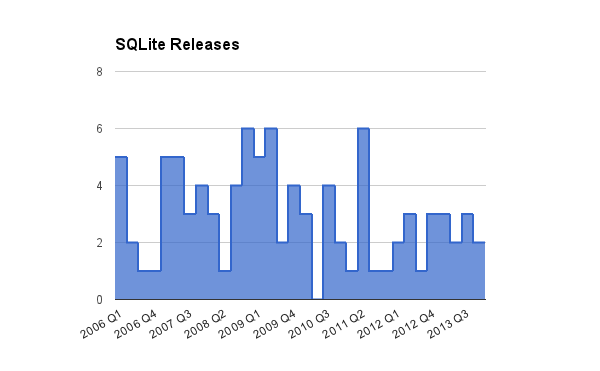

Similarly, the SQLite project has made frequent releases from 2006-2013:

Note that, unlike activity data, release histories often aren’t available in a way that makes them easy to analyze. You may need to input lists from web pages, or obtain the dates when source code was “tagged” with a particular version in the source control system.
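If the project keeps its code in Git, tag dates can be turned into a simple "releases per quarter" figure. The sketch below is illustrative only: it assumes release tags were exported with something like `git for-each-ref --sort=creatordate --format='%(creatordate:short) %(refname:short)' refs/tags`, and the function names and sample tags are hypothetical rather than taken from any of the projects mentioned here.

```python
from datetime import date


def quarter_of(d):
    """Return (year, quarter) for a date, with quarters numbered 1 to 4."""
    return (d.year, (d.month - 1) // 3 + 1)


def parse_tag_lines(text):
    """Parse lines of the form 'YYYY-MM-DD tagname' into a list of dates."""
    return [date.fromisoformat(line.split()[0])
            for line in text.splitlines() if line.strip()]


def quarters_with_releases(release_dates, start, end):
    """Count quarters between start and end (inclusive) that saw a release.

    Returns (quarters with at least one release, total quarters in range).
    """
    first, last = quarter_of(start), quarter_of(end)
    released = {quarter_of(d) for d in release_dates
                if first <= quarter_of(d) <= last}
    total = 0
    year, q = first
    while (year, q) <= last:
        total += 1
        q += 1
        if q > 4:  # roll over into the next year
            year, q = year + 1, 1
    return len(released), total


# Hypothetical tag export: two releases in Q1, none in Q2, one each in Q3 and Q4.
tags = """\
2011-01-15 v1.0
2011-02-20 v1.0.1
2011-08-01 v1.1
2011-11-30 v1.2
"""

with_release, total = quarters_with_releases(
    parse_tag_lines(tags), date(2011, 1, 1), date(2011, 12, 31))
print(f"{with_release} of {total} quarters had a release")
```

Bear in mind that not every tag is a release and not every release is tagged, so the result is worth checking against the project's own download or news pages.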

User community

Software isn’t sustainable without users. Users drive the functionality, identify the bugs, and shape the direction of a project to meet their needs. It’s often not obvious how to determine the size and investment of the user community in a software project, as the engagement tends to vary depending on the kind of project.

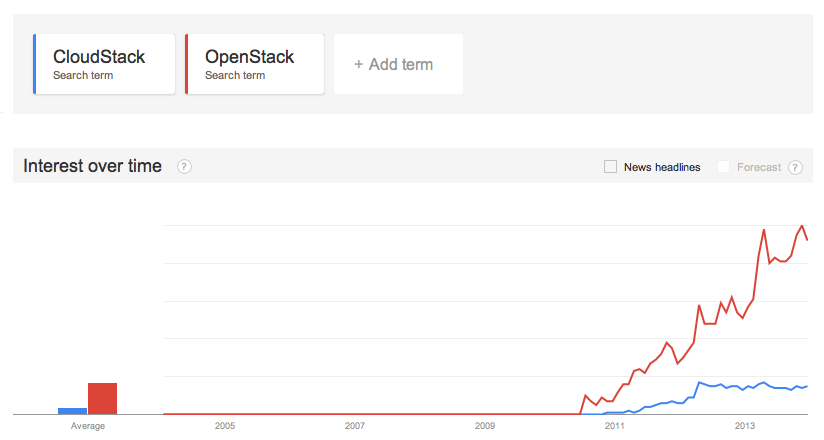

A good starting point is to use a search engine to get an idea of who is talking about the project. A useful tool is Google Trends, which lets you see how many people are searching for information about a topic. For example, we can compare searches for "CloudStack" vs. "OpenStack":

You can also use search engines to uncover blog posts and articles discussing the project. Similarly, you can follow the project’s hashtag on Twitter or related activity on LinkedIn, Facebook and Google+. Does the project have plenty of "fans?" Or does it generate plenty of tweets from users?

Note that even if there are lots of complaints from users on social media this isn’t necessarily a negative sign – it indicates at the very least there are enough people who care enough about the product to use it even though they have a problem with it!

Another tool to use is the project’s issue tracker. A healthy project should have a good number of issues with it – though this may sound a bit counter-intuitive. Basically, all software has bugs and areas for improvement. A healthy user community will continually uncover those bugs and identify the areas where the software can improve. Of course, some projects are very mature and may have relatively little activity on bug trackers.

For newer projects, however, an empty bug tracker can indicate a fantastically responsive developer community that closes issues as soon as they appear, but it more likely indicates a project without enough users around to find any problems with it.

Again, be careful about false negatives: there may be no bugs visible because the project has moved from using one system to another at some point. For example, ePrints has its legacy issues in Trac, but new issues are reported on GitHub.

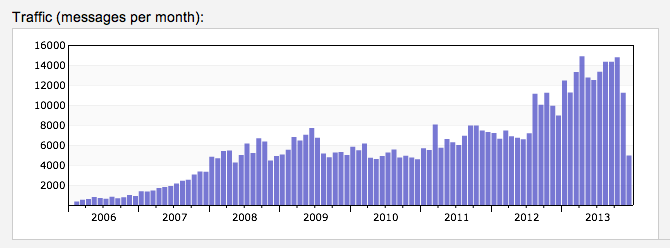

You can also look at user community tools provided by the project to get an idea of sustainability, such as user forums and mailing lists. Many of these provide built-in analytics, or you can use third-party sites such as Markmail.

For example, if we look at messages on the Hadoop mailing lists on Markmail, you can see the increase over time of mailing list activity for the project:

Again, be careful as communities can easily move from one tool to another – for example there may be a legacy mailing list from when the project started with no posts after a certain date, but new users may actually be interacting with the project via its Facebook or Google+ page.
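Where a list archive can be downloaded, a rough activity trend can also be derived without a third-party site. The following sketch assumes you have already collected the `Date` header of each message (for example by reading an mbox archive with Python's standard `mailbox` module); the function name `messages_per_year` and the sample headers are hypothetical.

```python
from collections import Counter
from email.utils import parsedate_to_datetime


def messages_per_year(date_headers):
    """Count messages per calendar year from RFC 2822 style Date headers.

    Headers that cannot be parsed are skipped rather than guessed at.
    """
    counts = Counter()
    for header in date_headers:
        try:
            counts[parsedate_to_datetime(header).year] += 1
        except (TypeError, ValueError):
            continue  # malformed or missing date
    return dict(sorted(counts.items()))


# Hypothetical sample headers standing in for a real archive.
headers = [
    "Mon, 05 Mar 2012 09:00:00 +0000",
    "Mon, 07 Jan 2013 10:15:00 +0000",
    "Fri, 14 Jun 2013 16:30:00 +0100",
    "not a date",
]

print(messages_per_year(headers))
```

A falling count on one list is only a prompt to look further, since the conversation may simply have moved to another channel.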

Taken together, this should give you a reasonable idea of the health of a user community. You can take a formal approach of actually deriving numbers based on these various tools, but given the diversity of sources a qualitative approach seems more realistic.

Longevity

Projects sometimes follow a natural cycle: they are created, go through a flurry of high activity, settle into a steady productive phase, and eventually die as they are replaced by new projects in the same space with a more powerful technology base.

However, some open source projects are also fantastically long lived, such as the Apache web server.

When this happens, the effect tends to be somewhat self-sustaining as more conservative organisations adopt the software, and retain use of it for longer, resulting in longer-term investment in its sustainability.

If a project has survived long enough to weather several technology replacement cycles, this is a good indication it’s going to be around for years to come. The warning sign is a steady migration from the project’s community to another; eventually even a large, mature project will start to suffer if this happens.

For newer projects it’s harder to judge; if they are in the phase of rapid development at the onset of the project there is often no real indication of whether the project will fizzle out and be replaced by the next big thing, or settle into a long and productive life.

For example, there was some debate when Node.js first appeared as to whether the very lively initial "buzz" around the project was going to be short-lived. However, the project is now three years old and shows signs of moving into a more stable phase.

For newer projects, the best course of action may be to mitigate the risks rather than avoid them; for example, developing a good exit strategy, investing in interoperability and open standards supported by the project, and conducting regular reviews of how the project is evolving compared to its competitors.

Ecosystem

As well as looking at the developers and users around a project, it’s also important to consider the companies that engage with it. This includes hosting and support providers, consultancy and customisation services, and companies that bundle the software with other products as part of solutions.

The ecosystem around a project is an important indicator as clearly such companies have a strong interest in the sustainability of the software themselves.

The metaphor sometimes used is that of a "coral reef": a sustainable project accumulates partners and providers of increasing specialisation. Conversely, if there are signs of service companies moving away from supporting the project this may be an indicator of underlying problems.

Not all software projects by their nature lend themselves to this kind of ecosystem, but where there are companies providing services it’s useful to look at their diversity. For example, are they only operating in one or two countries? Are they a mix of smaller and larger companies? Are they all targeting a single industry sector?

Putting it all together

These are by no means the only factors when considering software sustainability, and if you are conducting a large number of evaluations then adopting a formal methodology and set of tools may be a worthwhile investment. However, for ad-hoc evaluation this briefing note should provide a good starting point.

Originally posted on the OSS Watch website. Reposted under Creative Commons. Authored by Scott Wilson.
