The first task any accomplished technical writer has to do is write for the audience. This task may sound simple, but when I thought about people living all over the world, I wondered: Can they read our documentation? Readability is something that has been studied for years, and what follows is a brief summary of what research shows.

Studies show that people respond to information that they can easily understand. The question is: Are we writing content that the average person can easily read and understand? If people are not connecting with our content, one reason could be that we are writing "over their heads," which happens more often than you might think. In an effort to sound superior, intelligent, or expert in their fields, many people overwrite content or use big words to make the most of the printed space.

A simple way to check your document to see whether it is easy to read is to use a readability test. Many different tests have been created for this purpose, and three of the most popular are:

Popular readability tests

Flesch Reading Ease Test

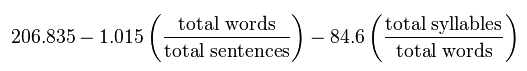

Rudolf Flesch, author of Why Johnny Can't Read: And What You Can Do About It, created the Flesch Reading Ease Test as a way to further advance his belief that American teachers needed to return to teaching phonics rather than sight reading (whole word literacy). His work and advocacy for reading and phonics were the inspiration for Dr. Seuss to write The Cat in the Hat. This test tells us how easy a text is to read. The algorithm is as follows:

Figure via Wikipedia. CC BY-SA 3.0

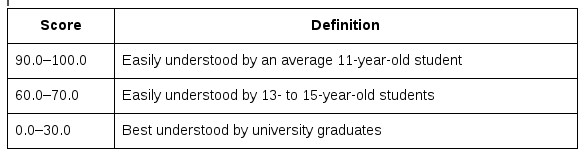
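In case the figure does not display, the published formula is 206.835 - 1.015 × (total words / total sentences) - 84.6 × (total syllables / total words). Here is a minimal Python sketch of that calculation. The function name and the sample counts are my own, made up for illustration, and the sketch assumes you have already counted the words, sentences, and syllables.

```python
def flesch_reading_ease(total_words: int, total_sentences: int, total_syllables: int) -> float:
    """Flesch Reading Ease from raw counts (a higher score means easier text)."""
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


# Hypothetical counts for a short passage: 100 words, 5 sentences, 150 syllables.
score = flesch_reading_ease(100, 5, 150)
print(round(score, 1))  # prints 59.6
```

Longer sentences and longer words both pull the score down, which is why the two ratios are subtracted.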

The resulting score is interpreted as follows:

Table via Wikipedia. CC BY-SA 3.0

What does this mean?

- The lower the score, the harder the text is to read

- 65 is the "Plain English" rating

How does this score measure up to well-known publications? [1]

- Reader's Digest: 65

- Time Magazine: 52

- Harvard Law Review: <40

Flesch-Kincaid Grade Level Readability Test

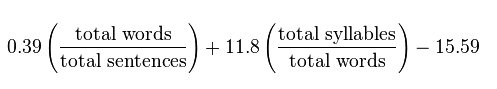

The Flesch-Kincaid reading test is the result of a collaboration between Rudolf Flesch (mentioned above) and J. Peter Kincaid, an educator and scientist who spent his time working in academia or researching with the U.S. Navy. Kincaid developed his version of the readability test while under contract with the Navy in an effort to estimate the difficulty of technical manuals. The Flesch-Kincaid Grade Level Readability Test translates the score to a United States grade level, which makes it easier to judge whether the material is readable by others. The algorithm is as follows:

Figure via Wikipedia. CC BY-SA 3.0
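In case the figure does not display, the published formula is 0.39 × (total words / total sentences) + 11.8 × (total syllables / total words) - 15.59. A minimal Python sketch follows. The function name and the sample counts are hypothetical, and the sketch assumes the counts are already known.

```python
def flesch_kincaid_grade(total_words: int, total_sentences: int, total_syllables: int) -> float:
    """Flesch-Kincaid Grade Level from raw counts (approximate U.S. school grade)."""
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


# Hypothetical counts for a short passage: 100 words, 5 sentences, 150 syllables.
grade = flesch_kincaid_grade(100, 5, 150)
print(round(grade, 2))  # prints 9.91
```

The inputs are the same two ratios used by the Flesch Reading Ease Test, but here they are added, so a harder text gets a higher number.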

The result corresponds to a U.S. grade level, so once the score is calculated, we know who can understand our writing. For example, President Obama's 2012 State of the Union address has a grade level of 8.5, whereas the Affordable Care Act has a grade level of 13.4 (university or higher). The readability results for a few popular books may surprise you:

- Goodnight Moon (a children's book by Margaret Wise Brown): 2.8

- The Old Man and the Sea (by American author Ernest Hemingway): 4.1

- Harry Potter and the Deathly Hallows (final novel in J.K. Rowling's Harry Potter series): 7.8

- Good to Great: Why Some Companies Make the Leap... and Others Don't (a management book by James C. Collins): 10.4

Gunning Fog Index

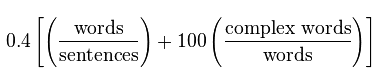

The Gunning Fog Index was created in 1952. The algorithm is as follows:

Figure via Wikipedia. CC BY-SA 3.0
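In case the figure does not display, the published formula is 0.4 × [(words / sentences) + 100 × (complex words / words)], where a complex word is usually taken to be one with three or more syllables. A minimal Python sketch follows. The function name and the sample counts are hypothetical, and deciding which words count as complex is left to the caller.

```python
def gunning_fog(total_words: int, total_sentences: int, complex_words: int) -> float:
    """Gunning Fog Index from raw counts (approximate years of schooling needed)."""
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    words_per_sentence = total_words / total_sentences
    percent_complex = 100 * complex_words / total_words
    return 0.4 * (words_per_sentence + percent_complex)


# Hypothetical counts: 100 words, 5 sentences, 10 words of three or more syllables.
fog = gunning_fog(100, 5, 10)
print(round(fog, 1))  # prints 12.0
```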

This index is not perfect, because some words (such as university) are complex but easy to understand, whereas some short words (such as boon) may be harder to understand. With that caveat, the results can be interpreted as follows [2]:

- A fog score of >12 is best for U.S. high school graduates

- Between 8-12 (closer to 8) is ideal

- <8 is near universal understanding

Why should I care about the readability of my writing?

If our writing is too hard to read, then no one will want to read it. The sad truth is that approximately 50% of Americans read at an eighth-grade level. The higher the grade level of our writing, the fewer people can read it. If we struggle to read something, our experience with the content will be negative, and this negative experience makes us less likely to recommend the content to someone else. Have you ever recommended a book you did not enjoy reading? The same goes for documentation.

How do I calculate the readability of my writing?

There are several ways to calculate readability. The easiest way is to do it within a word processor or editing tool. For example, Publican is a publishing tool based on DocBook XML. Publican version 4.0.0 added a Flesch-Kincaid Statistics Info feature, which lets users run the following command:

$ publican report --quiet

This will generate a readability report.

If you are using vim as your text editor, vim-readability plug-ins can be downloaded and installed from GitHub (thanks to Peter Ondrejka). A similar plug-in, gulpease, is also available for gedit. To check readability without using a plug-in, copy and paste the text into Readability-Score.com.
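If you would rather script a quick check yourself, the hard part is counting. Here is a rough Python sketch that counts words, sentences, and syllables in a piece of text and feeds them into the Flesch Reading Ease formula. The function names are my own, and the syllable counter is only a vowel-group heuristic that I am assuming is good enough for a ballpark figure. It is not what Publican or the plug-ins above use, so expect its numbers to differ from theirs.

```python
import re


def count_syllables(word: str) -> int:
    """Rough estimate: count groups of consecutive vowels, with a minimum of one."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Heuristic: a trailing "e" is usually silent ("make"), except in "-le" ("table").
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_counts(text: str):
    """Return (words, sentences, syllables) for a piece of plain English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    return len(words), len(sentences), syllables


sample = "The cat sat on the mat. It was happy."
words, sentences, syllables = text_counts(sample)
print(words, sentences, syllables)  # prints 9 2 10

# Flesch Reading Ease from the counts above.
ease = 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)
print(round(ease, 1))
```

Very short, simple samples like this one can score above 100, so try it on a few paragraphs of real documentation for a more meaningful number.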

Final thoughts

Keep it simple, sweetheart! The easier our documentation is to understand, the more people will use it. In case you're curious, this article has a readability of:

- Flesch: 68.5

- Flesch-Kincaid: 6.9

- Gunning Fog: 9.3

Once we know the readability of our writing, we can simplify it, if necessary. I will outline ideas for doing that in my next article. Stay tuned!
