So you started your own open source project. You had a great idea, you actually took the time and energy to create a project on GitHub, and you even went so far as to set the right frameworks in place to allow other people to contribute to your community. Awesome!

So how is that going? Is the project succeeding? Can you see improvements from week to week? Are you getting closer to your goal? Do you have a goal? If these questions make you shuffle around awkwardly in your seat, read on. If you answered all of the questions confidently, read on anyway, because you might find yourself challenging some of your previous assumptions.

As a community manager of a relatively young open source project, I want to share some of the experiences and methods we use to measure our project's success. These ideas and techniques are a work in progress. I hope you can pick up some ideas from what we do and, better yet, share some of your own ideas with us.

Is it all about contributions?

Here's a fact. Most open source projects have very few contributors. Donnie Berkholz wrote a great blog post analyzing about 50,000 open source projects with active communities. According to his analysis, "The vast majority of projects are tiny, having just one or a few contributors." Consider these statistics:

- 87% of projects have five or fewer committers per year.

- Merely 1% of projects have 50 or more committers per year, and a scant 0.1% have 200 or more.

So, assuming you're in the 99% of projects that have fewer than 50 committers a year, you might want other metrics to tell you whether your project is on a good trajectory.

AARRR! Introducing Pirate Metrics

Users engage with your project on many different levels. There's the guy who heard about your project in a blog post and stopped there. And then there's the crazy developer who contributes code to your community three times a day. Between those two extremes lie multiple levels of engagement.

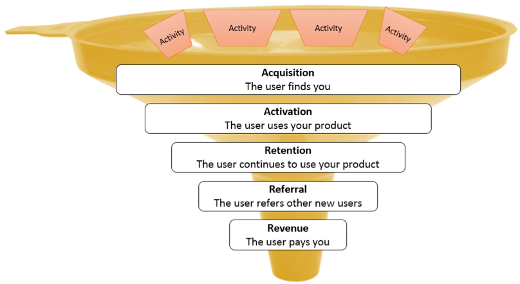

Pirate Metrics is a simple and effective framework for measuring the success of your project at each significant level of user engagement. It defines five general levels of user engagement: acquisition, activation, retention, referral, and revenue.

At each level of engagement, you carry out activities that encourage users to engage further and move on to the next level. An important part of this process is understanding which activities are actually effective at driving more engagement.

Adopting Pirate Metrics to measure open source success

Pirate Metrics is typically used by business startups whose goal is generally to increase revenue. However, there is no rule that says revenue has to mean money. Maybe the ultimate payment is a code contribution to your software or someone becoming an active member of your community.

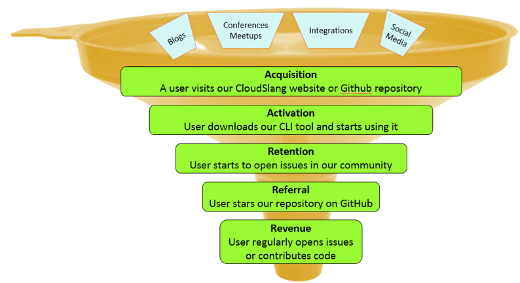

My team at CloudSlang adapted these Pirate Metrics definitions to the world of open source, and we use them to measure our success at each level of engagement. Here are examples of how we define success at each level of engagement:

Notice the activities that are feeding into the engagement funnel. When we publish a blog post or present something at a meetup, we are interested in understanding the impact of these activities on user engagement. For example, a cool blog post that mentions your product may lead to a lot of unique site visits (acquisition), but those same visitors may be less interested in downloading your product (activation). On the other hand, when you present your project at a very relevant online meetup, you may have fewer website visits (acquisition), but a much higher percentage of downloads of your product (activation).
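To make the blog-post-versus-meetup comparison concrete, here is a minimal Python sketch that computes the acquisition-to-activation conversion rate for each outreach activity. It assumes you can attribute unique visits and downloads to a specific activity (for example, through tracked links), which is itself an assumption. The `ActivityFunnel` name and all the counts are hypothetical, chosen only to illustrate the arithmetic; they are not CloudSlang data.

```python
from dataclasses import dataclass


@dataclass
class ActivityFunnel:
    """Counts attributed to one outreach activity (hypothetical example data)."""
    name: str
    unique_visits: int  # acquisition
    downloads: int      # activation

    def activation_rate(self) -> float:
        """Fraction of acquired visitors who went on to download (0.0 if no visits)."""
        if self.unique_visits == 0:
            return 0.0
        return self.downloads / self.unique_visits


# Hypothetical numbers, for illustration only.
blog_post = ActivityFunnel("blog post", unique_visits=2000, downloads=40)
meetup_talk = ActivityFunnel("meetup talk", unique_visits=300, downloads=45)

for activity in (blog_post, meetup_talk):
    print(f"{activity.name}: {activity.unique_visits} visits, "
          f"{activity.activation_rate():.1%} activation")
```

With these made-up numbers, the blog post brings in far more visits, but the meetup talk converts a much larger share of its visitors into downloads, which is the pattern described above.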

Measure and learn

Each week, we look at multiple metrics at each level of engagement and record the data (e.g., number of downloads). In parallel, we record the outreach activities we carried out that same week (e.g., blog posts or new releases). This way, we can measure ourselves every week and learn which activities are effective in increasing engagement in our project.
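A weekly log like this can be kept in something as simple as a spreadsheet. As a rough illustration only, the Python sketch below stores each week's metrics alongside that week's outreach activities and lines up the week-over-week change in one metric with the activities from the same week. The `WeeklyRecord` and `week_over_week` names, the metric keys, and every number are hypothetical; this is not the tooling we actually use.

```python
from dataclasses import dataclass, field


@dataclass
class WeeklyRecord:
    """One week of engagement metrics plus the outreach done that week."""
    week: str
    metrics: dict[str, int]  # e.g. {"downloads": 120}
    activities: list[str] = field(default_factory=list)


def week_over_week(records: list[WeeklyRecord], metric: str) -> list[tuple[str, int, list[str]]]:
    """Return (week, change in `metric` vs. previous week, activities) for each week after the first."""
    changes = []
    for prev, curr in zip(records, records[1:]):
        delta = curr.metrics.get(metric, 0) - prev.metrics.get(metric, 0)
        changes.append((curr.week, delta, curr.activities))
    return changes


# Hypothetical log, for illustration only.
log = [
    WeeklyRecord("week 1", {"unique_visits": 500, "downloads": 30}),
    WeeklyRecord("week 2", {"unique_visits": 1400, "downloads": 36}, ["blog post"]),
    WeeklyRecord("week 3", {"unique_visits": 650, "downloads": 70}, ["meetup talk", "new release"]),
]

for week, delta, activities in week_over_week(log, "downloads"):
    print(f"{week}: downloads {delta:+d} ({', '.join(activities) or 'no outreach'})")
```

Keeping the activities in the same record as the metrics is what makes it easy to see which outreach lines up with a jump at a given level. A change that coincides with an activity is a hint, not proof that the activity caused it.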

Please share your feedback

Like I said, this methodology is a work in progress. I'd be really interested in hearing how other open source projects approach this subject. Please leave your feedback in the comments section, and together we can create a new generation of open source pirates.
