I have a podcast on which I chat with both Red Hat colleagues and a variety of industry experts on topics from cloud to DevOps to containers to IoT to open source. Over time, I've gotten the recording and editing process pretty streamlined. When it comes to the mechanics of actually putting the podcast online, however, there are a lot of fussy little steps that need to be followed precisely. I'm sure that any sysadmins reading this are already going "You need a script!" and they'd be exactly right.

In this article, I'm going to take you through a Python script that I wrote to largely automate the posting of a podcast after it's been edited. The script doesn't do everything. I still need to enter episode-specific information for the script to apply, and I write a blog post by hand. (I used to use the script to create a stub for my blog, but there are enough manual steps needed for that part of the operation that it didn't buy me anything.) Still, the script handles a lot of fiddly little steps that are otherwise time-consuming and error-prone.

I'll warn you going in that this is a fairly bare-bones program that I wrote, starting several years ago, for my specific workflow. You will want to tailor it to your needs. Additionally, although I cleaned up the code a bit for the purposes of this article, it doesn't contain a whole lot of input or error checking, and its user interface is quite basic.

This script does six things. It:

- provides an interface for the user to enter the episode title, subtitle, and summary;

- gets information (such as duration) from an MP3 file;

- updates the XML podcast feed file;

- concatenates the original edited MP3 file with intro and outro segments;

- creates an OGG file version;

- and uploads XML, MP3, and OGG files to Amazon S3 and makes them public.

podcast-python script

The podcast-python script is available on GitHub if you would like to download the whole thing to refer to while reading this article.

Before diving in, a little housekeeping. We'll use boto for the Amazon Web Services S3 interface where we'll be storing the files needed to make the podcast publicly available. We'll use mpeg1audio to retrieve the metadata from the MP3 file. Finally, we'll use pydub as the interface to manipulate the audio files, which requires ffmpeg to be installed on your system.
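Because pydub relies on ffmpeg to decode and encode MP3 and OGG audio, a missing ffmpeg usually shows up later as a confusing export error. Here is a minimal preflight check. It is a sketch that assumes ffmpeg (rather than pydub's older avconv fallback) only needs to be on your PATH. The helper name is hypothetical and is not part of the script.

```python
import shutil

def missing_tools(tools=("ffmpeg",)):
    """Return the names in `tools` that cannot be found on the PATH.

    Hypothetical preflight helper: pydub hands MP3/OGG decoding and encoding
    to ffmpeg, so checking first gives a clearer error than a failed export.
    """
    return [tool for tool in tools if shutil.which(tool) is None]
```

You could call missing_tools() at startup and exit with a message if the returned list is not empty.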

You now need to create a text file with the information for your podcast as a whole. This does not change as you add episodes. The example below is from my Cloudy Chat podcast.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<rss xmlns:itunes="http://www.itunes.com/dtds/podcast-1.0.dtd" version="2.0">
<channel>
  <title>Cloudy Chat</title>
  <link>https://www.bitmasons.com</link>
  <language>en-us</language>
  <copyright>℗ &amp; © 2017, Gordon Haff</copyright>
  <itunes:subtitle>Industry experts talk cloud computing</itunes:subtitle>
  <itunes:author>Gordon Haff</itunes:author>
  <itunes:summary>Information technology today is at the explosive intersection of major trends that are fundamentally changing how we do computing and ultimately interact with the world. Longtime industry expert, pundit, and now Red Hat cloud evangelist Gordon Haff examines these changes through conversations with leading technologists and visionaries.</itunes:summary>
  <description>Industry experts talk cloud computing, DevOps, IoT, containers, and more.</description>
  <itunes:owner>
    <itunes:name>Gordon Haff</itunes:name>
    <itunes:email>REDACTED@gmail.com</itunes:email>
  </itunes:owner>
  <itunes:image href="https://s3.amazonaws.com/grhpodcasts/cloudychat300.jpg" />
  <itunes:category text="Technology" />
  <itunes:explicit>no</itunes:explicit>
```

You then need a second text file that contains the XML for each existing item (i.e., episode) plus a couple of additional lines. If you don't have any existing episodes, the file will look like this:

```xml
</channel>
</rss>
```

This script builds your podcast feed file by concatenating the header text with the XML for the new episode and then appending the second text file. It also prepends the new item to that second text file so that it's there when you add another new episode.
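The assembly described in the previous paragraph comes down to two writes. Here is a hedged sketch of that logic using only the standard library. The function and parameter names are hypothetical, and it assumes all three pieces are UTF-8 text. It builds the full feed from the header, the new item, and the existing items, and then prepends the new item to the items file.

```python
from pathlib import Path

def publish_item(header: Path, items: Path, new_item: str, feed: Path) -> None:
    """Rebuild the feed and record the new episode for the next run.

    header   -- channel-level XML (everything before the first <item>)
    items    -- existing <item> entries followed by </channel></rss>
    new_item -- the XML for the new <item> ... </item>
    feed     -- the feed file that gets uploaded
    """
    header_text = header.read_text(encoding="utf-8")
    items_text = items.read_text(encoding="utf-8")

    # Feed = header + new episode + all older episodes (and the closing tags).
    feed.write_text(header_text + new_item + "\n" + items_text, encoding="utf-8")

    # Prepend the new episode so the next run includes it automatically.
    items.write_text(new_item + "\n" + items_text, encoding="utf-8")
```

Because each run prepends one item, the newest episode always comes first in the feed, which is the order podcast clients typically expect.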

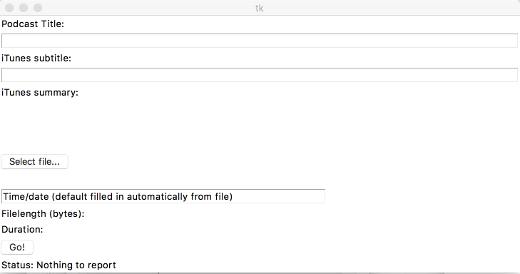

The program uses TkInter, a thin object-oriented layer on top of Tcl/Tk, as its GUI. This is where you'll enter your podcast title, subtitle, and summary in addition to selecting the MP3 file that you'll be uploading. It runs as the main program loop and looks like the following screenshot:

This is built using the following code. (You should probably use newer TkInter themed widgets but I've just never updated to a prettier interface.)

```python
from tkinter import *  # Python 2: from Tkinter import *

# open_file_dialog() and do_stuff() are defined elsewhere in the script.
root = Tk()

Label(root, text="Podcast Title:").grid(row=1, sticky=W)

# <Some interface building code omitted>

Button(root, text='Select file...', command=open_file_dialog).grid(row=9, column=0, sticky=W)

v = StringVar()
Label(root, textvariable=v, justify=LEFT, fg="blue").grid(row=10, sticky=W)

TimestampEntry = Entry(root, width=50, borderwidth=1)
TimestampEntry.grid(row=11, sticky=W)
TimestampEntry.insert(END, "Time/date (default filled in automatically from file)")

FilelengthStr = StringVar()
FilelengthStr.set("Filelength (bytes):")
FilelengthLabel = Label(root, textvariable=FilelengthStr)
FilelengthLabel.grid(row=12, sticky=W)

DurationLabelStr = StringVar()
DurationLabelStr.set("Duration: ")
DurationLabel = Label(root, textvariable=DurationLabelStr)
DurationLabel.grid(row=13, sticky=W)

Button(root, text='Go!', command=do_stuff).grid(row=14, sticky=W)

StatusText = StringVar()
StatusText.set("Status: Nothing to report")
StatusLabel = Label(root, textvariable=StatusText)
StatusLabel.grid(row=15, sticky=W)

# Hand control to Tkinter's event loop; the buttons call back into the script.
root.mainloop()
```

When we select an MP3 file, the open_file_dialog function runs. This function performs all the audio file manipulations and then passes the file size, duration, and date stamp to the label widgets in the interface through global variables. It's more straightforward to do the manipulations first because we want the metadata for the final file that we'll be uploading. This step may take a minute or so, depending on file sizes.

The Go! button then executes the remaining functions needed to publish the podcast, returning a status when the process appears to have successfully completed.

With those preliminaries out of the way, let's look at some of the specific tasks that the script performs. I'll mostly skip over housekeeping details associated with setting directory paths and things like that, and focus on the actual automation.

Add intro and outro. Time saved: 5 minutes per episode.

The first thing we do is back up the original file. This is good practice in case something goes awry. It also gives me a copy of the base file to send out for transcription, as I often do.

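```python
import shutil
from os import path

# Keep an untouched copy, e.g. episode.mp3 -> episode_original.mp3
FileBase, FileExtension = path.splitext(filename)
renameOriginal = FileBase + "_original" + FileExtension
shutil.copy2(filename, renameOriginal)
```

I then concatenate the MP3 file with the intro and outro audio. AudioSegment is a pydub class.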

```python
from pydub import AudioSegment

# leadIn and leadOut are the paths to the standard intro and outro clips
baseSegment = AudioSegment.from_mp3(filename)
introSegment = AudioSegment.from_mp3(leadIn)
outroSegment = AudioSegment.from_mp3(leadOut)

# "+" on AudioSegments concatenates them in order
completeSegment = introSegment + baseSegment + outroSegment

# Overwrite the edited file with the full episode (the original was backed up above)
completeSegment.export(filename, format="mp3")
```

The intro and outro are standard audio segments that I use to lead off and close a podcast. They consist of a short vocal segment combined with a few seconds of music. Adding these by hand would take at least a few minutes and would invite mistakes such as adding the wrong clip. I also create an OGG version of the podcast that I link to from my blog along with the MP3 file.
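The OGG step isn't shown in the code above. With pydub, it can be a second export of the same concatenated segment. This is a hedged sketch: the helper name is hypothetical, and it assumes your ffmpeg build includes the libvorbis encoder. It accepts any object with a pydub-style export() method, so you can try it without real audio files.

```python
from os import path

def export_ogg(segment, mp3_filename: str) -> str:
    """Write an OGG Vorbis copy next to the MP3 and return its filename.

    `segment` is expected to be a pydub AudioSegment (or anything with a
    compatible export() method). Assumes ffmpeg was built with libvorbis.
    """
    base, _ext = path.splitext(mp3_filename)
    ogg_filename = base + ".ogg"
    # AudioSegment.export(out_f, format=..., codec=...) hands the work to ffmpeg.
    segment.export(ogg_filename, format="ogg", codec="libvorbis")
    return ogg_filename
```

In the script's terms, this would be called as export_ogg(completeSegment, filename) right after the MP3 export, so both files contain the intro and outro.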

Get file metadata. Time saved: 3 minutes per episode.

We now get the file size, timestamp, and duration, converting them into the formats required for the podcast feed. The size and timestamp come from standard os.path functions. mpeg1audio provides the duration of the MP3 file.
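Podcast feeds expect `<pubDate>` in RFC 2822 format, and iTunes accepts `<itunes:duration>` as HH:MM:SS. As a hedged alternative to a hand-written strftime pattern, the standard library's email.utils.formatdate builds the date string with fixed English day and month names, so the result doesn't depend on the locale. The helper names below are hypothetical, and the duration helper assumes you already have the length in seconds.

```python
from email.utils import formatdate

def rfc2822_gmt(timestamp: float) -> str:
    """Format a POSIX timestamp as an RFC 2822 date in GMT, e.g. for <pubDate>."""
    # usegmt=True yields a trailing "GMT" instead of "+0000".
    return formatdate(timestamp, usegmt=True)

def itunes_duration(seconds: float) -> str:
    """Format a length in seconds as HH:MM:SS for <itunes:duration>."""
    total = int(round(seconds))
    hours, rest = divmod(total, 3600)
    minutes, secs = divmod(rest, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"
```

For example, rfc2822_gmt(path.getmtime(filename)) would produce a pubDate from the file's modification time.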

```python
import time
from os import path

import mpeg1audio

Filelength = path.getsize(filename)
FilelengthStr.set("Filelength (bytes): " + str(Filelength))

# pubDate needs an RFC 2822 date; %Y is the calendar year (%G, the ISO
# week-based year, can be off by one around New Year)
timestruc = time.gmtime(path.getmtime(filename))
TimestampEntry.delete(0, END)
TimestampEntry.insert(0, time.strftime("%a, %d %b %Y %H:%M:%S", timestruc) + " GMT")

mp3 = mpeg1audio.MPEGAudio(filename)
DurationStr = str(mp3.duration)
DurationLabelStr.set("Duration: " + DurationStr)
```

Build podcast feed XML file. Time saved: 8 minutes per episode.

This is really the big win. It's not even so much the time it takes to fire up a text editor and edit the XML file. It's that I so often get it wrong on the first try. And, because I so often get it wrong on the first try, I feel compelled to run the file through an XML validator before uploading it when I edit it by hand.
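A real feed validator checks RSS and iTunes-specific rules, but a quick well-formedness check catches the most common hand-editing mistakes, such as a stray ampersand or a missing closing tag. Here is a minimal sketch using the standard library's ElementTree. The helper name is hypothetical, and the check is not a substitute for a proper feed validator.

```python
import xml.etree.ElementTree as ET
from typing import Optional

def xml_problem(filename: str) -> Optional[str]:
    """Return None if the file is well-formed XML, else the parser's error message."""
    try:
        ET.parse(filename)
    except ET.ParseError as err:
        # The message includes the line and column of the first problem.
        return str(err)
    return None
```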

Now, in the interests of full disclosure, I should note that the script as written doesn't do anything about characters (such as ampersands) that must be escaped if they appear in a feed. For different reasons, you may also have issues if you cut and paste characters like curly quotes into the Summary edit box. In general, however, I can type the requested information into the GUI and be confident that the feed will be clean.
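Fixing the ampersand problem mentioned above can be as simple as escaping each field before it is written. Here is a hedged sketch using xml.sax.saxutils from the standard library: escape() handles element text, and quoteattr() handles attribute values such as the enclosure URL. The item_xml function and its parameters are hypothetical. It omits the fixed keywords and explicit tags that the script also writes.

```python
from xml.sax.saxutils import escape, quoteattr

def item_xml(title: str, subtitle: str, summary: str, mp3_url: str,
             length: int, pub_date: str, duration: str) -> str:
    """Build an <item> element with every user-supplied value escaped."""
    return (
        "<item>\n"
        f"<title>{escape(title)}</title>\n"
        f"<itunes:subtitle>{escape(subtitle)}</itunes:subtitle>\n"
        f"<itunes:summary>{escape(summary.strip())}</itunes:summary>\n"
        # quoteattr() escapes &, < and > and adds the surrounding quotes.
        f'<enclosure url={quoteattr(mp3_url)} length="{length}" type="audio/mpeg" />\n'
        f"<guid>{escape(mp3_url)}</guid>\n"
        f"<pubDate>{escape(pub_date)}</pubDate>\n"
        f"<itunes:duration>{escape(duration)}</itunes:duration>\n"
        "</item>"
    )
```

A title like "Q&A <live>" then comes out as `Q&amp;A &lt;live&gt;`, which feed readers display correctly.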

```python
import shutil
from os import path

# create an XML file containing contents for new <item> for iTunes
FileBase, FileExtension = path.splitext(filename)
XMLfilename = FileBase + '.xml'
MP3url = "https://s3.amazonaws.com/" + bucket_name + "/" + path.basename(filename)

with open(XMLfilename, 'w') as inp:
    inp.write("<item>\n")
    inp.write("<title>" + PodcastTitleEntry.get() + "</title>\n")
    inp.write("<itunes:subtitle>" + PodcastSubtitleEntry.get() + "</itunes:subtitle>\n")
    # "end-1c" drops the trailing newline that a Text widget always appends
    inp.write("<itunes:summary>" + PodcastSummaryText.get("1.0", "end-1c") + "</itunes:summary>\n")
    inp.write("<enclosure url=\"" + MP3url + "\" length=\"" + str(Filelength) + "\" type=\"audio/mpeg\" />\n")
    inp.write("<guid>" + MP3url + "</guid>\n")
    inp.write("<pubDate>" + TimestampEntry.get() + "</pubDate>\n")
    inp.write("<itunes:duration>" + DurationStr + "</itunes:duration>\n")
    inp.write("<itunes:keywords>cloud</itunes:keywords>\n")
    inp.write("<itunes:explicit>no</itunes:explicit>\n")
    inp.write("</item>")

# Now concatenate to make a new itunesxml.xml file

# create backup of existing iTunes XML file in case something goes kaka
iTunesBackup = path.join(theDirname, "itunesxmlbackup.xml")
shutil.copy2(iTunesFile, iTunesBackup)

# create temporary iTunes item list (to overwrite the old one later on):
# the new item first, followed by all existing items
with open("iTunestemp.xml", 'w') as outfile:
    for part in (XMLfilename, iTunesItems):
        with open(part) as f:
            for line in f:
                outfile.write(line)

# replace the old items file with the new one
shutil.copy2("iTunestemp.xml", iTunesItems)

# now we're ready to create the new iTunes file: header followed by all items
with open(iTunesFile, 'w') as outfile:
    for part in (iTunesHeader, iTunesItems):
        with open(part) as f:
            for line in f:
                outfile.write(line)
```

Upload to AWS S3. Time saved: 5 minutes per episode.

We have the modified audio files, and we have the feed file—it's time to put them where the world can listen to them. I use boto to connect with AWS S3 and upload the files.

It's pretty straightforward. You make the connection to S3. In this script, AWS credentials are assumed to be stored in your environment. The current version of boto, boto3, provides a number of alternative ways to handle credentials. The files are then uploaded and made public.
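The script still uses the original boto library. If you move to boto3, you can combine the upload and the ACL into one upload_file call per file by passing ExtraArgs. The sketch below is a hedged translation, not the script's code. The function name and content-type table are hypothetical, and credentials come from boto3's usual lookup chain, which includes environment variables. Be aware that newer buckets often have ACLs disabled by default. In that case, S3 rejects the public-read ACL and you would grant public read access through a bucket policy instead. The client is passed in as a parameter so you can try the function without touching AWS.

```python
from os import path

# Hypothetical content-type table; without one, S3 stores a generic binary type.
CONTENT_TYPES = {
    ".mp3": "audio/mpeg",
    ".ogg": "audio/ogg",
    ".xml": "application/rss+xml",
}

def upload_episode(s3_client, bucket_name: str, filenames, acl: str = "public-read"):
    """Upload each file under its base name and return the object keys used.

    s3_client is expected to be boto3.client("s3"). Pass acl="private" to
    check everything by hand before going live.
    """
    keys = []
    for filename in filenames:
        key = path.basename(filename)
        extra = {"ACL": acl}
        content_type = CONTENT_TYPES.get(path.splitext(filename)[1].lower())
        if content_type:
            extra["ContentType"] = content_type
        s3_client.upload_file(filename, bucket_name, key, ExtraArgs=extra)
        keys.append(key)
    return keys
```

In the script's terms, a call might look like upload_episode(boto3.client("s3"), bucket_name, [filename, iTunesFile]), with the OGG file added to the list.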

If you're trying out automation with an existing podcast, you're probably better off giving your feed file a name that doesn't conflict with your existing feed and uploading your files as private. This gives you the opportunity to manually check that everything went OK before going live. That's what I did at first. Over time, I tweaked things and gained confidence that I could just fire and (mostly) forget.

I still often take a quick look to confirm that there are no issues, but, honestly, problems are rare these days. And, if I were to take my own advice, I would take the time to fix a couple of remaining potential glitches that I know about: specifically, validating and cleansing input.

```python
import boto
from boto.s3.key import Key

# Upload files to Amazon S3
# Change 'public-read' to 'private' if you want to manually set ACLs
conn = boto.connect_s3()  # picks up AWS credentials from the environment
bucket = conn.get_bucket(bucket_name)
k = Key(bucket)

# the edited MP3 file
k.key = path.basename(filename)
k.set_contents_from_filename(filename)
k.set_canned_acl('public-read')

# the podcast feed file
k.key = path.basename(iTunesFile)
k.set_contents_from_filename(iTunesFile)
k.set_canned_acl('public-read')
```

Time saved

So where does this leave us? If I total up my estimated time savings, I come up with 21 minutes per episode. Sure, it still takes me a few minutes, but most of that is describing the episode in text and that needs to be done anyway. Even if we assign a less generous 15 minutes of savings per episode, that's been a good 1,500 minutes—25 hours—that I've saved over my 100 or so podcasts by spending a day or so writing a script.

But, honestly, I'm not sure that even that time figure captures the reality. Fiddly, repetitive tasks break up the day and consume energy. Automating everything doesn't make sense. But, usually, if you take the plunge to automate something that you do frequently, you won't regret it.
