One benefit of moving to an API-based architecture is that you can iterate quickly and deploy new changes to your services. An API gateway also establishes traffic management and routing for the modernized part of the architecture. It provides stages, which let you run multiple deployed APIs behind the same gateway, and it supports in-place updates with no downtime. Using an API gateway also lets you take advantage of its API management features, such as authentication, rate limiting, observability, API versioning, and stage deployment management (deploying an API to multiple stages such as dev, test, stage, and prod).

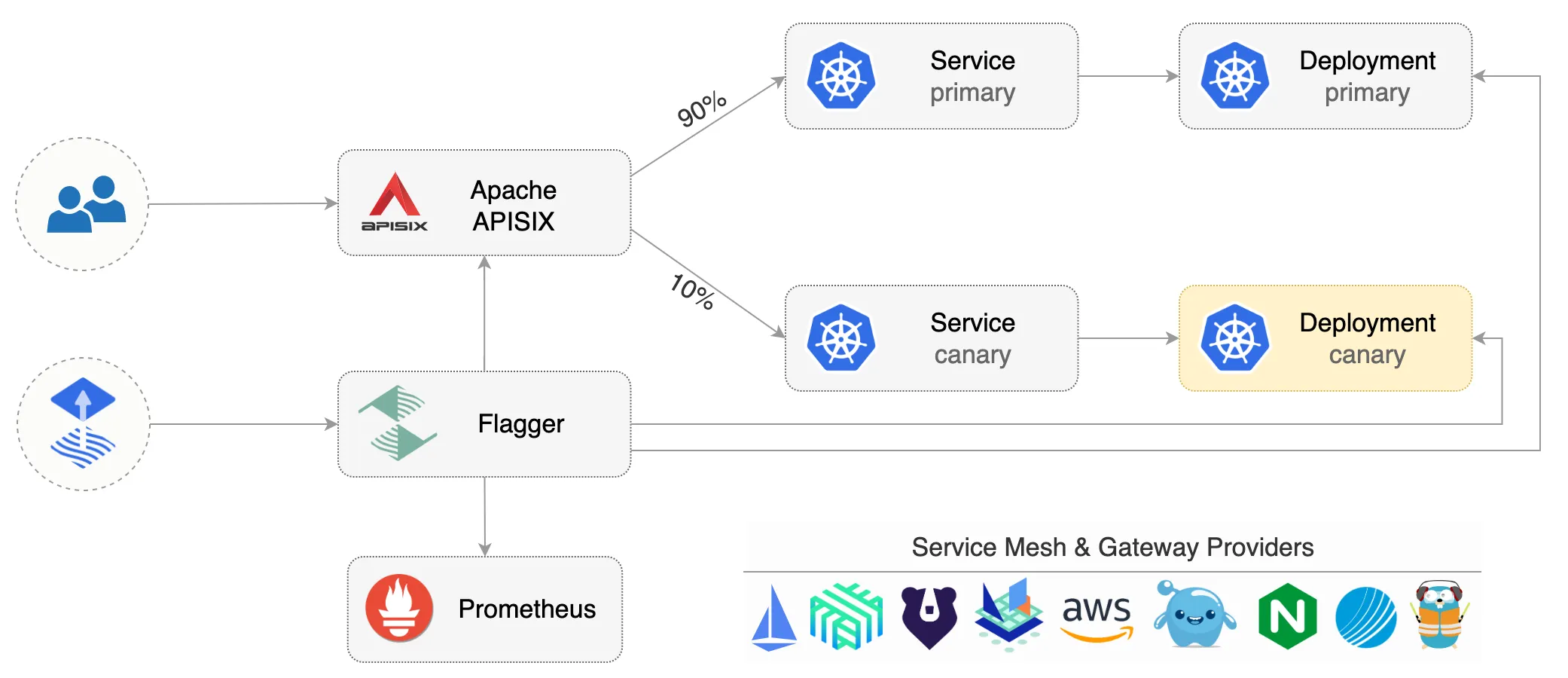

Open source API gateways (Apache APISIX and Traefik) and service meshes (Istio and Linkerd) can split traffic and implement release strategies such as canary release and blue green deployment. With canary testing, you can critically examine a new release of an API by exposing it to only a small portion of your user base.

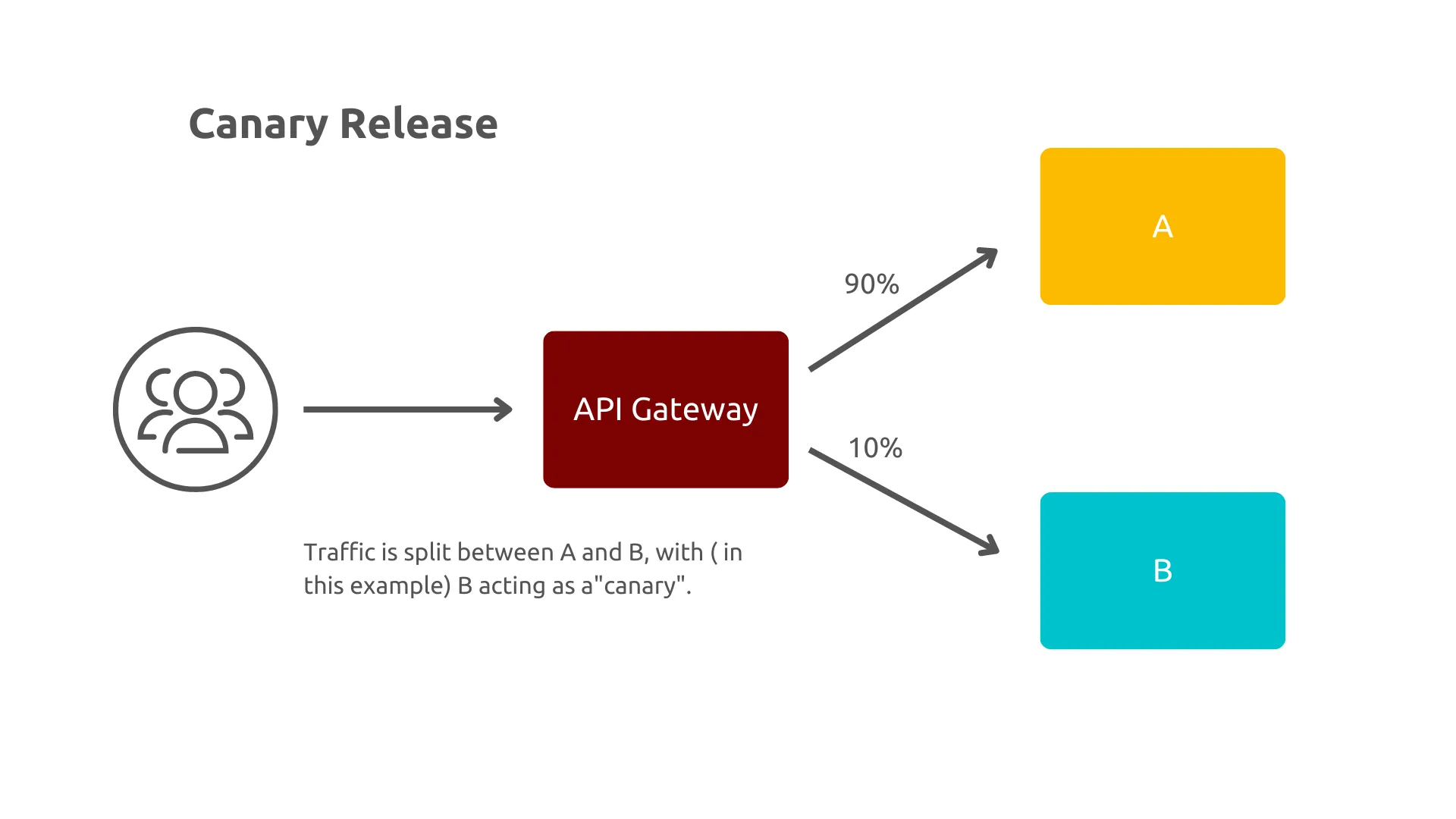

What is a canary release?

A canary release introduces a new version of the API and routes a small percentage of the traffic to it (the canary). In API gateways, traffic splitting makes it possible to gradually shift or migrate traffic from one version of a target service to another. For example, a new version, v1.1, of a service can be deployed alongside the original, v1.0. Traffic shifting enables you to canary test or release your new service by first routing only a small percentage of user traffic, say 1%, to v1.1, and then shifting the rest of your traffic to the new service over time.

(Bobur Umurzokov, CC BY-SA 4.0)
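The weighted split described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular gateway implements it: `pick_version` and `canary_schedule` are hypothetical helper names, and the doubling schedule is an assumption chosen only to show traffic shifting over time.

```python
import random
from collections import Counter


def pick_version(weights, rng):
    """Pick a version name with probability proportional to its weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


def canary_schedule(start=1, factor=2, cap=100):
    """Yield the canary's traffic share in percent until it reaches the cap."""
    if start <= 0 or factor <= 1:
        raise ValueError("start must be positive and factor greater than 1")
    pct = start
    while pct < cap:
        yield pct
        pct = min(pct * factor, cap)
    yield cap


# Route 10,000 simulated requests with 1% going to the canary (v1.1).
rng = random.Random(42)
counts = Counter(pick_version({"v1.0": 99, "v1.1": 1}, rng) for _ in range(10_000))
print(counts)

# One possible ramp from 1% to 100%.
print(list(canary_schedule()))
```

In practice each step of the schedule would only be taken after the canary has been observed to be healthy at the previous share.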

This allows you to monitor the new service for technical problems, such as increased latency or error rates, and to check for the desired business impact, such as an increase in key performance indicators like the customer conversion ratio or the average checkout value. Traffic splitting also enables you to run A/B or multivariate tests by dividing traffic destined for a target service between multiple versions of the service. For example, you can split traffic 50/50 across v1.0 and v1.1 of the target service and see which performs better over a specific period of time. Learn more about the traffic split feature in Apache APISIX Ingress Controller.
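For an A/B test, it usually helps if the same user keeps seeing the same version for the duration of the experiment. The sketch below shows one common way to get an even, sticky split by hashing a user identifier. The article does not prescribe this approach; `assign_variant` and the experiment name are hypothetical.

```python
import hashlib


def assign_variant(user_id, variants=("v1.0", "v1.1"), experiment="checkout-test"):
    """Deterministically map a user to one variant of an experiment."""
    # Hashing the experiment name with the user ID keeps assignments stable
    # within one experiment but independent across experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# The same user always lands on the same version.
print(assign_variant("user-123") == assign_variant("user-123"))
```

Because the assignment is a pure function of the user ID, no session state has to be stored at the gateway to keep the split consistent.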

When appropriate, canary releases are an excellent option, as the percentage of traffic exposed to the canary is highly controlled. The trade-off is that the system must have good monitoring in place to be able to quickly identify an issue and roll back if necessary (which can be automated). This guide shows you how to use Apache APISIX and Flagger to quickly implement a canary release solution.

(Bobur Umurzokov, CC BY-SA 4.0)
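The automated "monitor, then promote or roll back" decision mentioned above can be sketched as a simple comparison of canary metrics against the stable version. This is an illustrative assumption, not Flagger's actual analysis logic: the `Metrics` type, the thresholds, and the `evaluate_canary` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    requests: int
    errors: int
    p99_latency_ms: float

    @property
    def error_rate(self):
        return self.errors / self.requests if self.requests else 0.0


def evaluate_canary(stable, canary, max_error_delta=0.01,
                    max_latency_ratio=1.2, min_requests=100):
    """Return 'wait', 'rollback', or 'promote' for the current canary step."""
    if canary.requests < min_requests:
        return "wait"  # not enough traffic yet to judge the canary
    if canary.error_rate - stable.error_rate > max_error_delta:
        return "rollback"  # canary fails noticeably more often than stable
    if canary.p99_latency_ms > stable.p99_latency_ms * max_latency_ratio:
        return "rollback"  # canary is noticeably slower than stable
    return "promote"


stable = Metrics(requests=10_000, errors=50, p99_latency_ms=120.0)
print(evaluate_canary(stable, Metrics(requests=500, errors=3, p99_latency_ms=125.0)))
print(evaluate_canary(stable, Metrics(requests=500, errors=40, p99_latency_ms=125.0)))
```

Comparing against the stable version rather than fixed limits means a general incident that affects both versions does not automatically fail the canary.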

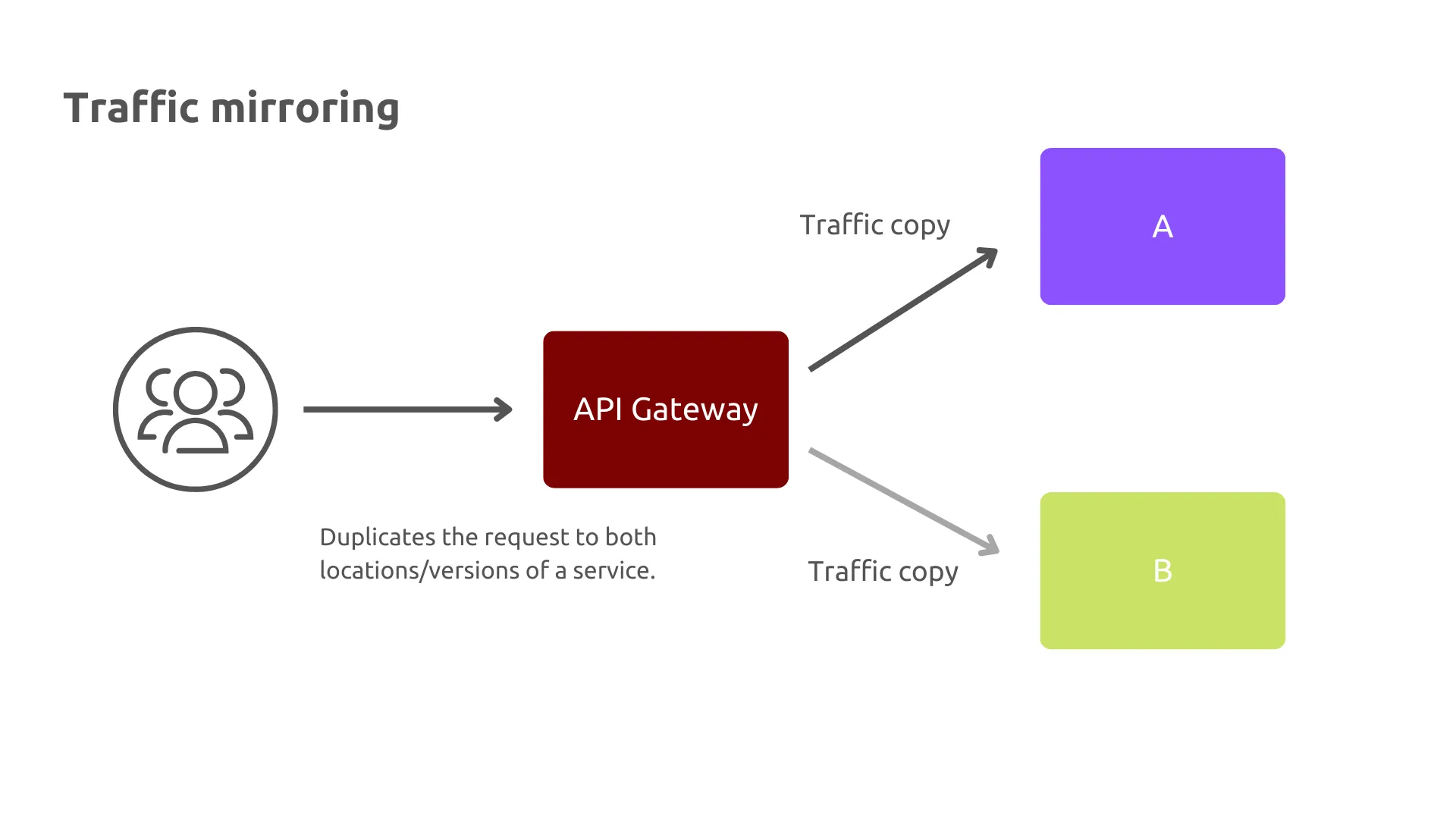

Traffic mirroring

In addition to using traffic splitting to run experiments, you can also use traffic mirroring to copy or duplicate traffic and send the copy to an additional location or a series of locations. Frequently with traffic mirroring, the results of the duplicated requests are not returned to the calling service or end user. Instead, the responses are evaluated out-of-band for correctness. For instance, you can compare the results generated by a refactored service with those of the existing service.

(Bobur Umurzokov, CC BY-SA 4.0)
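The mirroring flow above can be illustrated with a small simulation. It is a sketch under simplifying assumptions: the mirrored call runs inline here, whereas a real gateway duplicates requests without blocking the caller, and the two order-total functions are hypothetical stand-ins for an existing service and its refactored replacement.

```python
def mirror_request(request, primary, shadow, mismatches):
    """Serve the request from primary, copy it to shadow, and record any differences."""
    response = primary(request)
    try:
        shadow_response = shadow(request)
        if shadow_response != response:
            mismatches.append((request, response, shadow_response))
    except Exception as exc:
        # A failure in the mirrored service must never affect the caller.
        mismatches.append((request, response, exc))
    return response  # only the primary's response reaches the caller


def existing_total(order):
    return order["qty"] * order["unit_price"]


def refactored_total(order):
    # Hypothetical refactor with a bug: quantities above 10 are capped.
    return min(order["qty"], 10) * order["unit_price"]


mismatches = []
for order in ({"qty": 2, "unit_price": 5}, {"qty": 12, "unit_price": 5}):
    mirror_request(order, existing_total, refactored_total, mismatches)
print(mismatches)
```

The caller only ever sees the existing service's answer, while the mismatch list gives you evidence about the refactored service before any user depends on it.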

Using traffic mirroring enables you to "dark release" services: users are kept in the dark about the new release, while you observe it internally for the desired effect.

Implementing traffic mirroring at the edge of systems has become increasingly popular over the years. APISIX offers the proxy-mirror plugin to mirror client requests. It duplicates the real online traffic to the mirroring service and enables specific analysis of the online traffic or request content without interrupting the online service.

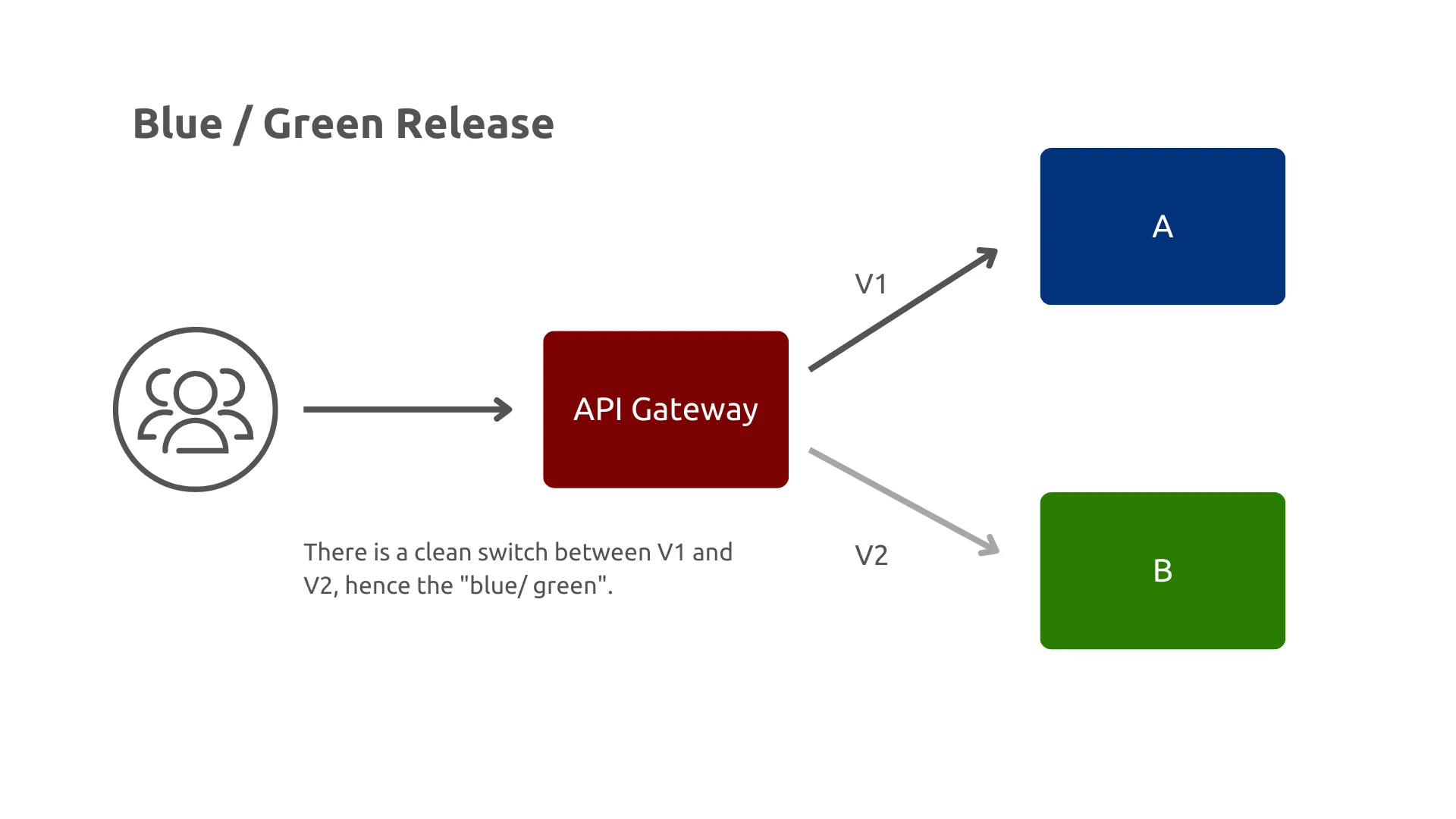

What is a blue green deployment?

Blue green deployment is usually implemented at a point in the architecture that uses a router, gateway, or load balancer. Behind this sit a complete blue environment and a complete green environment. The blue environment is the current live environment, and the green environment is the next version of the stack. The green environment is checked before live traffic is switched to it. At go-live, traffic is flipped from blue to green. The blue environment no longer receives traffic, but if a problem is spotted, rolling back is quick because traffic can be flipped back. The next release goes from green to blue, and the two environments alternate from the first release onward.

(Bobur Umurzokov, CC BY-SA 4.0)
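The flip-and-rollback behavior described above can be sketched as a tiny router that holds both environments and a pointer to the live one. This is an illustrative model only; `BlueGreenRouter` and the health check callback are hypothetical names, and the environments are plain functions standing in for full stacks.

```python
class BlueGreenRouter:
    """Send all traffic to one of two environments and allow flipping between them."""

    def __init__(self, blue, green, live="blue"):
        self.backends = {"blue": blue, "green": green}
        if live not in self.backends:
            raise ValueError("live must be 'blue' or 'green'")
        self.live = live

    @property
    def idle(self):
        return "green" if self.live == "blue" else "blue"

    def route(self, request):
        return self.backends[self.live](request)

    def switch(self, health_check):
        """Flip traffic to the idle environment only if it passes the check."""
        candidate = self.idle
        if not health_check(self.backends[candidate]):
            return False
        self.live = candidate
        return True

    def rollback(self):
        """Flip traffic back to the previously live environment."""
        self.live = self.idle


router = BlueGreenRouter(blue=lambda req: "v1.0", green=lambda req: "v1.1")
router.switch(health_check=lambda backend: backend("ping") == "v1.1")
print(router.route("GET /orders"))
router.rollback()
print(router.route("GET /orders"))
```

Rollback is cheap in this model because the previous environment is still deployed; only the pointer changes.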

Blue green works well due to its simplicity, and it is one of the better deployment options for coupled services. It is also easier to manage persisting services, though you still need to be careful in the event of a rollback. The cost is that it requires double the resources, because the idle environment runs cold in parallel with the currently active one.

Traffic management with Argo Rollouts

The strategies discussed add a lot of value, but the rollout itself is not a task you want to manage manually. This is where a tool such as Argo Rollouts is valuable, and it also serves to demonstrate some of the concerns discussed.

Using Argo Rollouts, you can define a Rollout custom resource that represents the strategy to take when rolling out a new canary of your API. A custom resource definition (CRD) allows Argo to extend the Kubernetes API to support rollout behavior. CRDs are a popular pattern in Kubernetes: they let users keep working with a single API while extending it to support additional features.
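To make the shape of such a resource concrete, the sketch below builds a minimal Rollout manifest as a Python dictionary and prints it as JSON, which Kubernetes accepts alongside YAML. The `setWeight` and `pause` canary steps are part of the Argo Rollouts specification; the service name, image, replica count, and chosen weights are assumptions for illustration, and gateway-specific traffic routing settings are left out.

```python
import json


def canary_rollout(name, image, weights=(20, 50), pause="60s"):
    """Build a minimal Argo Rollouts manifest with a stepped canary strategy."""
    steps = []
    for weight in weights:
        steps.append({"setWeight": weight})            # shift this share to the canary
        steps.append({"pause": {"duration": pause}})   # hold so the canary can be observed
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Rollout",
        "metadata": {"name": name},
        "spec": {
            "replicas": 3,  # hypothetical replica count
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
            "strategy": {"canary": {"steps": steps}},
        },
    }


# Hypothetical service name and image.
print(json.dumps(canary_rollout("orders-api", "example.com/orders-api:1.1"), indent=2))
```

Once the last step completes, the rollout is promoted and the new version receives all traffic.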

You can use Apache APISIX and the Apache APISIX Ingress Controller for traffic management with Argo Rollouts. This guide shows you how to integrate ApisixRoute with Argo Rollouts, using it as a weighted round-robin load balancer.
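Weighted round-robin itself is simple to sketch. The version below is the "smooth" variant popularized by NGINX, which interleaves picks instead of sending bursts to the heaviest backend. It is shown only to illustrate the idea; the article does not state which variant the gateway uses, and the class and backend names are hypothetical.

```python
class SmoothWeightedRoundRobin:
    """Pick backends in proportion to their weights, spreading picks evenly."""

    def __init__(self, weights):
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("weights must be a non-empty mapping of positive numbers")
        self.weights = dict(weights)
        self.current = {name: 0 for name in self.weights}
        self.total = sum(self.weights.values())

    def next(self):
        # Every backend gains its weight; the leader is picked and then
        # pays back the total, so it has to wait before winning again.
        for name, weight in self.weights.items():
            self.current[name] += weight
        chosen = max(self.current, key=self.current.get)
        self.current[chosen] -= self.total
        return chosen


balancer = SmoothWeightedRoundRobin({"stable": 4, "canary": 1})
print([balancer.next() for _ in range(5)])
```

Raising the canary's weight step by step, as a rollout controller would, shifts a growing share of requests to the new version without changing the selection logic.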

Summary

The ability to separate the deployment and release of a service (and its corresponding API) is a powerful technique, especially with the rise of progressive delivery. A canary release can make use of the API gateway's traffic split and mirroring features, and it provides a competitive advantage. This helps your business both mitigate the risk of a bad release and understand your customers' requirements.

This article was originally published on the API7.ai blog and has been republished with permission.
