Continuous integration (CI) and continuous delivery (CD) are usually associated with DevOps, DevSecOps, artificial intelligence for IT operations (AIOps), GitOps, and more. It's not enough to just say you're doing CI and CD; there are certain best practices that, if used well and consistently, will make your CI/CD pipelines more successful.

The phrase "best practices" refers to the steps, processes, and iterations that should be carried out to get the best results from something like software or product delivery. In CI/CD, it also covers how monitoring is configured to support design and deployment.

A deployment pipeline is a good example of this. It includes the steps to build, test, and deploy:

- Application software

- Systems, servers, and devices

- Configuration updates (e.g., firewalls)

- Anything else

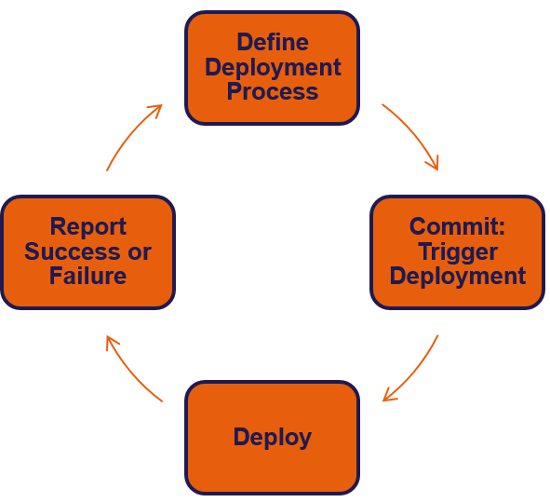

Its purpose is to automate deployment by:

- Using a tool to automate individual tasks, resulting in a string of automated tasks (a toolchain)

- Using a tool to orchestrate the whole process or toolchain

- Continually expanding and improving the toolchain
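The idea of a tool orchestrating a string of automated tasks can be sketched in a few lines of Python. This is only an illustration, not how any particular CI server works: the `Stage` class, the `run_pipeline` function, and the placeholder tasks are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    """One automated task in the toolchain (hypothetical structure)."""
    name: str
    task: Callable[[], bool]  # returns True on success, False on failure


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for stage in stages:
        if not stage.task():
            print(f"Stage '{stage.name}' failed; stopping the pipeline.")
            break
        completed.append(stage.name)
    return completed


# Placeholder tasks; a real pipeline would call build, test, and deploy tools.
pipeline = [
    Stage("build", lambda: True),
    Stage("test", lambda: True),
    Stage("deploy", lambda: True),
]
```

Calling `run_pipeline(pipeline)` runs the three stages in order. Stopping at the first failed stage is a design choice made here so that nothing is deployed after a failed build or test.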

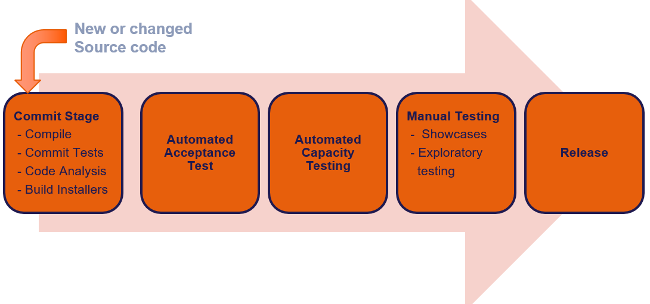

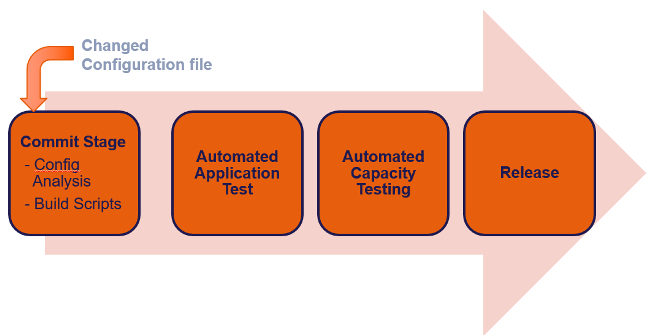

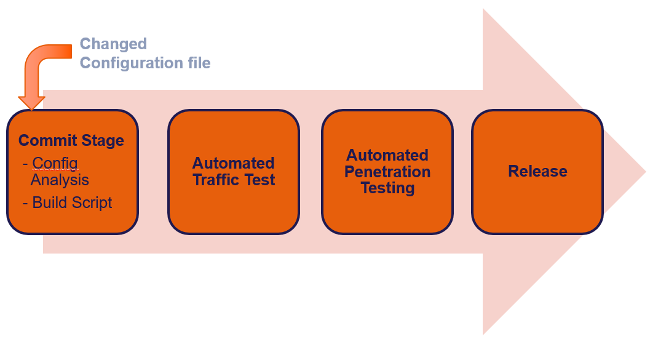

The following are examples of different deployment pipelines.

Applications deployment pipeline

(Taz Brown, CC BY-SA 4.0)

Server configuration deployment pipeline

(Taz Brown, CC BY-SA 4.0)

Firewall configuration deployment pipeline

(Taz Brown, CC BY-SA 4.0)

Database CI is another example of CI enabling pipeline deployment and continuous delivery. Database changes tend to complicate CI, but database continuous integration (DBCI) can simplify them by using an agile cross-functional team that includes a database administrator, treating the "database as code," and refactoring databases using object-oriented (OO) principles.

There are a variety of best practices in CI/CD that will improve your workflow and outcomes. In this article, I will explore some of them.

Pipelines and tools

CI/CD best practices involve the combination of an effective, efficient deployment pipeline and orchestration tools, such as Jenkins, GitLab, and Azure Pipelines. The benefits include increased velocity, reduced risk of human error, and self-service for teams.

Taz Brown, CC BY-SA 4.0

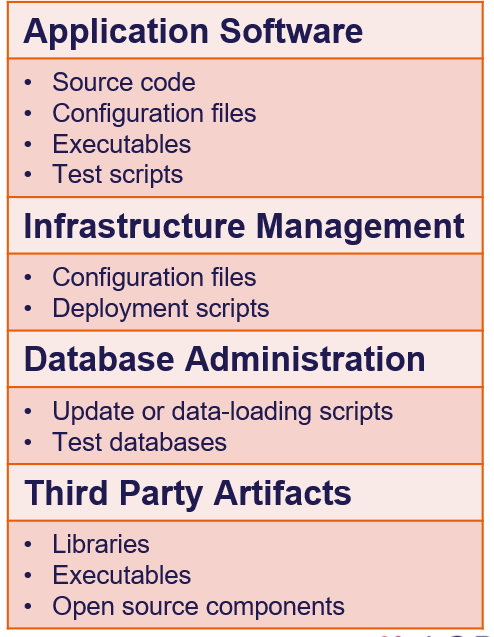

Version control

Using shared version control is a best practice that provides a single source of truth for all teams (e.g., development, quality assurance [QA], information security, operations, etc.) and puts all artifacts in one repository. Version control must also be secure, utilizing standards such as encrypted repositories, signed check-ins, and separation of duties (e.g., two people required to commit). Some things you could expect in version control include:

Taz Brown, CC BY-SA 4.0

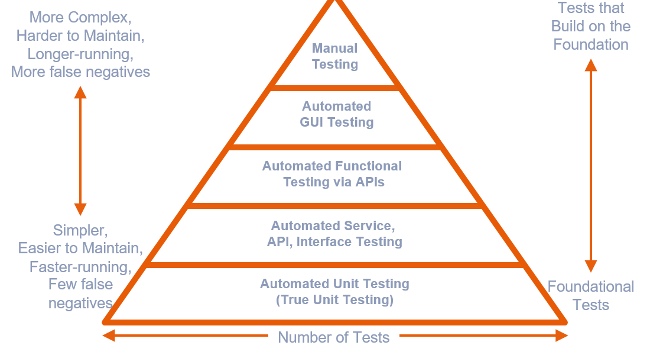
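As a rough illustration of signed check-ins and separation of duties, the sketch below models a merge gate in Python. The `Change` class and `may_merge` function are hypothetical and do not correspond to any version control system's API; real platforms usually enforce such rules through their own branch protection or review settings.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Change:
    """A proposed check-in (hypothetical model for illustration)."""
    author: str
    signed: bool
    approvers: Set[str] = field(default_factory=set)


def may_merge(change: Change) -> bool:
    """Allow a merge only if the check-in is signed and at least one
    person other than the author has approved it."""
    independent_approvers = change.approvers - {change.author}
    return change.signed and len(independent_approvers) >= 1
```

For example, `may_merge(Change("alice", True, {"bob"}))` is allowed, while a change approved only by its own author is not.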

QA and testing

CI/CD best practices extend to quality management, as well. Deployment pipelines must test, test, test, and test some more to mitigate defects with a sense of urgency and an eye on speed to recovery. Put your focus on quality-related principles, such as:

- "Shift left" testing

- Small batches, frequent releases, learning from escaped defects

- Five layers of security testing

- Software vs. infrastructure testing

Best practices demand that testing be automated to detect issues faster and mitigate them even faster. Some tests include:

Taz Brown, CC BY-SA 4.0

Testing should cover the code itself, particularly through code analysis and automated testing tools.

Common code-analysis tools include:

- Language-specific

– Python: Pylint & Pyflakes

– Java: PMD, Checkstyle

– C++: Cppcheck, Clang-Tidy

– PHP: PHP_CodeSniffer, PHPMD

– Ruby: RuboCop, Reek

- Non-language specific

– SonarQube: 20+ languages

– Security scanners

– Complexity analyzers

Common automated testing tools include:

- Unit testing

– JUnit, NUnit

- Functional or performance testing

– HP ALM

– Cucumber

– Selenium

- Security testing

– Penetration test tools

– Active analysis tools
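To make the unit-testing category concrete, here is a small automated test written with Python's built-in `unittest` module, which plays a role similar to JUnit or NUnit. The `parse_version` function is a made-up example of code under test, not something from a real project.

```python
import unittest


def parse_version(tag: str) -> tuple:
    """Turn a release tag such as 'v1.4.2' into (1, 4, 2)."""
    parts = tag.lstrip("v").split(".")
    if len(parts) != 3:
        raise ValueError(f"expected MAJOR.MINOR.PATCH, got {tag!r}")
    return tuple(int(p) for p in parts)


class ParseVersionTest(unittest.TestCase):
    def test_tag_with_prefix(self):
        self.assertEqual(parse_version("v1.4.2"), (1, 4, 2))

    def test_tag_without_prefix(self):
        self.assertEqual(parse_version("2.0.10"), (2, 0, 10))

    def test_rejects_incomplete_tag(self):
        with self.assertRaises(ValueError):
            parse_version("v1.4")
```

Saved in a file, these tests can be run with `python -m unittest`, which is the kind of command a pipeline stage would call on every check-in.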

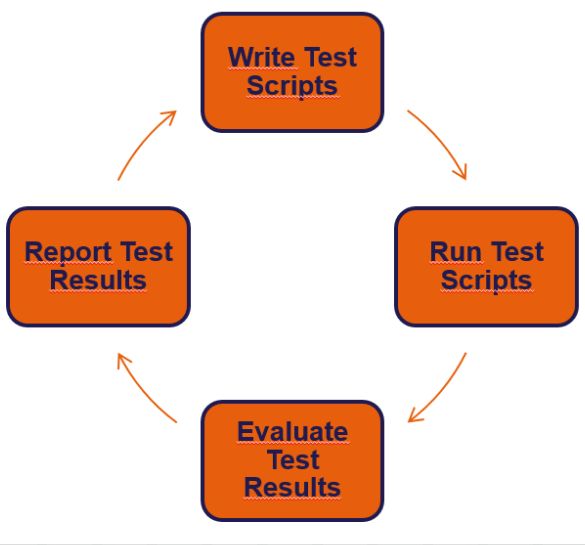

Taz Brown, CC BY-SA 4.0

Testing your own processes

Teams should be persistent in doing CI, and you can check how well your team is doing by answering the following questions:

- Do developers check into the trunk at least once a day?

- Does every check-in trigger an automated build and testing (both unit and regression)?

- If the build is broken or tests fail, is the problem solved in a few minutes?
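The questions above can be turned into simple checks against data your tools already record. The sketch below assumes you can export check-in dates and build repair times; the function names and the 10-minute limit are assumptions made for illustration, and the daily check counts every calendar day, including weekends.

```python
from datetime import date, timedelta
from typing import List


def checks_in_daily(checkin_dates: List[date], start: date, end: date) -> bool:
    """True if there is at least one trunk check-in on every day
    from start to end, inclusive."""
    days_with_checkins = set(checkin_dates)
    day = start
    while day <= end:
        if day not in days_with_checkins:
            return False
        day += timedelta(days=1)
    return True


def fixed_quickly(minutes_to_fix: List[float], limit_minutes: float = 10.0) -> bool:
    """True if every broken build was fixed within the limit.

    The default limit is an assumption; pick what "a few minutes"
    means for your team.
    """
    return all(m <= limit_minutes for m in minutes_to_fix)
```

A team could run checks like these weekly and discuss any `False` result in a retrospective.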

Metrics, monitoring, alerting

All of the following should be automated and continuous in a CI/CD pipeline:

- Metrics are data that can be captured and are useful for detecting events (changes in the state of something relevant) and understanding the current state and history.

- Monitoring involves watching metrics to identify events that are of interest.

- Alerting notifies people when an event requires their action.

Incorporating telemetry, a "highly automated communications process by which measurements are made and other data collected at remote or inaccessible points and transmitted to receiving equipment for monitoring, display, and recording," is one option to build continuous metrics, monitoring, and alerting into your CI/CD pipelines.
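The relationship between the three terms can be shown with a minimal Python sketch. It assumes a simple threshold rule for deciding which events matter; the `Metric` class, the metric names, and the threshold values are hypothetical, and a real setup would rely on a monitoring system rather than hand-written functions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Metric:
    """A captured data point (hypothetical structure)."""
    name: str
    value: float


def monitor(metrics: List[Metric], thresholds: Dict[str, float]) -> List[Metric]:
    """Watch metrics and return the ones that exceed their threshold.

    Metrics without a configured threshold are ignored.
    """
    return [
        m for m in metrics
        if m.name in thresholds and m.value > thresholds[m.name]
    ]


def alert(events: List[Metric], notify: Callable[[str], None]) -> None:
    """Notify people about each event that needs their action."""
    for event in events:
        notify(f"{event.name} is at {event.value}, above its threshold")


# Example values are invented for illustration.
metrics = [Metric("build_minutes", 42.0), Metric("failed_tests", 0.0)]
thresholds = {"build_minutes": 15.0, "failed_tests": 0.0}
```

Passing `print` as the `notify` argument is enough to try it out; in practice that callable would post to a chat channel or paging tool.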

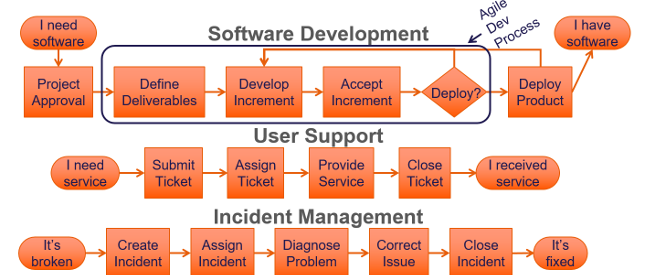

Value stream and value-stream mapping

Identifying value streams and then mapping them can help an organization and teams when trying to solve problems. It also helps establish value for customers through activities that take a product or service from its beginning all the way through to the customer. Value stream examples include:

- Software development

- User support

- Incident management

Taz Brown, CC BY-SA 4.0

Infrastructure as code

Treating infrastructure as code (IaC) is a best practice that has many benefits for CI and CD, such as:

- Providing visibility about application-infrastructure dependencies

- Allowing testing and staging in production-like environments earlier in development projects, which strengthens agile teams' definition of "done"

- Understanding that infrastructure is easier to build than to repair

– Physical systems: Wipe and reconfigure

– Virtual and cloud: Destroy and recreate (e.g., Netflix's Doctor Monkey)

- Complying with the ideal continuity plan, which according to Adam Jacob, CTO at Chef, "enable[s] the reconstruction of the business from nothing but a source code repository, an application data backup, and bare-metal resources"
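The "build rather than repair" idea can be sketched as a comparison between the desired state kept in version control and what is actually running. This is a simplified illustration in Python, not the behavior of any specific IaC tool; `plan_changes`, the server names, and the spec fields are all hypothetical.

```python
from typing import Dict, List, Tuple

Spec = Dict[str, str]


def plan_changes(desired: Dict[str, Spec],
                 actual: Dict[str, Spec]) -> List[Tuple[str, str]]:
    """Compare desired and actual state and return a list of actions.

    Drifted servers are recreated from their definition instead of
    being repaired in place.
    """
    plan = []
    for name in sorted(desired):
        if name not in actual:
            plan.append(("create", name))
        elif actual[name] != desired[name]:
            plan.append(("recreate", name))
    for name in sorted(actual):
        if name not in desired:
            plan.append(("destroy", name))
    return plan


# Desired state as it might be stored in the repository (invented values).
desired = {
    "web-1": {"image": "app:1.4.2", "size": "small"},
    "web-2": {"image": "app:1.4.2", "size": "small"},
}
# What is currently running (invented values).
actual = {
    "web-1": {"image": "app:1.4.1", "size": "small"},      # drifted
    "old-batch": {"image": "batch:0.9", "size": "large"},  # not in the repo
}
```

With these inputs, `plan_changes(desired, actual)` returns `[("recreate", "web-1"), ("create", "web-2"), ("destroy", "old-batch")]`. Because the plan is derived entirely from the repository contents, the same definitions can be used to rebuild an environment from scratch.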

Amplify feedback

Building in the ability to amplify feedback is another CI/CD best practice. One approach to working safely within complex systems is to:

- Manage in a way that reveals errors in design and operation

- Swarm and resolve problems so that new knowledge is built quickly

- Make new local knowledge explicit globally throughout the organization

- Create leaders who continue these conditions

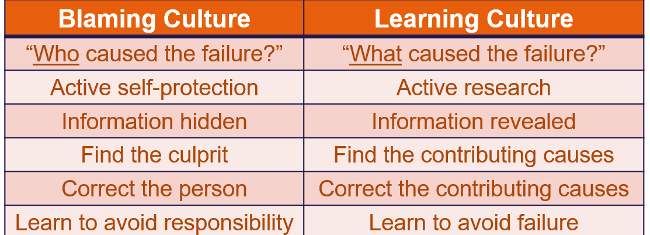

Response to failure

Best practices around responding to failure should be built into a build, test, deploy, analysis, and design system. This is not only about considering which failure-response systems should be designed, built, and deployed; the ways organizations or teams will respond should also be well thought out and executed.

The ideal culture considers failures to be features and an opportunity to learn, rather than a way to place blame. Blameless retrospectives or postmortems are a best practice that allows teams to focus on facts, consider many perspectives, assign action items, and learn.

Creating a culture of learning and innovation around continuous integration and delivery is another best practice that will result in continuous experimentation and improvement in the short term and sustainability, reliability, and stability in the long run.

Taz Brown, CC BY-SA 4.0

Leaders can create the necessary conditions for this cultural shift in a number of ways:

- Make postmortems blameless

- Value learning and problem-solving

- Set strategic improvement goals

- Teach and coach team members in:

– Problem-solving skills

– Experimentation techniques

– Practicing failures

Best practices involve winning and losing, failing fast, removing barriers, fine-tuning systems, breaking complex software by using popular tools, and, more importantly, driving people to respond to problems quickly, decisively, and creatively.

It's about creating a culture where people expect more. It's about shifting your mindset to deliver on what's promised and deliver value in ways that delight.

Summary

Building out a CI/CD pipeline is a test of resiliency, persistence, drive, and fortitude to automate when it's called for and pull back when it's not. It's about being able to make a determination because it's in the best interest of your systems, dependencies, teams, customers, and organizations.
