Scientific software tools have long lived in the conflict zone between open source ideals and proprietary exploitation. The values of science (openness, transparency, and free exchange) are at odds with the desire of individuals and organizations to turn scientific tools into commercial products. This has been a problem in neuropsychology and neuroscience for decades, and it extends beyond software.

Typically, a psychological test (for example, a set of questions used to identify a personality trait) is developed over a series of experiments to produce a coherent, sensitive, and valid predictor of the trait. The questions themselves are often not useful until these psychometric properties have been assessed in a large sample of people, a process that typically involves research sponsored by a federal agency. The resulting norms may be published and used as a reference by anyone with access to them, but the test itself is intellectual property owned by the researcher, university, or other organization that developed it. This creates a conflict in which public funding is used to develop tests that may be available only commercially, producing a publicly subsidized system of rent collectors and sharecroppers.

Many tests live in an area of benign neglect where they are treated as 'open source' even though they really are not. One well-known example is the Mini-Mental State Exam (MMSE), introduced by Folstein et al. in 1975. Physicians, psychologists, and psychiatrists found it incredibly useful: a fast (5-minute), free test that could reliably tell whether someone was suffering from cognitive impairment (perhaps because of a stroke or brain injury). It was widely used, copied, and redistributed for decades, was the subject of thousands of follow-up research studies, and became the de facto standard for screening.

However, after the test became widespread, the original authors licensed it to a company that charges a small fee for each use (see Newman and Feldman, 2011). The value of the test derives as much from the community that has used and studied it over the past decades as from the original research that developed it, yet the test is now protected, and efforts to develop a replacement (such as the Sweet 16; Fong et al., 2011) have been accused of copyright violation by the MMSE copyright holders.

One solution to these problems is for researchers to develop and promote open source tests. But developing open source psychological tests faces a number of obstacles. These include: competition from tests that are free now but may not always be; the reluctance of clinicians and researchers to use new tests without available norms; the difficulty of obtaining funding to develop norms for work-alike tests; the threat of legal action against work-alike tests; and even the difficulty of publishing those norms in journals that view such research as not novel enough.

Unfortunately, the costs of this situation are substantial, and they are borne by the health care system (which must pay licenses for clinical tests) or by the federal agencies whose research grants pay for licenses to use those tests. My own research aims to improve this situation by bringing open source software to the psychology community.

Ten years ago, I began developing an open source software platform for psychological and neuroscience tests and experiments, called the Psychology Experiment Building Language (PEBL). PEBL was possible only because it built on a number of other open source projects, including the GNU C++ compiler, GNU Bison, and Flex; the excellent SDL gaming libraries (including SDL_image, SDL_ttf, SDL_net, and the third-party SDL_gfx and waave libraries); and other open source tools for creating image and sound stimuli (GIMP, Inkscape, and Audacity).

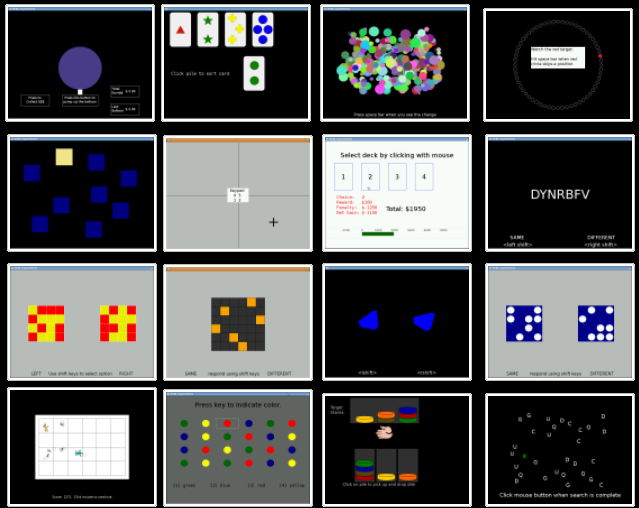

As part of the PEBL system, we distribute a test battery of close to 80 cognitive and behavioral tests. These tests implement experimental and clinical paradigms, some of which date back over 100 years, and include tests that work similarly to a number of commercially available tests costing hundreds or thousands of dollars. These tests resemble games more than question-based personality or screening tests like the MMSE, so the intellectual property laws that apply to games and software are most relevant: the logic and mechanics of a game or test can be patented (and protected for only a limited time), whereas its expression (questions, images, sounds) is covered by copyright law, which offers a much longer period of protection. Because copyright covers only the expression, new imagery, sounds, and stimuli can often be created without infringing it. In addition, PEBL avoids protected trademarks and does not implement patented testing protocols. Luckily, only a few of our tests have commercial competitors, and besides being free, many of the tests we distribute are technical improvements over their alternatives. Yet there are hundreds of tests that we cannot implement because doing so would certainly violate copyright or patent law.

PEBL has brought open source tools to many scientists and clinicians in the US and abroad, who are not only grateful but often surprised that the tests are legal. Because of aggressive protection of copyrighted material and a guild-like mentality among clinical practitioners, many clinicians believe it is wrong to use such tests, often assuming that someone else owns the test and has exclusive rights to distribute it. This impression is only sometimes correct. The truth is that our entire society owns the knowledge embodied in many psychological tests, and we should all be able to use it freely. PEBL is an attempt to improve this access.

References

Folstein, M. F., Folstein, S. E., & McHugh, P. R. (1975). "Mini-mental state": A practical method for grading the cognitive state of patients for the clinician. Journal of Psychiatric Research, 12(3), 189-198.

Fong, T. G., Jones, R. N., Rudolph, J. L., et al. (2011). Development and validation of a brief cognitive assessment tool: The Sweet 16. Archives of Internal Medicine, 171(5), 432-437. doi:10.1001/archinternmed.2010.423

Newman, J. C., & Feldman, R. (2011). Copyright and open access at the bedside. New England Journal of Medicine.
